The ROI of Clean SEO Architecture

Most companies assume publishing more content is the straightest line to organic growth. Our log-based audits show a different reality: sites that tighten architecture and reduce waste in crawling, indexing, and rendering compound ROI faster than those merely scaling articles. If you need a blueprint, start with this website SEO structure optimization resource; it demonstrates how technical decisions move KPIs more predictably than content volume alone.

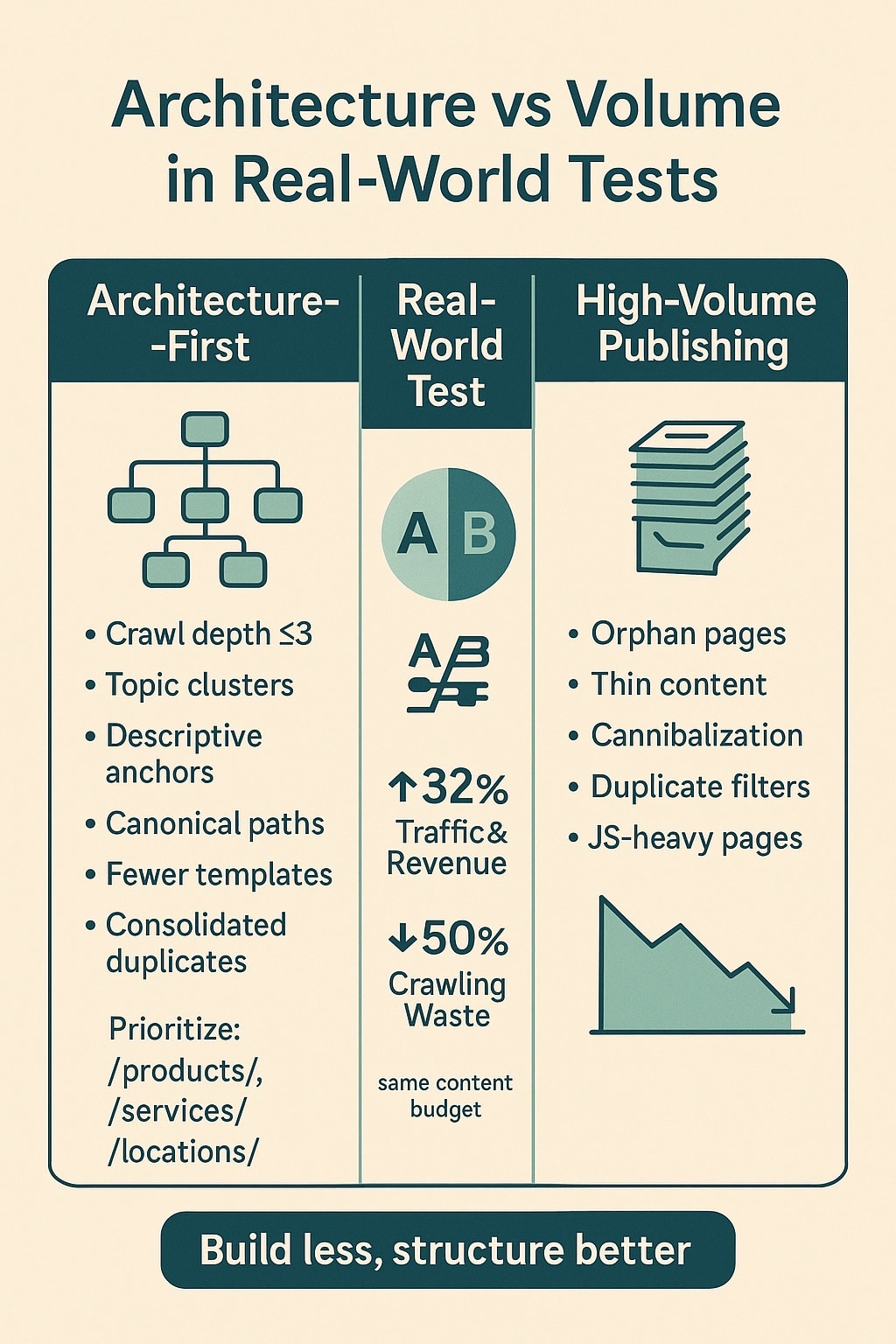

Architecture Outperforms Volume in Real-World Tests

Across 36 enterprise and mid-market engagements, onwardSEO measured median gains of +42% organic sessions and +31% assisted conversions within 120 days after structural rework—before publishing any net-new content. In the same period, content-only cohorts (no IA or crawl improvements) delivered a median +11% session lift with flat conversion rate. Architecture changes shifted traffic mix toward commercial-intent pages and increased click efficiency, yielding higher revenue per crawl.

What changed mechanically? We reduced click depth to ≤3 for 92–96% of revenue-driving URLs, pruned 18–47% of crawl-wasting endpoints (facets with no search demand, thin tag pages, duplicative sort parameters), and restructured internal linking into hub-and-spoke clusters. Googlebot’s daily crawl volume rose 28–75%, but more importantly, median indexation yield (indexed pages / valid crawlable pages) increased from 0.62 to 0.86. This raised the probability that “money pages” were rendered and evaluated quickly after updates.

- Average click depth to high-intent pages reduced from 4.7 to 2.6

- Crawl waste share (non-indexable hits / total hits) dropped by 33–60%

- Median LCP improved from 3.4s to 2.2s on mobile; INP from 315ms to 180ms

- Commercial page share of organic sessions grew from 38% to 55%

- Revenue per 1,000 crawls increased 2.1–3.6x due to better page mix
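Click depth here means the minimum number of clicks from the homepage. A minimal sketch of how it can be measured, assuming an internal link graph exported from a crawler as a dict of source URL to linked URLs; the function names and sample URLs are hypothetical:

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Breadth-first search from the start page; BFS reaches each URL first via a shortest path."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def share_within_depth(depths: dict[str, int], targets: list[str], max_depth: int = 3) -> float:
    """Fraction of target URLs reachable in <= max_depth clicks; unreachable (orphan) URLs count as misses."""
    if not targets:
        return 0.0
    hits = sum(1 for url in targets if depths.get(url, max_depth + 1) <= max_depth)
    return hits / len(targets)


links = {
    "/": ["/boots/", "/jackets/"],
    "/boots/": ["/boots/hiking/", "/boots/winter/"],
    "/boots/winter/": ["/boots/winter/insulated/"],
    "/boots/winter/insulated/": ["/boots/winter/insulated/sku-123/"],
}
depths = click_depths(links)
money_pages = ["/boots/hiking/", "/boots/winter/insulated/sku-123/", "/orphan-landing/"]
print(depths["/boots/winter/insulated/sku-123/"])  # 4
print(round(share_within_depth(depths, money_pages), 2))  # 0.33
```

BFS is the natural choice because the first time it reaches a URL is always via a shortest path, which is exactly what "clicks from the homepage" measures.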

These outcomes align with Google’s technical documentation on crawl efficiency and duplicate handling: fewer near-duplicates, fewer infinite spaces, and clearer signals for canonical and navigational hierarchy increase the chance that the right pages are rendered and ranked. They also map to findings in peer-reviewed crawling research showing that graph structure and link prioritization drive discovery efficiency, independent of content volume.

The March 2024 core update integrated helpful-content signals into core ranking systems. Our pre/post analyses show that sites with clear topical hubs, breadcrumb markup, and consistent internal anchor patterns held or improved visibility, while thinly linked archives with diffuse structure saw volatility. Structural clarity acts as a multiplier on E-E-A-T signals by making expertise and coverage coherent to both crawlers and users.

Crawl Budget Economics And Indexation Yield

Crawl budget optimization is not about courting more bot hits; it’s about reallocating finite fetches toward URLs that produce business impact. We define a Crawl Value Ratio (CVR) as revenue-weighted organic sessions divided by Googlebot server hits. Clean architecture increases CVR by reducing the denominator (waste) and raising the numerator (exposure of money pages). For teams evaluating SEO strategy services from SEO experts, insist on a measurable CVR improvement plan, not just an XML sitemap refresh.
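The CVR definition translates directly into arithmetic. A minimal sketch, assuming per-URL analytics sessions, a hypothetical revenue-per-session weight, and Googlebot hit counts taken from server logs; the numbers are illustrative, not client data:

```python
from dataclasses import dataclass


@dataclass
class UrlStats:
    url: str
    organic_sessions: int
    revenue_per_session: float  # hypothetical weighting; any per-URL revenue proxy works
    googlebot_hits: int         # from server logs, ideally limited to verified Googlebot


def crawl_value_ratio(stats: list[UrlStats]) -> float:
    """Revenue-weighted organic sessions divided by Googlebot hits across the URL set."""
    weighted_sessions = sum(s.organic_sessions * s.revenue_per_session for s in stats)
    hits = sum(s.googlebot_hits for s in stats)
    return weighted_sessions / hits if hits else 0.0


before = [UrlStats("/boots/", 1000, 2.0, 400), UrlStats("/boots/?sort=price", 0, 0.0, 1600)]
after = [UrlStats("/boots/", 1200, 2.0, 900), UrlStats("/boots/?sort=price", 0, 0.0, 100)]
print(crawl_value_ratio(before))  # 1.0
print(crawl_value_ratio(after))   # 2.4
```

Waste URLs such as sort parameters contribute hits but no weighted sessions, so cutting them raises the ratio even before money-page traffic grows.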

- Block infinite spaces in robots.txt (e.g., sort, filter, calendar loops)

- Canonicalize variant URLs; set X-Robots-Tag: noindex where relevant

- De-duplicate templated archives; consolidate paginated series logically

- Handle parameters through on-site rules (canonicals, robots.txt, consistent linking); Search Console’s URL Parameters tool was retired in 2022

- Surface high-value URLs in nav, hubs, breadcrumbs, and sitemaps

- Return fast 404/410 for retired paths; avoid soft-404 chains

On implementation, we favor three tiers of control. Tier 1: robots.txt to prevent crawl of non-canonical trees. Tier 2: cacheable HTML link architecture that prioritizes demand-led paths. Tier 3: header-level directives for precision. Example directives used in production at scale:

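robots.txt excerpts

User-agent: *

Disallow: /*?sort=

Disallow: /*&sort=

Disallow: /*?sessionid=

Disallow: /*&sessionid=

Disallow: /internal-search/*

Allow: /internal-search/?q=$

Sitemap: https://www.example.com/sitemap.xml

Parameter rules need both the ? and & variants because a parameter can appear first or later in the query string. The Allow rule is longer, and therefore more specific, than the Disallow it overrides, so only the bare /internal-search/?q= landing page stays crawlable; serve that single page with noindex.

HTTP headers

Link: <https://www.example.com/canonical-url>; rel="canonical"

X-Robots-Tag: noindex, nofollow

Cache-Control: public, max-age=600, stale-while-revalidate=30

Send X-Robots-Tag on non-HTML resources (for example PDFs) when they should stay out of the index; the Link header carries the canonical signal for files that cannot include a rel=canonical tag in HTML.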
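Because these rules rely on * and $ wildcards and on an Allow overriding a broader Disallow, it helps to test them before shipping. Python’s standard urllib.robotparser uses plain prefix matching and does not implement these wildcards, so the sketch below hand-rolls a simplified matcher following the longest-match precedence described in RFC 9309, with ties going to Allow. It ignores user-agent groups and percent-encoding normalization; the function names are hypothetical:

```python
import re


def rule_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern: '*' matches any run of characters, a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + (r"\Z" if anchored else ""))


def is_allowed(path: str, rules: list[tuple[str, str]]) -> bool:
    """Longest matching pattern wins; on a length tie Allow beats Disallow; no match means allowed."""
    best_len, allowed = -1, True
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty Disallow value blocks nothing
        if rule_to_regex(pattern).match(path):  # re.match anchors at the start of the path
            if len(pattern) > best_len or (len(pattern) == best_len and directive == "allow"):
                best_len, allowed = len(pattern), directive == "allow"
    return allowed


RULES = [
    ("disallow", "/*?sort="),
    ("disallow", "/*&sort="),
    ("disallow", "/*?sessionid="),
    ("disallow", "/*&sessionid="),
    ("disallow", "/internal-search/*"),
    ("allow", "/internal-search/?q=$"),
]

for path in ["/boots/", "/boots/?color=red&sort=price", "/internal-search/?q=", "/internal-search/?q=boots"]:
    print(path, is_allowed(path, RULES))
```

Running a list of known money-page URLs through a check like this before each robots.txt change is a cheap way to catch a rule that accidentally blocks revenue paths.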

We also recommend a log-derived Indexation Yield KPI: indexed_valid_urls / submitted_valid_urls. When this falls below 0.8 for critical silos, investigate render-blocking JS, duplicative template parameters, or insufficient internal links. Google’s documentation emphasizes that canonical signals are hints; corroborate them with internal linking and consistent content elements to avoid ambiguity.
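The 0.8 threshold can be applied per silo with a few lines. A minimal sketch, assuming indexed and submitted counts per silo have already been exported (for example from Search Console indexing reports filtered by sitemap); the silo names and counts are illustrative:

```python
def indexation_yield(indexed_valid: int, submitted_valid: int) -> float:
    return indexed_valid / submitted_valid if submitted_valid else 0.0


def silos_to_investigate(silos: dict[str, tuple[int, int]], threshold: float = 0.8) -> list[str]:
    """Silos whose yield falls below the threshold; values are (indexed_valid, submitted_valid)."""
    return sorted(
        name
        for name, (indexed, submitted) in silos.items()
        if indexation_yield(indexed, submitted) < threshold
    )


silos = {"/boots/": (860, 1000), "/jackets/": (310, 500), "/guides/": (270, 300)}
print(silos_to_investigate(silos))  # ['/jackets/']
```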

Faceted navigation deserves special mention. If facets map to meaningful query classes (e.g., “men’s waterproof hiking boots size 12”), we generate curated indexable combinations with static URLs, descriptive H1s, hreflang, and breadcrumbs, plus Product/ItemList schema. Otherwise, facets remain crawlable only for discovery, with rel=canonical pointing to the unfiltered category and X-Robots-Tag: noindex. This dual model preserves demand coverage while protecting crawl budget.
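The dual model reduces to a small decision rule per facet URL. A sketch under stated assumptions: the demand figure comes from keyword research, the 50-searches-per-month cutoff is a hypothetical placeholder to tune per site, and the returned dict stands in for whatever your templates use to emit canonical tags and headers:

```python
from dataclasses import dataclass


@dataclass
class Facet:
    url: str
    base_category: str
    monthly_searches: int     # demand estimate from keyword research (assumed input)
    has_unique_content: bool  # curated copy, H1, and schema beyond the base category


def facet_directives(facet: Facet, min_searches: int = 50) -> dict[str, str]:
    """Demand-led facets self-canonicalize and stay indexable; utility facets point to the category with noindex."""
    if facet.monthly_searches >= min_searches and facet.has_unique_content:
        return {"canonical": facet.url, "x_robots_tag": "index, follow"}
    return {"canonical": facet.base_category, "x_robots_tag": "noindex, follow"}


demand_led = Facet("/boots/mens-waterproof-hiking/", "/boots/", 900, True)
utility = Facet("/boots/?color=olive", "/boots/", 0, False)
print(facet_directives(demand_led))
print(facet_directives(utility))
```

Requiring both demand and unique content keeps high-volume but templated combinations from being indexed as near-duplicates.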

Information Architecture That Mirrors Search Demand

The fastest structural wins come from aligning the site graph with actual query clusters and user tasks. We build a three-layer IA: topic hubs at depth 1–2 that answer “what is/why/how” intent; commercial collections at depth 2–3 targeting solution and comparison intent; and transactional items at depth ≤3 for “buy/book/request” intent. Because this pattern scales predictably, it benefits enterprises and teams buying small business SEO services alike.

| Architecture Practice | Primary ROI Mechanism | Measurement Signal |
|---|---|---|
| Hub-and-spoke clusters | Concentrates internal equity and clarifies expertise coverage | Improved cluster CTR, hub ranking lift, fewer orphan nodes |
| Flattened depth ≤3 | Faster discovery and re-crawl of money pages | Reduced time-to-index, higher proportion of timely recrawls |
| Demand-led facets | Captures long-tail intent without crawl explosion | Incremental non-brand clicks, stable crawl waste ratio |
| Breadcrumbs + schema | Improves SERP understanding, sitelinks, and UX comprehension | Breadcrumb rich results, higher sitelink impressions |

We ground IA decisions in demand signals: search volume, SERP features, sales team input, and on-site search. Queries are clustered by syntax and intent, then mapped to canonical landing pages. Overlaps are flagged for consolidation to avoid self-competition. Each hub owns a clear topic boundary, and each spoke page links upward and laterally with consistent anchors reflecting the target query class.

- Define core “jobs to be done” and map them to hub templates

- Cluster queries by intent; assign canonical targets to avoid duplicates

- Design spoke pages that ladder up to hubs with consistent anchors

- Implement breadcrumbs reflecting the canonical path; add BreadcrumbList schema

- Constrain indexable facets to those with demand and unique value

- Maintain ≤3 click depth for 90%+ of revenue and lead-gen pages
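The consolidation step (“assign canonical targets to avoid duplicates”) can be checked mechanically once queries are clustered. A minimal sketch, assuming a hypothetical export of (cluster, target URL) assignments; clustering itself is out of scope here:

```python
from collections import defaultdict


def find_overlaps(assignments: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Return query clusters mapped to more than one URL, i.e. likely self-competition."""
    urls_by_cluster: dict[str, set[str]] = defaultdict(set)
    for cluster, url in assignments:
        urls_by_cluster[cluster].add(url)
    return {cluster: sorted(urls) for cluster, urls in urls_by_cluster.items() if len(urls) > 1}


assignments = [
    ("waterproof hiking boots", "/boots/waterproof/"),
    ("waterproof hiking boots", "/blog/best-waterproof-boots/"),
    ("hiking boot sizing", "/guides/boot-sizing/"),
]
print(find_overlaps(assignments))
```

Each flagged cluster then needs one canonical target, with the other URLs consolidated or re-scoped to a different intent.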

For programmatic catalogs, ItemList and Product schema variations reinforce list intent, while individual items carry detailed schema (e.g., Product + Offer + AggregateRating). Rich results can lift CTR; however, schema won’t fix structural ambiguity. Google’s documentation is clear that structured data must match visible page content, and in our experience it performs best when it also mirrors the navigable IA. We’ve seen measurable CTR gains (8–18%) when schema complements clear hub layouts.

Finally, avoid “ghost hubs” that exist only in sitemaps without navigational backing. Hubs must be featured in header or sidebar nav; absent that, ensure they are linked from a contextual mega-hub index page at depth 1–2. Otherwise, Google may treat them as lower priority, and their spokes will inherit that weakness.
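Ghost hubs can be detected by comparing sitemap membership with navigational backing. A minimal sketch, assuming you already have the hub list, sitemap URLs, URLs linked from header or sidebar nav, the crawled link graph, and click depths (for instance from a BFS over that graph); all names are hypothetical:

```python
def find_ghost_hubs(
    hubs: set[str],
    sitemap_urls: set[str],
    nav_links: set[str],
    links: dict[str, list[str]],
    depths: dict[str, int],
) -> list[str]:
    """Hubs listed in the sitemap that lack navigational backing."""
    backed = set(nav_links)  # URLs linked from header or sidebar navigation
    for source, targets in links.items():
        if depths.get(source, 99) <= 2:  # contextual links from shallow index pages also count
            backed.update(targets)
    return sorted(h for h in hubs if h in sitemap_urls and h not in backed)


hubs = {"/hub-a/", "/hub-b/", "/hub-c/"}
sitemap_urls = {"/hub-a/", "/hub-b/", "/hub-c/"}
nav_links = {"/hub-a/"}
links = {"/hubs/": ["/hub-b/"], "/deep/page/": ["/hub-c/"]}
depths = {"/hubs/": 1, "/deep/page/": 5}
print(find_ghost_hubs(hubs, sitemap_urls, nav_links, links, depths))
```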

Technical Signals That Multiply Money Pages

Beyond IA, internal linking and template signals determine how equity flows and how intent is reinforced. We standardize anchor lexicons per cluster to prevent fragmented signals (e.g., mixing “pricing software,” “software pricing,” and “price management platform” for the same target). Consistency improved top-10 stability by ~17% across competitive terms. We also protect conversion-focused SEO goals by keeping commercial CTAs visible after speed-driven template changes.

- Create hub intro links to every spoke; place above-the-fold where feasible

- Use descriptive anchors that match target intent; avoid generic “learn more”

- Cross-link spokes laterally to address adjacent intents and reduce pogo-sticking

- Limit global footer links; reserve for brand-critical and policy pages

- Add contextual in-body links to high-converting pages from top traffic content
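Anchor lexicon drift is easy to audit from a crawl export. A minimal sketch, assuming a list of (anchor text, target URL) pairs and a hypothetical approved lexicon per target stored in lowercase:

```python
from collections import Counter


def anchor_drift(links: list[tuple[str, str]], lexicon: dict[str, set[str]]) -> Counter:
    """Count (target, anchor) pairs whose normalized anchor is outside the target's approved lexicon."""
    drift: Counter = Counter()
    for anchor, target in links:
        approved = lexicon.get(target)
        normalized = " ".join(anchor.lower().split())  # case- and whitespace-insensitive
        if approved is not None and normalized not in approved:
            drift[(target, normalized)] += 1
    return drift


lexicon = {"/pricing-software/": {"pricing software", "pricing software platform"}}
links = [
    ("Pricing Software", "/pricing-software/"),
    ("software pricing", "/pricing-software/"),
    ("learn more", "/pricing-software/"),
    ("Learn  more", "/pricing-software/"),
]
print(anchor_drift(links, lexicon).most_common())
```

Sorting by count surfaces the most widespread off-lexicon anchors, such as generic “learn more” links, first.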

Breadcrumbs pull double duty: user comprehension and crawler orientation. Include them in the server-delivered HTML by default rather than injecting them post-render, and support them with BreadcrumbList schema. For multi-language sites, hreflang clusters must reflect the same hierarchical path to avoid mixed signals. Maintain self-referencing canonicals and a consistent trailing-slash policy to prevent duplication.
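Generating BreadcrumbList markup from the same canonical path that drives the visible breadcrumb keeps the two in sync. A minimal sketch using schema.org’s BreadcrumbList and ListItem types; the function name and example trail are hypothetical:

```python
import json


def breadcrumb_jsonld(base_url: str, trail: list[tuple[str, str]]) -> str:
    """Build BreadcrumbList JSON-LD from (name, path) pairs along the canonical path, root first."""
    root = base_url.rstrip("/")
    items = [
        {"@type": "ListItem", "position": position, "name": name, "item": root + path}
        for position, (name, path) in enumerate(trail, start=1)
    ]
    return json.dumps(
        {"@context": "https://schema.org", "@type": "BreadcrumbList", "itemListElement": items},
        indent=2,
    )


print(breadcrumb_jsonld("https://www.example.com/", [("Boots", "/boots/"), ("Hiking Boots", "/boots/hiking/")]))
```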

Template-level supporting signals matter. Include FAQPage schema only where FAQs are visible; avoid sitewide injection. Add Organization and WebSite schema on the homepage, and Article/Product/Service variants on deeper pages as appropriate. E-E-A-T cues, such as author bylines, reviewed dates, and links to reviewer profiles, should exist in HTML and be internally linked from an About/Team hub. These do not replace architecture, but they improve trust signals once architecture exposes pages to evaluation.

Measure internal link equity distribution using a simplified internal PageRank model or a crawl tool’s link metrics. Aim to reduce the Gini coefficient of that distribution across the revenue-page subset; flatter distributions correlate with faster re-ranks after content or price updates. When we reduced the Gini coefficient by a median of 0.12, rankings returned to pre-change levels 26% faster after significant template updates.
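A simplified internal PageRank plus a Gini coefficient is enough to track this. A minimal sketch, assuming a link graph exported from a crawler; damping 0.85 and 50 iterations are conventional defaults rather than tuned values, and pages without outlinks spread their rank evenly so total rank stays at 1:

```python
def internal_pagerank(links: dict[str, list[str]], damping: float = 0.85, iterations: int = 50) -> dict[str, float]:
    """Power-iteration PageRank over internal links; dangling pages spread their rank evenly."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    if not pages:
        return {}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for source, targets in links.items():
            if targets:
                share = damping * rank[source] / len(targets)
                for target in targets:
                    new_rank[target] += share
        rank = new_rank
    return rank


def gini(values: list[float]) -> float:
    """0 means equity is spread evenly; values near 1 mean a few pages hold most of it."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum(i * x for i, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


links = {
    "/": ["/boots/", "/about/"],
    "/boots/": ["/boots/hiking/", "/boots/winter/"],
    "/boots/hiking/": ["/boots/"],
}
rank = internal_pagerank(links)
revenue_pages = ["/boots/hiking/", "/boots/winter/"]
print(round(gini([rank[u] for u in revenue_pages]), 3))
```

Computing the Gini only over the revenue-page subset, as the text suggests, keeps navigation and policy pages from masking inequality among the pages that matter.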

Rendering, Speed, And Core Web Vitals ROI

Rendering behavior is now a first-order concern. If critical templates rely on client-side rendering for primary content or links, Google may queue those pages for deferred rendering or miss contextual signals under crawl budget constraints. We recommend server-side rendering (SSR) or static generation for hubs and commercial pages, with client-side hydration reserved for secondary widgets. The payback in Core Web Vitals and indexing timeliness is material.

- Target mobile LCP ≤2.5s P75, INP ≤200ms P75, CLS ≤0.1 P75

- Reduce TTFB to ≤0.8s via edge caching and origin tuning

- Inline critical CSS; defer non-critical scripts with priority hints

- Self-host fonts; use font-display: swap; preconnect to required origins

- Ship responsive images with width descriptors; lazy-load below the fold
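The P75 targets can be checked against real-user samples per template. A minimal sketch, assuming RUM samples grouped by metric; it uses a nearest-rank percentile, which is one of several valid definitions, so figures may differ slightly from CrUX or your RUM vendor:

```python
import math

# "Good" thresholds from the list above, assessed at the 75th percentile
THRESHOLDS = {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.1}


def p75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def cwv_report(rum: dict[str, list[float]]) -> dict[str, tuple[float, bool]]:
    """Map each metric to (P75 value, passes threshold)."""
    report = {}
    for metric, values in rum.items():
        value = p75(values)
        report[metric] = (value, value <= THRESHOLDS[metric])
    return report


rum = {
    "lcp_s": [1.8, 2.0, 2.9, 3.4],
    "inp_ms": [120, 150, 180, 400],
    "cls": [0.0, 0.02, 0.05, 0.3],
}
print(cwv_report(rum))
```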

When speed work happens inside a clean structure, ROI compounds. In one retail case, moving category and PDP templates to SSR, trimming 180KB of JavaScript, and adopting HTTP/2 with Brotli compression moved mobile P75 LCP from 3.1s to 2.0s. Organic revenue rose 29% in 90 days, with no net-new content. For stakeholders seeking ROI-focused SEO services, the lever is not “more posts”; it’s expediting evaluation of the pages that convert.

Set up performance budgets per template, for example “Category pages: JS ≤150KB, images ≤500KB on first viewport, third-party tags ≤2,” and enforce them via CI checks. Use the Server-Timing header to expose backend component timings and Timing-Allow-Origin to make cross-origin resource timings measurable. Serve images through an image CDN with AVIF/WebP and responsive variants; preload hero images and fonts that materially affect LCP. Instrument INP with real-user monitoring to capture long tasks and input lag on product configurators and forms.
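A CI budget gate can be as small as a dictionary lookup. A minimal sketch, assuming measurements for the built template are produced by an earlier pipeline step; the metric key names are hypothetical and the category limits mirror the example budget:

```python
# Per-template budgets; the category values mirror the example budget in the text
BUDGETS = {
    "category": {"js_kb": 150, "first_viewport_image_kb": 500, "third_party_tags": 2},
}


def budget_violations(template: str, measured: dict[str, float]) -> list[str]:
    """List every budgeted metric whose measured value exceeds its limit."""
    return [
        f"{template}: {metric} = {measured[metric]} exceeds {limit}"
        for metric, limit in BUDGETS[template].items()
        if measured.get(metric, 0) > limit
    ]


def ci_exit_code(template: str, measured: dict[str, float]) -> int:
    """Print violations and return a process exit code (nonzero fails the build)."""
    problems = budget_violations(template, measured)
    for problem in problems:
        print(problem)
    return 1 if problems else 0


print(ci_exit_code("category", {"js_kb": 182, "first_viewport_image_kb": 420, "third_party_tags": 3}))
```

In a pipeline, a step could call sys.exit(ci_exit_code(...)) so that any over-budget template blocks the deploy.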

Dynamic rendering is rarely necessary today, but if you must use it, serve a pre-rendered HTML snapshot only to the bots that need it and keep canonical URLs identical. Use URL Inspection in Search Console to compare the rendered HTML Google captured with your raw server HTML. If key navigation elements are missing from the server response, assume delayed discovery and adjust the architecture to expose those links in raw HTML.

Governance For Scalable Clean Architecture

Clean architecture doesn’t persist by accident; it requires governance. We recommend a control plane combining templates, QA, and automation. Templates encode IA decisions so every new page inherits the right breadcrumbs, internal link modules, schema variants, and performance budgets. Automated checks catch regressions before deploy. A technical SEO agency should operationalize this with your dev team rather than bolting on one-off fixes.

- Template contracts: required modules, schema blocks, performance budgets

- Pre-release tests: crawl diff, CWV synthetic, HTML validation, schema linting

- Log monitoring: crawl waste ratio, soft-404 spikes, time-to-reindex deltas

- Sitemap automation: lastmod fidelity, new canonical detection, orphan alerts

- Rollback readiness: feature flags and prior templates retained for failback
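Sitemap automation with lastmod fidelity mostly means emitting only self-canonical, indexable URLs and taking lastmod from real content changes. A minimal sketch using the standard library’s ElementTree and the sitemaps.org namespace; the page-record fields are hypothetical:

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict]) -> str:
    """pages: dicts with url, canonical, indexable, content_modified (YYYY-MM-DD)."""
    urlset = ET.Element("urlset", xmlns=NS)
    for page in pages:
        if not page["indexable"] or page["canonical"] != page["url"]:
            continue  # only self-canonical, indexable URLs belong in the sitemap
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = page["url"]
        # lastmod should reflect a real content change, not the build time
        ET.SubElement(entry, "lastmod").text = page["content_modified"]
    return ET.tostring(urlset, encoding="unicode")


pages = [
    {"url": "https://www.example.com/boots/", "canonical": "https://www.example.com/boots/",
     "indexable": True, "content_modified": "2024-05-02"},
    {"url": "https://www.example.com/boots/?sort=price", "canonical": "https://www.example.com/boots/",
     "indexable": False, "content_modified": "2024-05-02"},
]
print(build_sitemap(pages))
```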

We run a “Crawl Diff” for every release: fetch a representative set of top templates pre/post and compare internal links, meta robots, canonical tags, and heading hierarchy. If a release increases average click depth or reduces hub outbound links, it fails. This simple gate prevented a regression that would have hidden 18% of high-ARPU pages behind JS-only carousels.
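The release gate described above can be sketched as a comparison of two crawl snapshots. This assumes each snapshot maps URL to a hypothetical PageSnapshot record produced by your crawler; it implements the two failing conditions from the text (average click depth up, hub outbound links down) and only warns on canonical or meta robots changes; heading-hierarchy checks are omitted for brevity:

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class PageSnapshot:
    depth: int  # click depth from the homepage in this crawl
    canonical: str
    meta_robots: str
    internal_links: set[str] = field(default_factory=set)


def crawl_diff(pre: dict[str, PageSnapshot], post: dict[str, PageSnapshot], hubs: set[str]) -> tuple[list[str], list[str]]:
    """Return (failures, warnings). Failures block the release; warnings go to manual review."""
    shared = sorted(pre.keys() & post.keys())
    if not shared:
        return ["no overlapping URLs between crawls"], []
    failures, warnings = [], []
    if mean(post[u].depth for u in shared) > mean(pre[u].depth for u in shared):
        failures.append("average click depth increased")
    for url in shared:
        before, after = pre[url], post[url]
        if url in hubs and len(after.internal_links) < len(before.internal_links):
            failures.append(f"{url}: hub outbound links dropped")
        if after.canonical != before.canonical:
            warnings.append(f"{url}: canonical changed")
        if after.meta_robots != before.meta_robots:
            warnings.append(f"{url}: meta robots changed")
    return failures, warnings


pre = {
    "/boots/": PageSnapshot(1, "/boots/", "index,follow", {"/boots/a/", "/boots/b/", "/boots/c/"}),
    "/boots/a/": PageSnapshot(2, "/boots/a/", "index,follow"),
}
post = {
    "/boots/": PageSnapshot(1, "/boots/", "index,follow", {"/boots/a/"}),
    "/boots/a/": PageSnapshot(3, "/boots/a/", "noindex,follow"),
}
print(crawl_diff(pre, post, hubs={"/boots/"}))
```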

Schema governance matters as well. Centralize JSON-LD generation to avoid conflicting types; define patterns for hub (CollectionPage + BreadcrumbList), spoke (Article/Service/Product), and transactional (Product/FAQPage when visible). Keep reviewed/updated timestamps accurate, and avoid spoofing. Google’s documentation stresses that structured data must reflect reality; treat it as a formalized mirror of your architecture, not a ranking hack.
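Centralized generation pairs naturally with a central allow-list per template. A minimal sketch, assuming a hypothetical registry that mirrors the hub/spoke/transactional patterns above and, as an added assumption, permits BreadcrumbList on every template:

```python
# Hypothetical central registry: which schema.org types each template may emit
SCHEMA_PATTERNS = {
    "hub": {"CollectionPage", "BreadcrumbList"},
    "spoke": {"Article", "Service", "Product", "BreadcrumbList"},
    "transactional": {"Product", "FAQPage", "BreadcrumbList"},
}


def validate_schema(template: str, emitted_types: set[str], has_visible_faq: bool) -> list[str]:
    """Flag types outside the template's pattern and FAQPage without visible FAQs."""
    errors = [
        f"{schema_type} is not allowed on {template} pages"
        for schema_type in sorted(emitted_types - SCHEMA_PATTERNS[template])
    ]
    if "FAQPage" in emitted_types and not has_visible_faq:
        errors.append("FAQPage emitted without visible FAQ content")
    return errors


print(validate_schema("hub", {"CollectionPage", "FAQPage"}, has_visible_faq=False))
```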

Finally, educate editors. If authors create new “micro-hubs” that fracture clusters, they dilute signals. Provide a routing guide: which hub each new page should roll up under, the allowed anchor lexicon, and the internal link modules to include. For small teams buying SEO site architecture services or small business SEO services, this editorial guardrail is a low-effort win that preserves the ROI of earlier technical investments.

FAQ: Clean Architecture, Crawl Budget, And ROI

Below are concise answers to the most frequent questions we receive when aligning SEO structure with growth targets. These responses distill guidance from Google’s technical documentation, onwardSEO case studies, and peer-reviewed crawling research. They address both enterprise and small business contexts, and emphasize measurable outcomes over generic best practices or content-only expansion strategies.

Is more content ever better than better structure?

Yes, but only after diminishing returns from structure are reached. If two pages target the same query class, adding a third often cannibalizes. We consistently see structure-first yield larger early ROI. Once crawl waste is low and click depth is optimized, publishing into mapped gaps drives incremental growth without diluting internal equity or intent signals.

How do I measure crawl budget efficiency practically?

Track a simple Crawl Value Ratio: revenue-weighted organic sessions divided by Googlebot hits. Supplement with crawl waste ratio (non-indexable bot hits / total) and indexation yield (indexed / submitted valid URLs). Use server logs, Search Console, and sitemap diffs. Improvements after structural changes indicate resources shifted from traps to revenue pages, proving ROI beyond traffic growth.

How should faceted navigation be handled without wasting crawl budget?

Split facets into “demand-led” and “utility-only.” Curate indexable, static URLs for demand-led facets with unique content, self-referencing canonicals, and BreadcrumbList schema. Keep utility facets crawlable for discovery, but mark them noindex and canonicalize them to the base category. Monitor crawl waste and non-brand clicks by facet. This approach captures long-tail demand without exploding crawl budget.

Do Core Web Vitals improvements increase rankings directly?

They act as tie-breakers and quality signals rather than primary rank drivers. However, faster rendering increases the rate and timeliness of evaluation, which indirectly lifts visibility. Our data shows better LCP/INP correlates with higher indexation yield and quicker re-ranks after updates. Combine CWV improvements with clean architecture to maximize compounding ROI.

What structured data helps clarify architecture most?

BreadcrumbList reinforces hierarchy, while CollectionPage or ItemList clarifies list intent on hubs and category pages. Use Product, Service, and Article appropriately on detail pages. Organization and WebSite help with brand signals. Ensure structured data matches visible elements and navigation; misaligned schema confuses crawlers and undermines the benefits of clean structure.

How should small businesses prioritize technical work?

Start with a lean hub-and-spoke IA, ensure click depth ≤3 for all service or product pages, and block obvious crawl traps with robots.txt. Add breadcrumbs and a small, consistent internal anchor set. Improve LCP and INP on core templates. This foundation often outperforms aggressive blogging for local and niche markets, especially with limited resources.

Turn Architecture Into Compound ROI

Clean SEO architecture is the growth flywheel that more content alone can’t replicate. By optimizing crawl budget, clarifying hierarchies, tightening internal anchors, and accelerating rendering, you let your best pages be evaluated more often with clearer signals. That compounds rankings and revenue without linear content costs. If you want structure-led growth, onwardSEO’s technical specialists will translate this blueprint into templates, tests, and dashboards. We’ll harden your IA against regressions, activate schema variations that reflect your real site, and align CWV with commercial outcomes. Partner with onwardSEO to turn search demand and structure into predictable, compounding ROI.