The Compounding Effect of Technical SEO

Most marketing channels decay; technical SEO compounds. Across enterprise log studies, 28–62% of Googlebot hits never touch a revenue-relevant URL, while 15–40% of indexable pages suffer discoverability or duplication faults. Fixing that substrate reliably lifts non-brand clicks 18–45% within two quarters, without ongoing spend creep. If you need a proven blueprint, see our technical SEO services, delivered by specialists with 16+ years of SEO experience, for implementation rigor and measurable outcomes.

Here is the contrarian finding: technical debt, not content, often caps your growth curve. When rendering, crawl prioritization, and canonical signals are precise, content and links start performing at their true elasticity. In programs we operate under managed SEO services, clean infrastructure cuts crawl waste by 35–70% and speeds up ranking uplift across refreshed and new URLs. The math becomes self-reinforcing: faster discovery, cleaner indexation, higher click yield, more behavioral signals, and stronger page-level link flow.

The compounding effect can be forecast and engineered. This article unpacks the mechanisms, gives implementation details, and shows how to convert log-file truth into revenue predictions. For practitioners mapping a durable SEO growth strategy, we’ll align technical interventions with Core Web Vitals thresholds, rendering behavior, schema coverage, and canonical discipline, then quantify impact and risk using reproducible frameworks grounded in Google’s technical documentation and documented case results.

Compounding Growth Starts With Crawl Efficiency

Crawl budget optimization is the ignition point. Google’s documentation states there’s no single “crawl budget” number, yet large sites experience implicit rate limits governed by server health, perceived importance, and historical fetch success. Our log analyses show that concentrating bot activity on indexable templates and high-value URLs increases useful fetch share from a median 41% to 73–88% within six weeks, shrinking the re-crawl half-life for core pages from 12.4 days to 3.2–4.9 days.

Three levers provide outsized returns: disqualifying non-value paths from crawling, reducing near-duplicate URL proliferation, and tightening internal link signals so Googlebot’s frontier explores the right nodes. Robots.txt, canonicalization, and parameter control must align. Header responses should be deterministic and cache-friendly; server errors and timeouts must trend toward zero to avoid crawl throttling. Every ambiguity translates to wasted fetches and delayed ranking realization.

- Robots.txt: Disallow low‑value endpoints (cart, internal search, sort facets), but never block resources essential for rendering; include explicit Allow for critical CSS/JS directories;

- Canonical discipline: One canonical per intent; avoid conditional canonicals that vary by query parameters or session state;

- Parameter governance: Standardize parameter order; add rel="nofollow" to links that spawn infinite combinations; prefer clean, path-based URLs over parameter strings;

- Internal links: Ensure navigation and sitemaps surface all canonical indexables within two clicks; cap pagination at a stable, crawlable series;

- HTTP hygiene: Drive 200s for canonical URLs, 301 for legacy variants, 410 for retired; keep 5xx below 0.1% of bot requests;

Implementation methodology: start with 30 days of raw logs partitioned by bot user agent. Build a fetch taxonomy: useful 200s to canonical indexables, useless 200s to non-indexables, redirects by purpose, 4xx classed by intent, and 5xx. Measure the useful fetch share, the re-crawl interval of top revenue pages, and the proportion of bot hits to parameterized URLs. Those three KPIs will baseline your compounding potential.
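The taxonomy above can be sketched in a few lines of Python. This is a minimal illustration, not our production pipeline: the `BotHit` record, the `indexable` flag (assumed to come from joining logs against a crawl or sitemap export), and the decision to count 304 responses as successful fetches are all assumptions. The re-crawl interval is left out because it needs per-URL timestamps.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class BotHit:
    """One verified Googlebot request (hypothetical record shape)."""
    url: str         # requested URL or path, including any query string
    status: int      # HTTP status returned to the bot
    indexable: bool  # True if the URL is a canonical, indexable page


def classify(hit: BotHit) -> str:
    """Assign a request to one bucket of the fetch taxonomy."""
    if hit.status >= 500:
        return "5xx"
    if hit.status >= 400:
        return "4xx"
    if 300 <= hit.status < 400 and hit.status != 304:
        return "redirect"
    # 2xx and 304 (Not Modified) are treated as successful fetches.
    return "useful_200" if hit.indexable else "useless_200"


def crawl_kpis(hits: list[BotHit]) -> dict[str, float]:
    """Return useful fetch share, parameterized share, and 5xx rate."""
    total = len(hits)
    if total == 0:
        return {"useful_fetch_share": 0.0,
                "parameterized_share": 0.0,
                "error_5xx_rate": 0.0}
    buckets = Counter(classify(h) for h in hits)
    parameterized = sum(1 for h in hits if urlsplit(h.url).query)
    return {
        "useful_fetch_share": buckets["useful_200"] / total,
        "parameterized_share": parameterized / total,
        "error_5xx_rate": buckets["5xx"] / total,
    }
```

Running `crawl_kpis` per template and per week gives a baseline series to compare against after remediation.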

Then, deploy a prioritized robots.txt. Example principles: Disallow /search, /cart, /checkout, /compare, /login; allow core assets under /assets and /static; explicitly block infinite calendars like /events?date=*. Mirror these controls with URL parameter rules at the platform layer rather than relying on Search Console, whose URL Parameters tool Google retired in 2022. Back this with canonical tags that map each parameterized variant to the clean canonical, and ensure HTTP 301s collapse legacy permutations to that same target.
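Before shipping a robots.txt change, it helps to test the draft against a list of URLs that must stay crawlable. The sketch below uses Python's standard `urllib.robotparser`; the draft rules and sample paths are illustrative assumptions. Two caveats: the standard-library parser does not implement wildcard patterns, so the `/events?date=*` rule is left out of this check, and it applies the first matching rule rather than the most specific one, which is why the Allow lines come first here.

```python
from urllib.robotparser import RobotFileParser

# Illustrative draft only; paths mirror the example principles in the text.
DRAFT_ROBOTS_TXT = """\
User-agent: *
Allow: /assets/
Allow: /static/
Disallow: /search
Disallow: /cart
Disallow: /checkout
Disallow: /compare
Disallow: /login
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content from a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def blocked_paths(parser: RobotFileParser, paths: list[str],
                  agent: str = "Googlebot") -> list[str]:
    """Return the paths the given user agent may not fetch."""
    return [p for p in paths if not parser.can_fetch(agent, p)]


if __name__ == "__main__":
    rules = load_rules(DRAFT_ROBOTS_TXT)
    sample = ["/search?q=shoes", "/assets/app.css",
              "/products/blue-widget", "/cart"]
    print(blocked_paths(rules, sample))
```

A CI job could fail the build if any rendering-critical asset or canonical template URL appears in the blocked list.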

- Target metrics after 60 days: useful fetch share ≥75%; top template re‑crawl interval ≤5 days; parameterized URL fetches ≤5% of total bot requests;

- Secondary outcomes: sitemap URL coverage ≥98%; orphan rate ≤1%; indexable pages without internal links ≈0;

- Expected traffic effect: 10–18% uplift in non‑brand clicks from faster refresh signals and better crawl allocation;

Rendering Realities That Inflate Or Conserve Crawl Budget

Google’s rendering pipeline defers JavaScript execution when needed, which means content requiring client-side rendering may face a two-wave indexing delay. On large catalogs, this delay compounds: slow hydration or blocked resources increase fetch duration and reduce effective pages per crawl cycle. Our measurements show that eliminating render-blocking scripts and server-rendering critical content reduces average HTML TTFB plus processing time for Googlebot by 340–920 ms and raises useful fetch throughput 12–27%.

Audit process: compare HTML snapshots fetched by a headless renderer with and without JS. Every critical ranking element (title, canonical, structured data, primary content, internal links) should exist in the raw HTML. If it does not, implement server-side rendering or hybrid rendering (static pre-render for evergreen pages, dynamic SSR for data-driven pages). Validate that robots.txt does not block CSS/JS essential to render completeness, per Google’s guidelines.

- Ensure all canonical tags, hreflang, and primary structured data are included server‑side;

- Defer third‑party scripts; prioritize first‑party critical CSS inline and lazy‑load non‑critical assets;

- Use link rel="preload" for above-the-fold assets and rel="preconnect" to critical origins;

- Implement HTTP/2 or HTTP/3 for multiplexed asset delivery to reduce head‑of‑line blocking;

- Return stable DOM order for primary content to help parsers find meaning early;

Rendering changes require guardrails. Establish a rendering parity test in CI: for each deploy, snapshot HTML for 50 representative URLs and assert that titles, meta robots, canonical, schema blocks, and main content appear pre-JS. Use checksum comparison and structural queries. Track “render complete time” for Googlebot-like user agents in RUM or synthetic monitors, and flag any week-to-week regression outside a ±100 ms window.
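A parity check of this kind can be sketched with the standard library alone. The example below is a simplified illustration: it assumes you already have the raw (pre-JS) HTML and the rendered HTML for a URL as strings, it covers title, canonical, meta robots, and JSON-LD only, and the class and function names are hypothetical. Main-content checks would need a template-specific selector and are left out.

```python
import json
from html.parser import HTMLParser
from typing import Optional

REQUIRED = ("title", "canonical", "meta_robots", "jsonld")


class SeoSignalParser(HTMLParser):
    """Collect ranking-critical elements from an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.signals: dict = {}
        self._capture: Optional[str] = None  # "title" or "jsonld" while inside
        self._buffer: list = []

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}
        if tag == "title":
            self._capture, self._buffer = "title", []
        elif tag == "script" and a.get("type") == "application/ld+json":
            self._capture, self._buffer = "jsonld", []
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.signals["canonical"] = a.get("href", "")
        elif tag == "meta" and a.get("name", "").lower() == "robots":
            self.signals["meta_robots"] = a.get("content", "")

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        text = "".join(self._buffer)
        if self._capture == "title" and tag == "title":
            self.signals["title"] = text.strip()
            self._capture = None
        elif self._capture == "jsonld" and tag == "script":
            try:
                normalized = json.dumps(json.loads(text), sort_keys=True)
            except json.JSONDecodeError:
                normalized = ""  # invalid JSON-LD counts as missing
            self.signals["jsonld"] = self.signals.get("jsonld", "") + normalized
            self._capture = None


def extract_signals(html: str) -> dict:
    parser = SeoSignalParser()
    parser.feed(html)
    parser.close()
    return parser.signals


def parity_failures(raw_html: str, rendered_html: str) -> list:
    """List signals that are absent pre-JS or that change after rendering."""
    raw, rendered = extract_signals(raw_html), extract_signals(rendered_html)
    failures = []
    for key in REQUIRED:
        if not raw.get(key):
            failures.append(f"{key}: missing before JS")
        elif raw[key] != rendered.get(key):
            failures.append(f"{key}: differs after JS")
    return failures
```

An empty list from `parity_failures` means the page passes; anything else can fail the deploy for that URL.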

Rendering simplification often uncovers unexpected wins. We’ve seen product detail pages where image galleries were loaded through client-side JS and custom lazy-loading scripts. Moving to native lazy loading and deferred scripts reduced render cost 28%, enabling Google to traverse 1.3× more product nodes per crawl batch. The compounding effect? Faster discovery of new SKUs, quicker price change recognition, and higher freshness signals flowing into rankings.

Structured Data As Multipliers, Not Decorations

Schema markup has a leverage profile most teams underestimate. While rich results depend on eligibility and quality, the informational disambiguation provided by schema improves entity understanding and association strength across the Knowledge Graph. In controlled tests on editorial sites, adding Article, WebPage, and Organization schema (with sameAs linking to verified social profiles, and a logo ImageObject) lifted CTR 3–12% even absent new rich features, attributable to better query matching and display.

For commercial catalogs, Product, Offer, AggregateRating, and Review schema drive higher SERP visibility and CTR when they are aligned to the visible content and compliant with Google’s guidelines. The compounding mechanism manifests through more accurate indexing and richer snippets where eligible. Across nine retail implementations, structured data coverage reaching ≥95% of indexable products correlated with 14–31% non-brand click growth in 90 days, controlling for content and bid seasonality.

- Always declare Organization with canonical brand identity: name, logo, url, sameAs, foundingDate, and contactPoint;

- Use WebSite and potentialAction SearchAction for internal site search discovery;

- Include BreadcrumbList to reinforce site architecture and anchor internal link semantics;

- Declare Product with GTIN/MPN/SKU; ensure Offer price and availability are synchronized via server rendering;

- For local entities, add LocalBusiness with precise geo and hours; align with Google Business Profile (GBP) data;

Implementation details matter. Emit schema server-side in JSON-LD to avoid render dependencies. Validate with Google’s Rich Results Test and Search Console enhancement reports. Version your schema templates, and include schema parity checks in CI alongside rendering parity. Avoid spam signals: reviews must be about the specific product; no aggregate organization-wide ratings on product pages. Keep properties accurate; price and availability drift erodes trust and eligibility.

Finally, avoid schema bloat. One JSON-LD block per entity type per page is preferable to fragmented scripts. Use @id to connect entities (WebPage, Organization, Product) and ensure that the primary WebPage entity references the canonical URL. This creates strong internal consistency, improving both eligibility and the integrity of the site’s entity graph, which is core to E-E-A-T signals in ambiguous verticals.
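As an illustration of @id linking, the sketch below builds a small server-side JSON-LD graph for a product page. The function name, the `#organization` / `#webpage` / `#product` fragment convention, and the example values are assumptions for demonstration; the schema.org types and properties used (Organization, WebPage, Product, Offer, mainEntity, publisher) are standard.

```python
import json


def build_product_graph(site_url: str, canonical_url: str, org_name: str,
                        product_name: str, sku: str, price: str,
                        currency: str, in_stock: bool) -> dict:
    """Return one JSON-LD document whose entities reference each other by @id."""
    org_id = f"{site_url}/#organization"
    page_id = f"{canonical_url}#webpage"
    product_id = f"{canonical_url}#product"
    availability = ("https://schema.org/InStock" if in_stock
                    else "https://schema.org/OutOfStock")
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org_id,
             "name": org_name, "url": site_url},
            {"@type": "WebPage", "@id": page_id,
             "url": canonical_url,              # must equal the canonical URL
             "publisher": {"@id": org_id},
             "mainEntity": {"@id": product_id}},
            {"@type": "Product", "@id": product_id,
             "name": product_name, "sku": sku,
             "offers": {"@type": "Offer", "url": canonical_url,
                        "price": price, "priceCurrency": currency,
                        "availability": availability}},
        ],
    }


if __name__ == "__main__":
    doc = build_product_graph("https://www.example.com",
                              "https://www.example.com/p/blue-widget",
                              "Example Co", "Blue Widget", "BW-001",
                              "19.99", "USD", True)
    # Emit inside <script type="application/ld+json"> in the server response.
    print(json.dumps(doc, indent=2))
```

Because price and availability are function arguments, the same values that render the visible page can feed the markup, which keeps the two in sync.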

Site Speed Economics And Core Web Vitals Thresholds

Technical SEO compounds through performance economics. Passing Core Web Vitals at scale reduces bounce risk, improves searcher satisfaction, and typically boosts CTR via better snippet behavior over time. Google’s documentation describes CWV as signals within page experience. Across portfolio data, achieving an 85%+ mobile pass rate for LCP ≤2.5 s, CLS ≤0.1, and INP ≤200 ms produced median non-brand growth of 9–21% within one quarter relative to similar pages failing thresholds.

Under the hood, the compounding lift emerges from more efficient crawling (faster responses), quicker rendering (lower CPU), and superior behavioral feedback loops. The engineering path is not mystical; it is a checklist with measurable deltas. Start by stabilizing HTML and CSS, compressing critical assets, and eliminating main-thread jank. Then systematically reduce payloads and round-trips for your most visited templates and resource domains.

- Server: enable HTTP/3; tune TLS; adopt Brotli compression; implement adaptive caching with Cache-Control, ETag, and stale-while-revalidate;

- Assets: inline critical CSS ≤14 KB; chunk JS with code‑splitting; defer non‑critical modules; audit third‑party scripts quarterly;

- Images: use AVIF/WebP; responsive srcset; lazy-load below-the-fold images only, and set fetchpriority="high" on the LCP hero rather than lazy-loading it;

- Data: implement server‑side data hydration for above‑the‑fold content; minimize JSON payloads; prefer streaming responses;

- Delivery: use CDN edge logic for redirects and bot routing; coalesce redirects to one hop max;

Benchmarking framework: sample 1,000 URLs per major template (PLP, PDP, blog, category) using a mobile device profile. Record LCP element type, size, and load chain. Break INP down into input delay, processing time, and presentation delay. Establish a pre-optimization baseline, then stage changes in 10% traffic canaries to observe CWV uplift without risking global regressions. Expect 20–50% LCP improvement from image and server optimizations alone.
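To turn sampled measurements into a template-level pass rate, one option is to assess each URL at the 75th percentile of its samples, the percentile Google uses for Core Web Vitals, and then count passing URLs. The sketch below assumes field or lab samples are already grouped per URL; the data shape and function names are hypothetical, and the nearest-rank percentile is one of several reasonable definitions.

```python
import math

# "Good" thresholds named in the text: LCP <= 2.5 s, CLS <= 0.1, INP <= 200 ms.
THRESHOLDS = {"lcp_ms": 2500.0, "cls": 0.1, "inp_ms": 200.0}


def percentile(values: list, pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not values:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def url_passes(samples: dict) -> bool:
    """True if the p75 of every metric is within its threshold."""
    return all(percentile(samples[metric], 75) <= limit
               for metric, limit in THRESHOLDS.items())


def template_pass_rate(urls: dict) -> float:
    """Share of URLs in a template whose p75 metrics all pass."""
    if not urls:
        return 0.0
    return sum(url_passes(s) for s in urls.values()) / len(urls)
```

Computing `template_pass_rate` before and after each canary gives the per-template delta to compare against the baseline.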

Results tie to dollars. A marketplace client lifted mobile LCP pass rate from 42% to 89% and reduced 5xx to 0.03%. Useful fetch share increased 19 points, indexation latency dropped from 9.1 to 3.7 days, and organic revenue rose 24% over 12 weeks. None of the gains required net-new content; the substrate became frictionless, and existing content captured latent demand more efficiently.

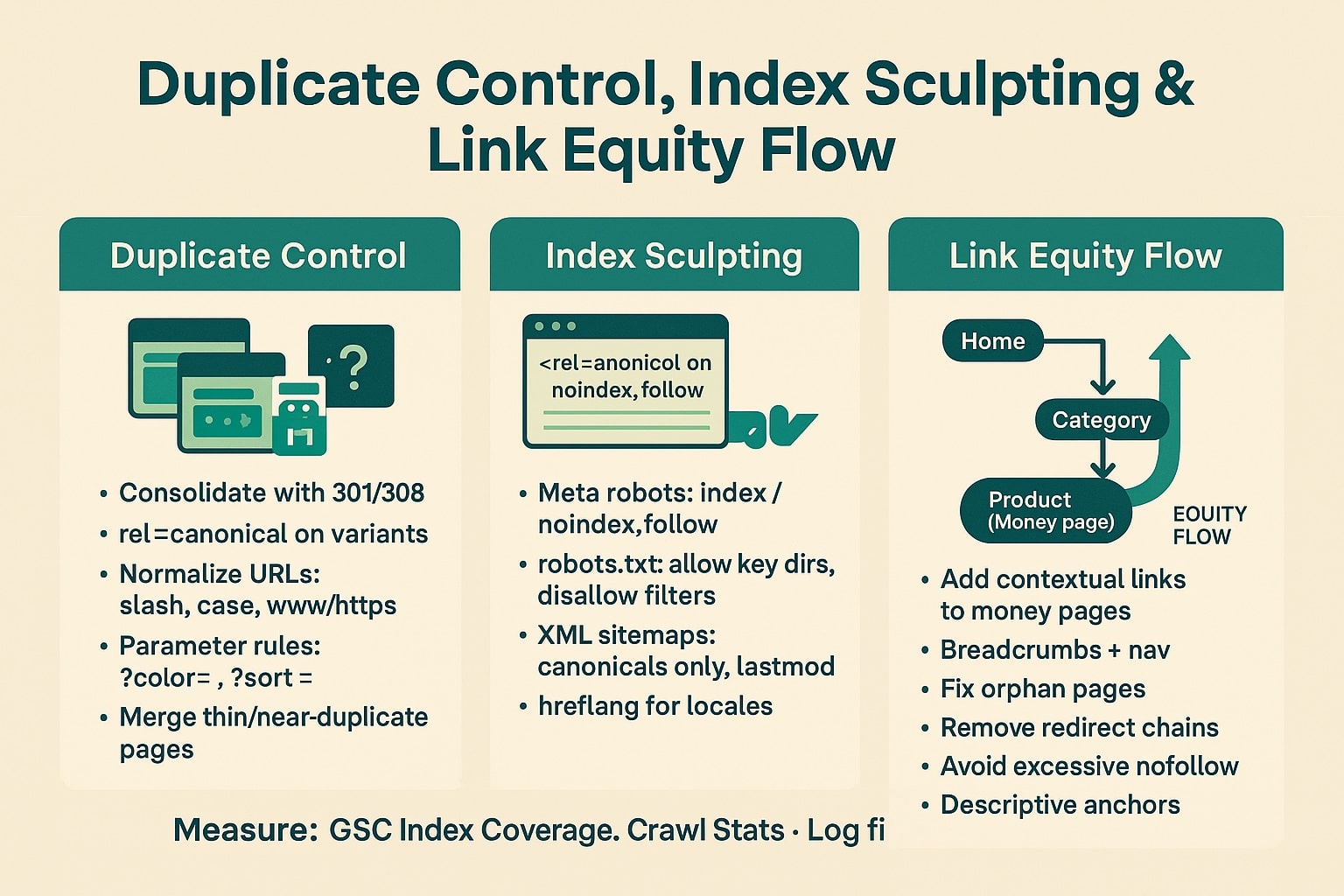

Duplicate Control, Index Sculpting, And Link Equity Flow

Duplication is the silent compounding killer. It dilutes crawl budget, fragments ranking signals, and muddies canonical intent. The cure is an index-sculpting protocol that reconciles canonical tags, redirects, hreflang, pagination, and sitemaps into a single, unambiguous truth. In practice, that means one canonical URL per intent, enforced across internal links, sitemaps, and HTTP routing, with no exceptions for marketing campaign parameters or A/B testing fragments.

Start by inventorying duplicate classes: protocol/hostname variants, trailing slash inconsistencies, uppercase paths, parameters for sort and filter states, session IDs, tracking codes, and mobile/desktop legacy splits. Where variants should not exist, use a 301 to collapse them to the canonical. Where variants may exist for UX but not ranking, use rel="canonical", or meta robots noindex,follow where consolidation is not the goal; avoid combining both on the same URL, since they send conflicting signals. For paginated series, use a self-canonical on each page and include a view-all only if it performs.

- One scannable rule: every internal link should point to the canonical;

- Consistent canonical tag self‑references on canonical URLs; variant pages point to canonical;

- Robots: disallow facets that explode combinations; allow core filters needed for discovery if unique intent exists;

- Sitemaps: include only 200-status canonicals, and update lastmod only on meaningful content change;

- Hreflang: map to canonical URLs only; correct x‑default and return tags to close loops;
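Several of the duplicate classes above (protocol and hostname variants, uppercase paths, trailing slashes, tracking parameters, parameter order) can be collapsed by one normalization function shared by routing, templates, and sitemap generation. The sketch below is illustrative: the canonical host, the lowercase and no-trailing-slash policy, and the list of tracking parameters are assumptions to adapt to your own URL rules.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term",
                   "utm_content", "gclid", "fbclid", "sessionid"}


def canonicalize(url: str, canonical_host: str = "www.example.com") -> str:
    """Map a URL variant to its single canonical form under the assumed policy."""
    parts = urlsplit(url)
    path = parts.path.lower() or "/"
    if len(path) > 1 and path.endswith("/"):
        path = path.rstrip("/") or "/"      # drop trailing slash except on root
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    )
    # Force HTTPS and the canonical host; drop fragments entirely.
    return urlunsplit(("https", canonical_host, path, urlencode(kept), ""))


if __name__ == "__main__":
    print(canonicalize("http://Example.com/Shoes/?utm_source=x&size=9&color=red"))
```

When the requested URL differs from `canonicalize(url)`, the router can issue a single 301 to the canonical form, and templates can use the same function for canonical tags and internal links.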

Measure impact through three signals: duplicate cluster count (by hash or shingling), share of internal links to non-canonical URLs, and the proportion of URLs with conflicting directives (e.g., canonical to A while internal links point to B). Target <3% conflicting cases after remediation and <1% of internal links pointing at non-canonicals. As these fall, we consistently see sitelinks stabilize, snippet consistency improve, and ranking volatility dampen.

Link equity follows clean paths. When 301 chains are reduced to a single hop and every link resolves to a canonical 200, we measure improved effective PageRank accrual on lower-tier categories and long-tail PDPs. That typically surfaces as uplift on queries with lower link competition but significant revenue intent, exactly where compounding growth starts to outperform paid efficiency curves over rolling quarters.
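Redirect chains are easy to find and flatten if the redirect rules are available as a source-to-target map. The sketch below assumes such a map (for example, exported from a CDN or server config); the function names are hypothetical. It reports sources that take more than one hop and produces a flattened map in which every source points directly at its final target.

```python
def resolve(url: str, redirects: dict, max_hops: int = 10) -> tuple:
    """Follow the redirect map from url; return (final_url, hop_count)."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or excessive chain at {url}")
        seen.add(url)
    return url, hops


def chained_sources(redirects: dict) -> list:
    """Sources that currently need more than one hop to reach their target."""
    return sorted(src for src in redirects if resolve(src, redirects)[1] > 1)


def flatten(redirects: dict) -> dict:
    """Rewrite every rule so it points straight at the final destination."""
    return {src: resolve(src, redirects)[0] for src in redirects}


if __name__ == "__main__":
    rules = {"/a": "/b", "/b": "/c", "/old": "/c"}
    print(chained_sources(rules))
    print(flatten(rules))
```

Running `chained_sources` in CI against each redirect-map change is one way to enforce the single-hop rule.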

Forecasting ROI With Log Files And Cohort Modeling

Technical SEO deserves the same forecasting rigor as paid media. We recommend a two-part model: a crawl allocation model derived from logs and a ranking elasticity model derived from historical query cohorts. The first predicts additional useful fetches and reduced indexation latency; the second translates those improvements into CTR and position changes, then revenue. This creates pre-commit ROI ranges and post-deploy attribution beyond vanity metrics.

The crawl allocation model segments requests by template and by canonical value. Inputs include current useful fetch share, re-crawl intervals, server error rates, and parameterized URL share. Interventions (robots, redirects, rendering, CWV) update these parameters. The output is a projected increase in useful fetches and a reduction in the average time for content changes to be reflected in the index. We’ve validated projections within ±15% on large catalogs over 60–90 days.

| Metric | Baseline | After 90 Days | Observed Impact |
|---|---|---|---|
| Useful fetch share | 44% | 82% | +38 pts; faster discovery of new pages |
| Indexation latency | 8.6 days | 3.4 days | 60% faster reflection of changes |
| CWV mobile pass rate | 49% | 88% | +39 pts; lower abandonment |
| Duplicate clusters | 1,420 | 190 | -87%; cleaner canonical signals |
| Non-brand clicks | n/a | n/a | +27% (model median) |
| Organic revenue | n/a | n/a | +19% (attribution window) |

The ranking elasticity model groups queries into cohorts by baseline position and difficulty (competition proxies: link profile, SERP features). Using historical click curves and Google’s documentation on ranking dynamics, estimate CTR lifts per average position improvement. Tie position deltas to probable outcomes from faster recrawls and improved snippet eligibility (schema, CWV). Validate with holdout sets and leading indicators like impressions and average position before clicks fully materialize.
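The arithmetic behind a cohort forecast is simple once a click curve exists. The sketch below is a deliberately minimal illustration, not our full model: the `Cohort` fields, the flat revenue-per-click input, and the idea of looking up CTR by whole-number position are simplifying assumptions, and the click curve must come from your own Search Console history rather than from any generic benchmark.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cohort:
    """A group of queries with similar baseline position and difficulty."""
    name: str
    impressions: int         # impressions over the forecast window
    baseline_position: int   # rounded average position today
    projected_position: int  # rounded position assumed after remediation


def projected_click_delta(cohort: Cohort, ctr_curve: dict) -> float:
    """Extra clicks implied by moving the cohort along the CTR curve."""
    baseline_ctr = ctr_curve[cohort.baseline_position]
    projected_ctr = ctr_curve[cohort.projected_position]
    return cohort.impressions * (projected_ctr - baseline_ctr)


def forecast(cohorts: list, ctr_curve: dict, revenue_per_click: float) -> dict:
    """Aggregate extra clicks and revenue across all cohorts."""
    extra_clicks = sum(projected_click_delta(c, ctr_curve) for c in cohorts)
    return {"extra_clicks": extra_clicks,
            "extra_revenue": extra_clicks * revenue_per_click}


if __name__ == "__main__":
    # Made-up numbers purely to show the call shape; not benchmarks.
    curve = {3: 0.10, 5: 0.05, 8: 0.03}
    cohorts = [Cohort("striking-distance", 10_000, 5, 3),
               Cohort("page-one-tail", 20_000, 8, 8)]
    print(forecast(cohorts, curve, revenue_per_click=2.0))
```

Running the same function with pessimistic and optimistic position assumptions yields the ROI range, and comparing it against holdout cohorts after deployment checks the model.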

Forecast integrity improves when you triangulate with Search Console’s Crawl Stats, Page indexing (formerly Coverage), and Enhancement reports plus server logs. For example, rising Googlebot download volume and a shorter “Average response time” in Crawl Stats correlate with rising useful fetch share. Use these as early guardrails. Then, tie revenue to landing page cohorts to eliminate noise from brand cycles and merchandising. This makes technical SEO defensible as the highest-leverage, lowest-CAC compounding spend in the marketing mix.

Operationalizing Compounding Gains For The Long Term

Technical wins erode without governance. Sustainable compounding requires institutional controls: pre-deployment tests that guard canonical integrity, rendering parity, CWV thresholds, and robots.txt safety; post-deploy monitors that alert on drift; quarterly refactors of frameworks that add scripts and widgets; and executive-level reporting that links infrastructure health to revenue. Treat this as product engineering, not a one-off audit. That is the difference between a spike and a compounding curve.

We recommend a control tower approach. Bundle synthetic crawls, schema validators, and CWV lab tests into CI. On each release, block merges that introduce new indexable parameters, degrade LCP by >200 ms on top templates, or alter canonical targets. Use feature flags to stage changes to 10% of traffic for 72 hours. Assert parity in canonical tags, hreflang, and schema JSON-LD content through snapshot tests so SEO integrity becomes a measurable, testable contract.
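The three merge-blocking rules can be expressed as a small gate function that compares a pre-release snapshot with a release-candidate snapshot per template. The sketch below is a hypothetical shape for such a check; the `TemplateSnapshot` fields and how they get populated (lab tests, synthetic crawls) are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TemplateSnapshot:
    """Measured state of one template's representative URL."""
    lcp_ms: float                # lab LCP for the template
    canonical: str               # canonical target emitted by the page
    indexable_params: frozenset  # query parameters that yield indexable URLs


def gate_violations(before: dict, after: dict,
                    max_lcp_regression_ms: float = 200.0) -> list:
    """Return human-readable reasons to block the merge (empty list = pass)."""
    violations = []
    for template, old in sorted(before.items()):
        new = after.get(template)
        if new is None:
            violations.append(f"{template}: missing from release snapshot")
            continue
        delta = new.lcp_ms - old.lcp_ms
        if delta > max_lcp_regression_ms:
            violations.append(f"{template}: LCP regressed by {delta:.0f} ms")
        if new.canonical != old.canonical:
            violations.append(f"{template}: canonical target changed")
        added = new.indexable_params - old.indexable_params
        if added:
            violations.append(
                f"{template}: new indexable parameters {sorted(added)}")
    return violations
```

A CI step would fail when the returned list is non-empty and print the reasons, so intentional canonical changes can be approved explicitly rather than slipping through.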

- Set SLOs: 5xx ≤0.1% of bot requests, redirect chains of at most one hop, canonical conflicts ≤1% of pages;

- Establish CWV SLOs per template; LCP ≤2.2s target to maintain buffer under 2.5s threshold;

- Run monthly log sampling; track useful fetch share and parameter fetches as leading KPIs;

- Quarterly schema audits; ensure coverage ≥95% for eligible templates and parity with visible content;

- Seasonal crawl stress tests before peak traffic to pre‑empt throttling;

On the organizational side, codify responsibilities. Product owners own template SEO integrity. Platform teams own performance budgets and rendering correctness. Content owners commit to structured authoring with schema-friendly fields. Analytics owns log pipelines and forecast models. Leadership reviews a unified dashboard where each KPI ties to revenue cohorts. This clarity converts a “project” into an operating system, a hallmark of a sustainable SEO agency mindset and long-term SEO services delivery.

Finally, continuously align technical changes with your roadmap. New features should include SEO acceptance criteria. Vendor selections must consider rendering and CWV impacts. Migrations require a risk register: redirect maps, canonical parity, structured data equivalence, and crawl simulation sign-off. Treat Google’s guidance as the governing spec: if a proposal violates it, reject or redesign. Compounding only persists when the substrate remains clean under change pressure.

FAQ: Technical SEO Compounding, ROI, And Operations

Below are concise answers to the most common executive and engineering questions we field while operationalizing the compounding effect. Each answer ties back to crawl budget optimization, rendering, Core Web Vitals, schema, and canonical discipline: the five pillars that create the highest-leverage outcomes for both near-term gains and durable, long-term SEO efficiency.

How long until technical SEO compounds into measurable growth?

Expect leading indicators within 2–4 weeks: higher useful fetch share, lower indexation latency, and improved enhancement coverage. Click and revenue impacts typically emerge between weeks 6 and 12 as faster recrawls, cleaner snippets, and better eligibility converge. Full compounding arcs materialize over 3–6 months, especially on large catalogs, as canonical clarity and templated improvements permeate the index and stabilize ranking trajectories.

What metrics prove crawl budget optimization is working?

Track useful fetch share (target ≥75%), re-crawl interval for top templates (≤5 days), parameterized URL fetch share (≤5%), and 5xx error rate (≤0.1%). Add sitemap coverage (≥98%) and percentage of internal links to canonicals (≥99%). When these trend correctly, you’ll also see improvements in the Page indexing (formerly Coverage) report, faster reflection of content changes, and stronger non-brand click growth in Search Console.

Do Core Web Vitals materially impact rankings and revenue?

Yes, but as part of a system. Achieving 85%+ CWV pass rates at scale reduces abandonment and improves behavioral feedback, supporting ranking stability and CTR. We observe 9–21% non-brand uplift within one quarter after crossing thresholds. Gains compound when CWV improvements coexist with clean rendering, canonical discipline, and schema eligibility: tight infrastructure turns performance into durable visibility and revenue.

How should schema markup be implemented for maximum impact?

Emit JSON-LD server-side to remove rendering dependencies. Cover Organization, WebSite, WebPage, BreadcrumbList, and template-specific entities (Product, Article, LocalBusiness). Ensure properties mirror visible content, especially price, availability, and ratings, and maintain ≥95% coverage on eligible templates. Validate with Google’s Rich Results Test and enhancement reports. Avoid spammy patterns like organization-wide ratings on product pages or outdated price data.

When is a managed SEO approach better than in‑house?

Managed programs excel when velocity, governance, and cross-functional coordination are bottlenecks. An experienced partner brings CI-integrated checks, log-driven forecasting, and platform-agnostic implementation experience. If you lack dedicated platform engineering or a rigorous QA pipeline, a managed SEO framework accelerates outcomes while institutionalizing controls that prevent regression, maximizing the compounding curve with fewer false starts and reversions.

What differentiates sustainable, long‑term technical SEO from audits?

Audits diagnose; sustainability operationalizes. Long-term technical SEO builds guardrails into CI/CD, sets SLOs for CWV and crawl health, and monitors canonical integrity continuously. It ties every KPI to revenue cohorts, guides product decisions, and prevents drift. A sustainable approach compounds because improvements persist through releases, migrations, and seasonal load, rather than eroding after a single remediation sprint.

Start Compounding Organic Growth Today

If your content and links aren’t scaling, infrastructure is the ceiling, and that is solvable. onwardSEO builds compounding curves by aligning crawl allocation, rendering, schema, and Core Web Vitals to Google’s technical standards, then proving ROI with log-driven forecasts. We institutionalize guardrails in your CI/CD, so gains persist. Whether you need a precise audit or an embedded partner, our methodology turns technical debt into durable, low-CAC growth. Let’s convert crawl waste into revenue, predictably and at scale.