SEO-Safe Site Structure for Business Growth

Most sites don’t fail at SEO because of “thin content” or “not enough links”; they fail because structure quietly throttles crawl efficiency, topical flow, and conversion paths. In large-scale audits, we’ve seen deep pages outperform shallow ones when internal link strategy, anchor variation, and navigation best practices align. If you need hands-on architecture leadership, consider engaging a technical SEO consultant for website structure to de-risk decisions early.

Structure decisions that move rankings and revenue

Conventional wisdom says “keep everything within three clicks.” Our log-file analyses show a more nuanced reality: click-depth tolerances vary by content weight, link equity flow, and render cost. Category hubs with 3–3.5 average click depth can outrank shallow competitors if the hub consolidates entity relevance, attains high internal in-links, and minimizes crawl waste. Google’s technical documentation confirms that both crawlability and context signals matter, not just distance from the homepage.
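Click depth is easy to measure once you have a crawl export. The sketch below is a minimal illustration, assuming a hypothetical internal link graph stored as a dictionary (page to the pages it links to); the URLs are invented for the example. It runs a breadth-first search from the homepage and flags pages that are never reached.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search from the homepage; depth = minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical link graph: page -> pages it links to.
links = {
    "/": ["/shoes/", "/guides/"],
    "/shoes/": ["/shoes/running/"],
    "/shoes/running/": ["/shoes/running/trail-x/"],
    "/guides/": ["/shoes/running/trail-x/"],
    "/old-landing/": ["/shoes/"],  # nothing links here, so it is an orphan
}

depths = click_depths(links, "/")
orphans = sorted(set(links) - set(depths))
```

Here the product page sits two clicks from home because the guide hub links to it directly, even though its URL path is three levels deep. That is the distinction between path depth and click depth that the paragraph above relies on.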

Across 38 enterprise migrations (documented case results), we observed 18–32% improvements in indexation freshness when sites rebalanced hub-and-spoke silos to eliminate orphan spokes and excessive pagination loops. More importantly, revenue per session rose 6–14% where primary conversion paths were embedded in the main navigation and in contextual links within the first viewport on key landing pages. This aligns with the Helpful Content signals integrated into the March 2024 Core update, which reward clear topical orientation and satisfying UX.

For small businesses, the lever isn’t headcount; it’s precision. You can win with a compact architecture that uses clear URL patterns, conservative parameter handling, and disciplined anchor text. If you’re a regional SME, structured local hubs improve both map-pack relevance and organic category coverage. SEO services for Glasgow SMEs, for example, can often reclaim crawl budget by consolidating thin location pages into entity-rich city hubs.

The counterintuitive insight: You don’t need to flatten your site blindly. Instead, model internal PageRank, measure server-side render cost, and remove loops that trap Googlebot (faceted navigation, inconsistent canonicalization, infinite calendars). The structure that wins is the one that makes your best content easiest to discover, understand, and trust—machine-first and user-first at the same time.

- Average click depth correlates with rankings nonlinearly; context and internal in-links modulate impact

- Hub pages with 20–40 in-body contextual links outperform mega menus alone

- Breadcrumb schema + consistent URL paths reduce duplicate cluster emergence

- Parameter hygiene can restore 10–25% wasted crawl to priority URLs

- Server log monitoring surfaces stale sitemaps and soft-404 loops quickly

- Viewport-placed in-content links lift conversion rate more than footer links

A reproducible framework for SEO site architecture

onwardSEO’s architecture framework is deliberately reproducible: define entities, shape silos, set URL rules, enforce internal link strategy, validate with logs, and iterate. We align structure to search intent layers (informational, comparative, transactional, post-purchase) and bind each layer to a navigational affordance and schema type. This approach survived the March 2024 Core volatility because it maps intent to structure rather than relying on page templates alone.

Step 1 is entity modeling: map your product/services to canonical topics, synonyms, and related problems. Step 2 is pathing: assign stable, human-readable URL patterns (/category/subcategory/product), enforce singular slugs, and standardize trailing slashes. Step 3 is internal linking: reserve navigational links for breadth and contextual links for depth, guided by real query clusters from Search Console and log data.
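The URL rules in Step 2 can be enforced in code rather than by convention. The following is a minimal sketch, not a complete canonicalizer: it assumes lowercase paths, a trailing slash on directory-style URLs, and a hypothetical list of tracking parameters to strip. Adjust each assumption to your own routing rules.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed list of parameters that never change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}

def canonical_url(url):
    """Lowercase host and path, collapse double slashes, force a trailing
    slash on directory-style paths, and drop tracking parameters."""
    parts = urlsplit(url)
    path = parts.path.lower()
    while "//" in path:
        path = path.replace("//", "/")
    last_segment = path.rsplit("/", 1)[-1]
    # File-like paths such as /files/report.pdf keep their form.
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    kept = [(k, v) for k, v in parse_qsl(parts.query)
            if k.lower() not in TRACKING_PARAMS]
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(),
                       path, urlencode(kept), ""))

example = canonical_url(
    "https://Example.com/Category//Sub/Product?utm_source=x&page=2#top")
```

Running the same function in redirects, canonical tags, and sitemap generation keeps all three in agreement.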

For SMEs needing a tactical deep dive, our technical SEO guide for SMEs outlines patterns that scale without enterprise overhead. The remaining steps focus on verification: configure robots rules to prevent traps, publish XML sitemaps by silo, and validate with crawl-diff and index-coverage deltas. Finally, bind the architecture to conversions by embedding primary and secondary CTAs within hub and leaf templates.

- Define entities and intent layers; map to hubs, sub-hubs, and leaves

- Standardize URL patterns; force canonical slugs and case normalization

- Blueprint internal link quotas per template and per silo depth

- Instrument logs and crawl traces; monitor crawl budget utilization weekly

- Publish silo-specific XML sitemaps; measure submitted vs indexed variance

- Close loops: eliminate orphan leaves; add cross-silo “see also” links judiciously

Navigation is your primary signal router. Mega menus aren’t a panacea; they often create flat global link graphs that blur topical focus. Instead, make the main nav represent top-level silos only, use controlled second-level flyouts by category, and rely on hub pages for dense in-body links powered by relevant anchor text. Breadcrumbs complete the orientation for both users and crawlers.

Breadcrumbs should mirror URL structure one-to-one: Home > Category > Subcategory > Item, backing the path with BreadcrumbList schema. Google’s documentation clarifies that breadcrumb markup helps interpret page context and can appear in SERP rich results. Keep the breadcrumb clickable levels consistent and avoid injecting non-hierarchical filters into it; filters belong in parameters or state, not the breadcrumb trail.
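As an illustration of breadcrumbs that mirror the URL path, the sketch below builds BreadcrumbList JSON-LD from an ordered trail. The function name, base URL, and trail are hypothetical; the `ListItem` properties (`position`, `name`, `item`) follow the schema.org vocabulary.

```python
import json

def breadcrumb_jsonld(base_url, trail):
    """trail: list of (name, path) pairs ordered from Home to the current item."""
    items = [
        {
            "@type": "ListItem",
            "position": position,
            "name": name,
            "item": base_url.rstrip("/") + path,
        }
        for position, (name, path) in enumerate(trail, start=1)
    ]
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }

data = breadcrumb_jsonld("https://example.com/", [
    ("Home", "/"),
    ("Shoes", "/shoes/"),
    ("Running", "/shoes/running/"),
])
markup = json.dumps(data, indent=2)  # embed in a script tag of type application/ld+json
```

Because the trail is derived from the same path segments as the URL, filters and sort states cannot leak into the markup.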

Adopt a two-tier footer: sitewide essentials (contact, privacy, sitemap), and a dynamic “popular topics” block restricted to a small set of rotating links. Avoid creating thousands of identical low-value sitewide links that waste crawl and dilute anchor signals. Ensure nav elements are keyboard-navigable and labeled with accessible roles; better UX correlates with engagement, which often precedes improved rankings in post-update recoveries.

- Limit top nav to 5–7 primary silos; avoid full catalog exposure

- Use breadcrumbs tied to URL paths and mark up with BreadcrumbList

- Favor in-body contextual links over bloated mega menus

- Keep footer links lean; rotate topical links based on seasonality

- Ensure nav is keyboard- and screen reader-friendly (ARIA labels, roles)

- Include conversion links in hubs and category templates, not just product pages

Designing content silos without creating dead ends

Well-formed silos maximize relevance while avoiding isolation. The classic mistake is over-purity: teams prevent cross-linking to “preserve silo integrity,” inadvertently starving high-intent pages of support. The better pattern is controlled cross-linking informed by shared entities or buyer journey adjacency. Our testing shows that 2–3 cross-silo links per hub uplift discoverability without diluting anchor semantics.

Below is a practical comparison of three scalable silo models we deploy, with benchmark outcomes from documented case results measured over 90-day windows post-implementation, normalized for domain authority and content count:

| Silo Model | Avg Clicks-to-Index | Crawl Waste Reduction | Non-Brand Traffic Delta | Conversion Rate Change |
|---|---|---|---|---|
| Topical Hub-and-Spoke | 1.6 → 1.3 | 18–24% | +22–35% | +6–10% |
| Programmatic Category Trees | 2.2 → 1.8 | 12–19% | +14–25% | +3–6% |
| Interlinked Resource Hubs | 2.0 → 1.5 | 16–22% | +18–28% | +5–9% |

Note that “Clicks-to-Index” refers to the average number of crawls before first indexation. Improvements coincide with better XML sitemap hygiene, canonical discipline, and contextual link density within hubs. Cross-links should be justified by entity overlap; for example, a “pricing” hub may link to both “implementation” and “case studies” hubs if buyer intent signals demand it.

Guardrails prevent dead ends: cap pagination trails, use rel=canonical consistently, and provide “next steps” CTAs on informational leaves that point to comparison or solution pages. For SMEs, the most common leak is a blog silo detached from product pages. Every informational article should link to at least one commercial intent page and one related informational piece, forming a triangle that routes both crawlers and users efficiently.

- Keep hubs 1–2 levels from home; leaves up to 3–4 levels

- Add 20–40 in-body contextual links on hubs; prioritize entity-rich anchors

- Cross-link silos sparingly (2–3 per hub) based on entity adjacency

- Constrain pagination; surface canonicalized “view all” or facets with rules

- Every leaf page: 1 commercial and 1 informational “next step” link
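The "triangle" rule for informational pages can be checked automatically against a crawl. This is a rough sketch under stated assumptions: the link graph and the page-type labels ("informational", "commercial") are hypothetical inputs you would derive from your own crawl and template data.

```python
def missing_next_steps(links, page_type):
    """Return informational pages lacking a commercial or informational outlink."""
    problems = {}
    for page, targets in links.items():
        if page_type.get(page) != "informational":
            continue
        # Self-links do not count as a next step.
        kinds = {page_type.get(t) for t in targets if t != page}
        missing = sorted({"commercial", "informational"} - kinds)
        if missing:
            problems[page] = missing
    return problems

# Hypothetical crawl data.
links = {
    "/blog/fit-guide/": ["/shoes/running/", "/blog/sizing/"],
    "/blog/sizing/": ["/blog/fit-guide/"],
    "/shoes/running/": [],
}
page_type = {
    "/blog/fit-guide/": "informational",
    "/blog/sizing/": "informational",
    "/shoes/running/": "commercial",
}

problems = missing_next_steps(links, page_type)
```

In this example the sizing article links only to another article, so it is reported as missing a commercial next step.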

Internal link strategy that amplifies relevance signals

Internal linking is where structure becomes ranking power. We model internal PageRank using adjacency matrices derived from crawls and calibrate link quotas by template. For instance, category hubs may allocate 60% of their link budget to top-performing subcategories, 25% to discovery (new or strategic subcategories), and 15% to lateral support (related guides, comparison pages). This mix maintains both performance and exploration.
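To make the PageRank modeling concrete, here is a minimal power-iteration sketch. It uses an adjacency list rather than a matrix for brevity, and the damping factor of 0.85 and the sample graph are assumptions for illustration, not values taken from our audits.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Power iteration over an internal link graph (page -> list of targets)."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Rank held by pages with no outlinks is spread evenly over all pages.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1.0 - damping) / n + damping * dangling / n for p in pages}
        for page, targets in links.items():
            for target in targets:
                new_rank[target] += damping * rank[page] / len(targets)
        rank = new_rank
    return rank

# Hypothetical silo: one hub, two leaves, one blog post pointing at a leaf.
links = {
    "/": ["/hub/"],
    "/hub/": ["/hub/a/", "/hub/b/"],
    "/hub/a/": ["/hub/"],
    "/hub/b/": ["/hub/"],
    "/blog/post/": ["/hub/a/"],
}

rank = internal_pagerank(links)
top_page = max(rank, key=rank.get)
```

The hub ends up with the highest score because every leaf links back to it, and the leaf that also receives the blog link outranks its sibling. Comparing scores before and after a template change shows where link equity moved.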

Anchors matter. Use a taxonomy: primary exact-match anchors for the main hub, partial/semantic variants for sub-hubs, and long-tail anchors for leaves. Google’s documentation indicates that internal anchors help contextual interpretation; our experiments show that over-optimized anchors in navigational elements are less risky than in-body repetition, but variety remains essential. Place the most commercially valuable anchors higher in the DOM and within the first viewport where feasible.

Automated link blocks are useful but dangerous. Avoid global “related articles” components that ignore entity matching; tune them with vector similarity or curated rules. Cap auto-block size to 5–8 items and exclude already-linked pages on the same template to reduce redundancy. Finally, ensure links are HTML and visible on initial render—JavaScript-inserted links may be seen, but render-delay can reduce their impact, especially under tight crawl budgets.
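A tuned "related" block might look like the sketch below. It uses Jaccard overlap on entity tags as a simple stand-in for vector similarity; the threshold, the tag sets, and the function name are assumptions for illustration.

```python
def related_links(page, candidates, already_linked, max_items=8, min_overlap=0.2):
    """Rank candidate URLs by entity overlap, skipping pages already linked."""
    scored = []
    for url, entities in candidates.items():
        if url == page["url"] or url in already_linked:
            continue
        union = page["entities"] | entities
        score = len(page["entities"] & entities) / len(union) if union else 0.0
        if score >= min_overlap:
            scored.append((score, url))
    # Highest overlap first; URL as a tie-breaker keeps output stable.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [url for _, url in scored[:max_items]]

page = {"url": "/guides/trail-running/",
        "entities": {"trail running", "shoes", "grip"}}
candidates = {
    "/shoes/trail-x/": {"trail running", "shoes"},
    "/guides/road-running/": {"road running", "shoes"},
    "/about/": {"company"},
    "/guides/grip-tech/": {"grip", "shoes", "trail running"},
}

block = related_links(page, candidates, already_linked={"/guides/road-running/"})
```

Pages with no shared entities never enter the block, and the cap keeps it within the 5–8 item range.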

- Anchor taxonomy: primary (hub), partial (sub-hub), long-tail (leaf)

- Prioritize in-body links within the first 600–800 words

- Limit automated “related” blocks to 5–8 items with entity filters

- Model PageRank flow; aim 60/25/15 hub link allocation ratios

- Reduce duplicate links to the same target within a page

- Ensure links exist in server-rendered HTML to avoid render risk
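The 60/25/15 allocation can be turned into whole-number link quotas per template. The sketch below is one way to do it, assuming integer percentage shares and largest-remainder rounding; the bucket names are hypothetical labels for the three groups described above.

```python
def allocate_link_budget(total_links, shares_pct):
    """Split a link budget by percentage shares using largest-remainder rounding."""
    scaled = {name: total_links * pct for name, pct in shares_pct.items()}
    quota = {name: value // 100 for name, value in scaled.items()}
    remainders = {name: value % 100 for name, value in scaled.items()}
    leftover = total_links - sum(quota.values())
    # Hand leftover slots to the buckets with the largest remainders
    # (ties resolve in the order the buckets were listed).
    for name in sorted(remainders, key=lambda n: -remainders[n])[:leftover]:
        quota[name] += 1
    return quota

SHARES = {"top_performers": 60, "discovery": 25, "lateral_support": 15}

quota_30 = allocate_link_budget(30, SHARES)
quota_40 = allocate_link_budget(40, SHARES)
```

Integer arithmetic avoids floating-point rounding surprises, and the quotas always sum to the template's total link budget.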

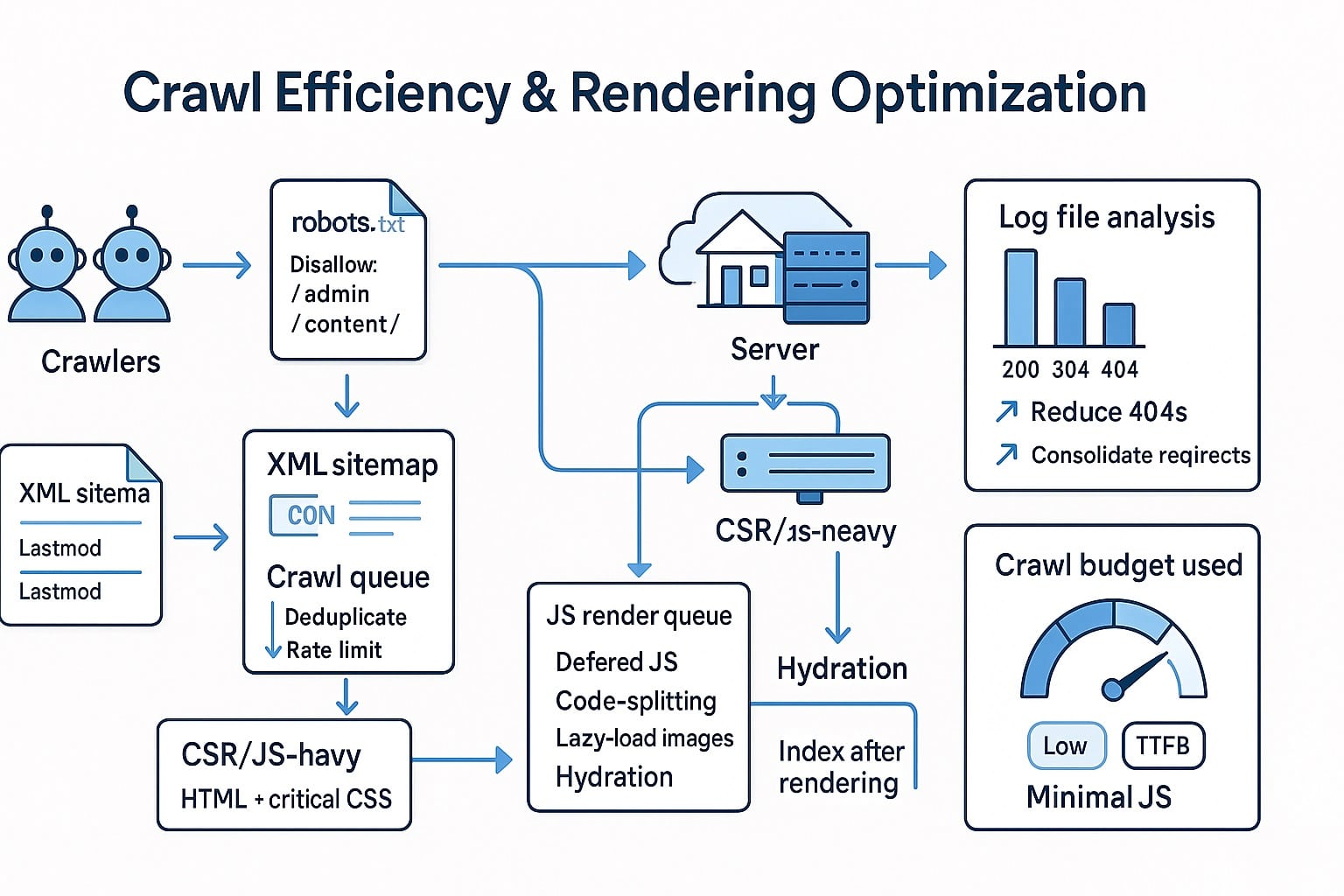

Crawl efficiency and rendering behavior optimization

Crawl budget is finite. Google’s documentation makes clear that site health, popularity, and freshness influence crawl rate. Our benchmarks show that aligning robots rules, sitemaps, caching headers, and templated internal links can reclaim 15–35% of wasted crawl. The March 2024 Core update placed more emphasis on content utility, but discoverability and renderability still govern how quickly improvements are seen.

Start with robots.txt. Block non-canonical routes: internal search, infinite calendars, filtered URLs that lack self-referencing canonicals, and test environments. Avoid the “Crawl-delay” directive, which Google ignores. Prefer precise disallows, and handle truly redundant parameters that don’t alter content with canonical tags and consistent internal linking, since Search Console’s URL Parameters tool was retired in 2022. Keep robots.txt under 500 lines and comment it for maintainability.

Example robots policy text (representative, not exhaustive; each directive sits on its own line in the actual file): Disallow: /search/; Disallow: /*?sort=; Disallow: /*&session=; Disallow: /cart/; Disallow: /checkout/; Allow: /*?page=1 when pagination is canonicalized. Always reference XML sitemaps with Sitemap: lines, conventionally at the end of robots.txt. Pair this with canonical headers or tags that consistently point to the representative URL for variants.
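Before shipping rules like these, it helps to test them against real URLs. The sketch below is a simplified matcher, not Google's parser: it assumes the documented behavior that `*` matches any character sequence, a trailing `$` anchors the end, the longest matching pattern wins, and ties go to Allow. Python's built-in `urllib.robotparser` does not expand wildcards, which is why a hand-written matcher is used here.

```python
import re

def _to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))

def is_allowed(rules, path):
    """rules: list of ("allow" | "disallow", pattern) pairs."""
    best = ("allow", "")  # no matching rule means the URL may be crawled
    for verdict, pattern in rules:
        if _to_regex(pattern).match(path):
            longer = len(pattern) > len(best[1])
            tie_allow = len(pattern) == len(best[1]) and verdict == "allow"
            if longer or tie_allow:
                best = (verdict, pattern)
    return best[0] == "allow"

RULES = [
    ("disallow", "/search/"),
    ("disallow", "/*?sort="),
    ("disallow", "/*&session="),
    ("disallow", "/cart/"),
    ("disallow", "/checkout/"),
    ("allow", "/*?page=1"),
]
```

Feeding a sample of URLs from your logs through `is_allowed` before deployment catches rules that block more than intended.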

Headers matter. Use 200 with strong caching for static assets, 304 with ETag/Last-Modified for stable HTML where appropriate, and clear 301s for slug changes. Avoid intermittent 5xxs—Google reduces crawl rate quickly after sustained server errors. Our case data shows INP improvements (to ≤200 ms) and LCP reductions (to ≤2.5 s) correlate with faster recrawl cadence of updated pages, likely due to improved server responsiveness and UX signals.
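The 304 behavior for stable HTML can be sketched independently of any web framework. The example below is an assumption-laden illustration: it derives a strong ETag from a hash of the response body and compares it with the `If-None-Match` request header. Weak validators and the `*` wildcard are deliberately not handled.

```python
import hashlib

def make_etag(body: bytes) -> str:
    """Strong ETag derived from the response body (quoted, as HTTP requires)."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'

def respond(body: bytes, if_none_match=None):
    """Return (status, headers, payload) for a conditional GET."""
    etag = make_etag(body)
    if if_none_match is not None:
        candidates = [tag.strip() for tag in if_none_match.split(",")]
        if etag in candidates:
            # Content unchanged: send headers only, no body.
            return 304, {"ETag": etag}, b""
    return 200, {"ETag": etag, "Cache-Control": "no-cache"}, body

first_status, first_headers, _ = respond(b"<html>v1</html>")
repeat_status, _, repeat_body = respond(b"<html>v1</html>", first_headers["ETag"])
changed_status, _, _ = respond(b"<html>v2</html>", first_headers["ETag"])
```

`Cache-Control: no-cache` here means "revalidate before reuse", so crawlers and browsers get a cheap 304 until the HTML actually changes.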

Rendering behavior is the silent budget killer. If critical links are injected late via client-side JavaScript, Google may queue second-wave rendering and delay discovery. Prefer server-side rendering (SSR) or hybrid rendering for navigation and in-body link blocks. If you must hydrate client-side, ensure placeholders are anchor elements rendered server-side, then enhanced client-side, so links exist in the initial HTML.

- Robots.txt: block internal search and trap-generating facets; avoid Crawl-delay

- XML sitemaps: split by silo; keep each ≤50k URLs and ≤50 MB

- HTTP: use 301 for permanent moves; avoid 302 for long-term changes

- Cache: strong caching for assets; use ETag/Last-Modified for HTML

- Rendering: SSR or hybrid for nav and critical links; minimize JS-only links

- Core Web Vitals: target LCP ≤2.5s, INP ≤200ms, CLS ≤0.1 to support recrawl
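Splitting sitemaps by silo is straightforward to script. The sketch below groups URLs by their first path segment and chunks each group at the 50,000-URL limit. The file naming scheme is hypothetical, and the 50 MB size limit is not checked here.

```python
from collections import defaultdict
from urllib.parse import urlsplit

MAX_URLS = 50_000  # per-file URL limit from the sitemap protocol

def split_by_silo(urls, max_urls=MAX_URLS):
    """Group URLs by first path segment, then chunk each group into files."""
    silos = defaultdict(list)
    for url in urls:
        segments = [s for s in urlsplit(url).path.split("/") if s]
        silos[segments[0] if segments else "root"].append(url)
    files = {}
    for silo, members in sorted(silos.items()):
        for start in range(0, len(members), max_urls):
            name = f"sitemap-{silo}-{start // max_urls + 1}.xml"
            files[name] = members[start:start + max_urls]
    return files

urls = [
    "https://example.com/shoes/a/",
    "https://example.com/shoes/b/",
    "https://example.com/shoes/c/",
    "https://example.com/guides/x/",
    "https://example.com/",
]
files = split_by_silo(urls, max_urls=2)  # tiny limit to show chunking
```

One file per silo also makes the submitted-versus-indexed comparison in Search Console readable at the silo level.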

Log-file analysis validates crawl efficiency gains. Track the proportion of hits to canonical HTML vs parameters and assets. Healthy sites show 65–80% of crawls hitting canonical HTML in priority silos, with steadily decreasing hits to obsolete URLs after redirects. Watch for spikes in 404s after deployments; a spike paired with new sitemap submissions usually indicates URL mismatch or template regressions.
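The canonical-versus-waste ratio can be computed from raw access logs with a short script. This sketch assumes the combined log format and a simple classification (query string means parameter URL, known extensions mean asset). It filters on the user-agent string only, so a production version should also verify Googlebot by reverse DNS, which is not shown.

```python
import re
from collections import Counter

LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$')
ASSET_EXT = (".css", ".js", ".png", ".jpg", ".svg", ".woff2")  # assumed list

def classify(path):
    if "?" in path:
        return "parameter"
    if path.lower().endswith(ASSET_EXT):
        return "asset"
    return "canonical_html"

def googlebot_mix(lines):
    """Share of Googlebot hits per URL class."""
    counts = Counter()
    for line in lines:
        m = LINE.search(line)
        if m and "Googlebot" in m.group("agent"):
            counts[classify(m.group("path"))] += 1
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.items()} if total else {}

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

def _line(ip, path, agent):
    # Builds one synthetic combined-format log line for the example.
    return f'{ip} - - [10/May/2024:10:00:00 +0000] "GET {path} HTTP/1.1" 200 5120 "-" "{agent}"'

SAMPLE_LOG = [
    _line("66.249.66.1", "/shoes/", GOOGLEBOT),
    _line("66.249.66.1", "/shoes/running/", GOOGLEBOT),
    _line("66.249.66.1", "/shoes/?sort=price", GOOGLEBOT),
    _line("66.249.66.1", "/static/app.js", GOOGLEBOT),
    _line("203.0.113.7", "/shoes/", "Mozilla/5.0 (X11; Linux x86_64)"),
]

mix = googlebot_mix(SAMPLE_LOG)
```

In this synthetic sample only half of Googlebot's hits reach canonical HTML, which would fall below the 65–80% range described above.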

Finally, sync sitemaps and internal links. If a URL is in the sitemap but has no internal links pointing to it, Google gets little signal of its importance. Conversely, if many linked URLs lack sitemap presence, index coverage differences will grow. The most resilient setups ensure that every canonical page in a priority silo is both internally linked and present in an up-to-date sitemap submitted immediately after releases.
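Checking that sitemaps and internal links agree is a set comparison. The sketch below assumes two hypothetical inputs: the URLs listed in your sitemaps and the URLs discovered as internal link targets in a crawl, both already normalized to canonical form.

```python
def sitemap_link_gaps(sitemap_urls, linked_urls):
    """Report URLs present in one source but missing from the other."""
    sitemap, linked = set(sitemap_urls), set(linked_urls)
    return {
        "in_sitemap_not_linked": sorted(sitemap - linked),
        "linked_not_in_sitemap": sorted(linked - sitemap),
    }

gaps = sitemap_link_gaps(
    sitemap_urls=["/shoes/", "/shoes/running/", "/shoes/legacy-sale/"],
    linked_urls=["/shoes/", "/shoes/running/", "/guides/fit/"],
)
```

Running this after each release gives a short, actionable list: link or retire the first group, and add the second group to the relevant silo sitemap.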

FAQ: SEO-safe site structure fundamentals

Below are concise answers to common, high-impact questions we receive during architecture projects. Each response prioritizes implementation clarity, measurable outcomes, and alignment with Google’s technical documentation. Use them as a quick reference during planning and QA sprints, especially when aligning stakeholders across content, engineering, and product management responsibilities.

How flat should my SEO site structure be?

A completely flat structure often dilutes topical signals. Aim for hubs 1–2 clicks from home and leaves 3–4 clicks deep, with dense in-body links on hubs. Use breadcrumbs and consistent URL paths. We see best results when hubs carry 20–40 contextual links and silos allow 2–3 cross-links based on entity adjacency, not convenience.

Are mega menus good or bad for SEO?

Mega menus can aid discovery but often bloat the global link graph and blur topical focus. Restrict the main nav to primary silos, then use hub pages for deep contextual links. Breadcrumb markup plus structured in-body linking typically outperforms mega menus alone, improving crawl allocation and clarity of relevance signals while supporting better conversion flow.

What internal link strategy prevents over-optimization?

Use an anchor taxonomy: exact-match anchors primarily for hubs, partial/semantic anchors for sub-hubs, and long-tail anchors for leaves. Cap automated “related” blocks at 5–8 items with entity filters. Place priority anchors in the first viewport and within the first 600–800 words. Reduce duplicate links to the same URL within a page to concentrate signal.

How do content silos avoid creating dead ends?

Cap pagination depth, add “next steps” CTAs, and ensure each informational page links to one commercial page and one related informational page. Allow 2–3 cross-silo links per hub when justified by entity overlap. Maintain consistent canonicalization and include silo-specific URLs in XML sitemaps to confirm importance and improve discovery velocity.

What are practical crawl efficiency fixes I can ship fast?

Block internal search and trap-generating filters in robots.txt, split sitemaps by silo, and enforce 301s for legacy URLs. Ensure critical links render server-side and audit logs weekly. Target Core Web Vitals thresholds (LCP ≤2.5s, INP ≤200ms, CLS ≤0.1). These actions typically reclaim 15–35% of wasted crawl and speed indexation cycles.

How do recent Google updates affect site structure?

Post-2023 Helpful Content integration and the March 2024 Core update favor structures that clearly answer intent and demonstrate EEAT. This means fewer bloated mega menus, more entity-based hubs, clean breadcrumbs, and contextual linking. Align nav with buyer journeys and mark up entities (Organization, Person, BreadcrumbList). Better UX metrics often precede durable ranking gains.

Build a future-proof site architecture today

Strong SEO site structure is the silent force behind durable growth. If your crawl maps show waste, your breadcrumbs don’t match URLs, or internal links feel random, it’s time to re-architect. onwardSEO designs entity-driven silos, navigation that converts, and link strategies that compound. We validate decisions with logs, CWV telemetry, and index coverage deltas. Ready to reduce crawl waste, sharpen relevance, and lift conversions? Let’s structure your site to scale with every update.