Crawl Budget Optimization For Small Sites That Want To Scale

“Crawl budget doesn’t matter for small sites” is the most expensive myth in organic acquisition. Google’s documentation says that crawl budget is less of a limiting factor for small sites, not that it is irrelevant. On compact architectures, crawl efficiency determines freshness, duplication control, and the speed of ranking propagation. For a deep dive in a different context, see our analysis on how to solve crawl budget on large websites, then apply the same rigor, appropriately scoped, to your smaller footprint.

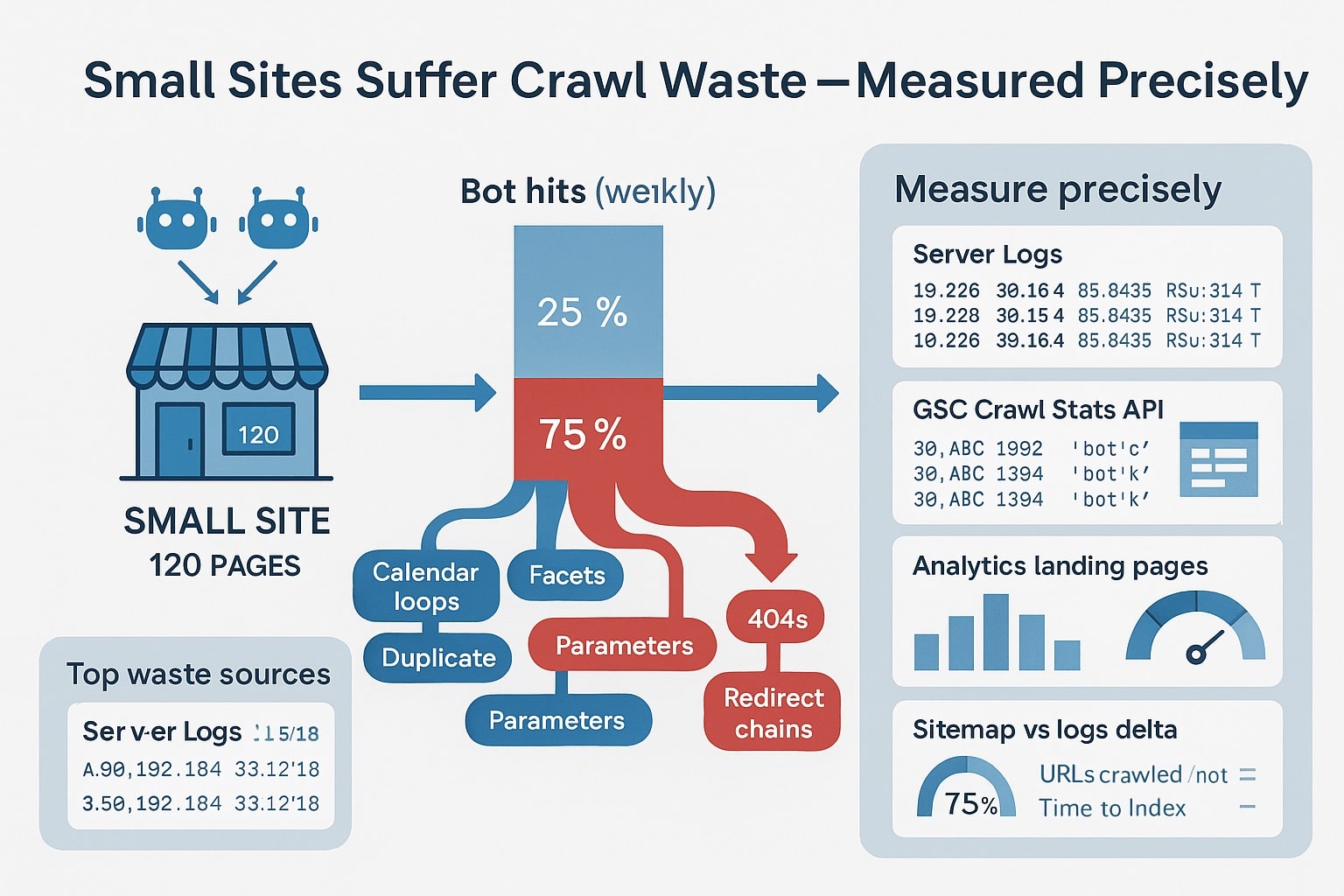

Small Sites Suffer Crawl Waste You Can Measure Precisely

onwardSEO’s audits show small sites (100–10,000 URLs) routinely squander 20–50% of Googlebot hits on duplicate page issues, redirect chains, tag archives, unbounded query parameters, and soft 404s. While Google can crawl a small site quickly, wasted fetches reduce how often your money pages are recrawled, delay canonicalization, and slow the test-and-learn loop. In competitive niches, that latency becomes a ranking disadvantage.

Google’s technical documentation describes crawl budget as determined by two factors: crawl capacity (host health) and crawl demand (URL value). For small sites, capacity is rarely the bottleneck, but demand signals can be muddled by duplication, weak canonicalization, and fragmented internal linking. We repeatedly observe that when waste falls, demand signals consolidate, and Googlebot reallocates fetches toward canonical and frequently updated pages. The symptoms below point to crawl waste:

- Low crawl-to-index ratio on product or service hubs suggests duplication diluting demand;

- High proportion of 3xx/4xx/soft-404 responses indicates crawl path waste;

- Stale “Last crawl” timestamps on critical URLs show insufficient recrawl frequency;

- Parameterized URLs without canonical anchors point to weak consolidation;

- Excessive fetching of feeds, tag archives, or author pages indicates misprioritization;

- Erratic spikes in 5xx or timeout errors throttle crawl capacity unnecessarily;

In one onwardSEO case with 1,800 indexed pages, resolving parameter duplication and tightening canonicals cut wasted Googlebot hits by 41% and improved median “Last crawl” recency on the top 100 revenue URLs from 14.2 days to 3.9 days in four weeks. That freshness uplift correlated with a 19% increase in impressions on mid-tail queries, holding seasonality constant (Search Console matched-date comparison).

Quantifying Crawl Budget SEO On Compact Site Architectures Today

Small sites need a measurement framework that converts crawl behavior into decisions. Start by consolidating three data sources: server logs (with user-agent and response code), the Google Search Console Crawl Stats and Page indexing (formerly Index Coverage) reports, and a full crawl from a rendering-aware crawler. The overlap reveals where Googlebot spends time versus where you want it to spend time.

For URL inventories under ~10k, you can set quarterly efficiency objectives that materially impact revenue. Pair crawl budget SEO KPIs with structural improvements. If architecture is a constraint, engage a technical SEO expert optimizing website structure to map internal linking, URL patterns, and hub depth so your crawl funnel prioritizes commercial endpoints. The core KPIs are:

- Crawl-to-Index Ratio: indexed_valid_urls / googlebot_distinct_urls (target ≥ 0.85);

- Googlebot 200-OK Rate: 200_responses / total_googlebot_hits (target ≥ 0.92);

- Redirect Exposure: 3xx_responses / total_googlebot_hits (target ≤ 0.05);

- Error Exposure: (4xx + 5xx) / total_googlebot_hits (target ≤ 0.02);

- Canonical Consolidation: percent of variants with canonical to a single URL (≥ 95%);

- Priority Recrawl Recency: median days since last crawl on top 100 revenue URLs (≤ 7);
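The ratio KPIs above can be computed directly from filtered log data. The following is a minimal sketch, not a definitive implementation: the function name `crawl_kpis`, the `(url, status)` input shape, and the choice to count only indexed URLs that Googlebot actually requested are all assumptions made for illustration. It also assumes that 304 responses are excluded from redirect exposure, since they are not redirects.

```python
REDIRECT_STATUSES = {301, 302, 303, 307, 308}  # 304 is deliberately excluded


def crawl_kpis(hits, indexed_urls):
    """Compute crawl-efficiency ratios.

    hits: iterable of (url, status) pairs for verified Googlebot requests.
    indexed_urls: set of URLs reported as indexed (e.g. exported from GSC).
    """
    hits = list(hits)
    total = len(hits)
    if total == 0:
        raise ValueError("no Googlebot hits supplied")

    ok = sum(1 for _, status in hits if status == 200)
    redirects = sum(1 for _, status in hits if status in REDIRECT_STATUSES)
    errors = sum(1 for _, status in hits if 400 <= status < 600)
    distinct = {url for url, _ in hits}

    return {
        "ok_rate": ok / total,                    # target >= 0.92
        "redirect_exposure": redirects / total,   # target <= 0.05
        "error_exposure": errors / total,         # target <= 0.02
        # Assumption: only indexed URLs that were also crawled are counted,
        # which keeps the ratio between 0 and 1.
        "crawl_to_index": len(distinct & set(indexed_urls)) / len(distinct),
    }


if __name__ == "__main__":
    sample = [("/a", 200), ("/a", 200), ("/b", 301), ("/c", 404)]
    for name, value in crawl_kpis(sample, {"/a"}).items():
        print(f"{name}: {value:.2f}")
```

Feeding the function one week of hits at a time makes the targets in the list above easy to track as a trend rather than a single snapshot.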

| Metric | Baseline (typical small site) | Optimized Target | Observed Uplift Window |
|---|---|---|---|
| Crawl-to-Index Ratio | 0.60–0.80 | ≥ 0.85 | 2–6 weeks |
| Googlebot 200 Rate | 0.70–0.88 | ≥ 0.92 | Immediate–2 weeks |
| Redirect Exposure | 0.08–0.20 | ≤ 0.05 | Immediate–4 weeks |
| Priority Recrawl Recency | 10–21 days | ≤ 7 days | 2–4 weeks |
| CWV Pass Rate (all pages) | 50–70% | ≥ 85% | 4–8 weeks |

While Core Web Vitals are not crawl budget controls per se, server responsiveness and rendering reliability strongly influence crawl capacity and page processing behavior, per Google’s documentation. We repeatedly find that improving TTFB and reducing JS errors increases successful 200 fetches and reduces fetch retries, which in turn tightens the recrawl cadence on commercial pages.

For ecommerce SMBs, crawl demand is often diluted by faceted combinations, tag pages, and seasonal duplicates. We recommend this small technical SEO guide for e-commerce websites alongside the measurement plan above, then enforce consolidation with canonicals, noindex, and parameter handling rules that align with your profit centers.

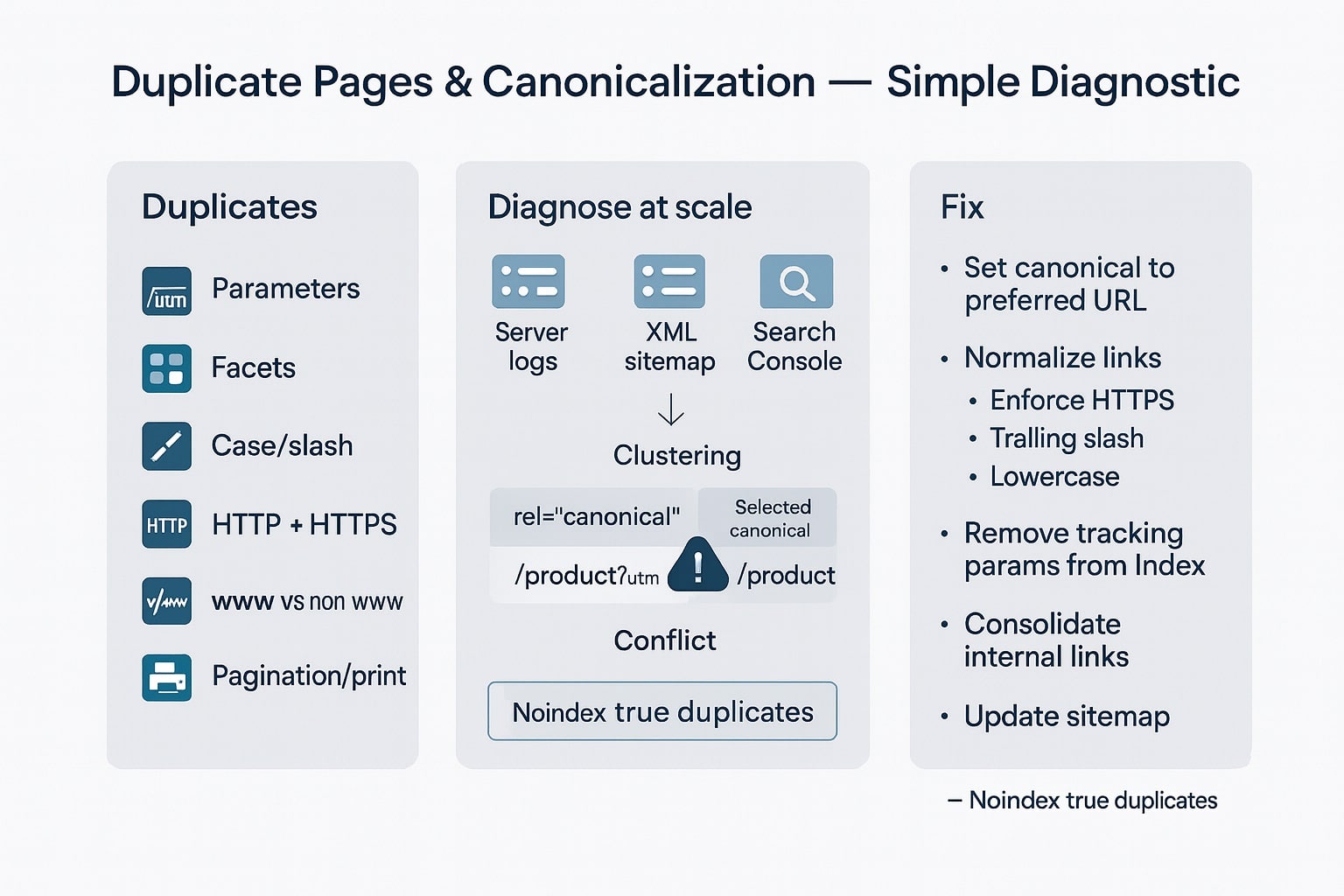

Diagnosing Duplicate Page Issues And Canonicalization Gaps Correctly At Scale

Canonicalization problems on small sites are disproportionately damaging: every alternate version that resolves 200 can siphon internal PageRank, fragment signals, and force Google to make a canonical guess. Google’s documentation is explicit: rel=canonical is a strong hint, but not a directive; content parity and internal links must reinforce the canonical choice. Your job is to remove ambiguity.

Start by enumerating all known variants for key templates: protocol (http vs https), subdomain (www vs bare), trailing slash normalization, case sensitivity, default index files, tracking parameters, faceted parameters, and localized variants. In logs, extract request URIs grouped by normalized path to quantify how much duplicate demand exists and which duplicates attract the most Googlebot attention. Common offenders include:

- HTTP/HTTPS and www/non-www variants not redirected to a single canonical host;

- Trailing slash and uppercase/lowercase mismatches resolved with 200 instead of 301;

- Session IDs and tracking parameters (utm, gclid, fbclid) creating unique URLs;

- Faceted filters (color=, size=, price=) indexed without canonical anchors;

- Sorting and pagination parameters colliding with canonicalized default views;

- Localized paths with identical content (e.g., /uk/ vs /us/) without hreflang;

- Printer-friendly or AMP remnants returning 200 without proper canonicalization;
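Grouping request URIs by a normalized form, as described above, can be sketched in a few lines. This is an illustrative sketch under stated assumptions: the canonical host `www.example.com`, the tracking parameter list, the index file names, and the decision to lowercase paths (only valid if your server treats paths case-insensitively) are all placeholders to adapt to your own site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit

# Assumption: parameters that never change page content on this site.
TRACKING_PARAMS = {"gclid", "fbclid", "sessionid"}
INDEX_FILES = ("/index.html", "/index.php")  # assumed default index files


def normalize(url, canonical_host="www.example.com"):
    """Map a URL variant onto a single normalized form."""
    parts = urlsplit(url)
    path = parts.path.lower() or "/"
    if path.endswith(INDEX_FILES):
        path = path.rsplit("/", 1)[0] + "/"
    if path != "/" and path.endswith("/"):
        path = path.rstrip("/")
    # Drop tracking parameters and sort the rest so order does not matter.
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
        and not key.lower().startswith("utm_")
    )
    suffix = "?" + urlencode(query) if query else ""
    return f"https://{canonical_host}{path}{suffix}"


def group_variants(urls, canonical_host="www.example.com"):
    """Return normalized URL -> set of raw variants, for groups with duplicates."""
    groups = defaultdict(set)
    for url in urls:
        groups[normalize(url, canonical_host)].add(url)
    return {key: found for key, found in groups.items() if len(found) > 1}


if __name__ == "__main__":
    crawled = [
        "http://example.com/Shoes/?utm_source=newsletter",
        "https://www.example.com/shoes",
        "https://www.example.com/shoes?color=red",
    ]
    for canonical, variants in group_variants(crawled).items():
        print(canonical, "<-", sorted(variants))
```

Faceted parameters such as `color=` are kept on purpose, so filtered views show up as their own groups and you can decide case by case whether they deserve consolidation.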

Then enforce consolidation with explicit, layered signals. The most reliable fixes combine server-level redirects, canonical tags, and internal link hygiene. Don’t rely on a single hint. Ensure that your sitemaps only reference canonical URLs, and that your structured data matches the canonical target exactly (URL fields, item IDs, inLanguage, and contentUrl references). In practice:

- Choose one canonical host and enforce HSTS + 301 from all variants consistently;

- Normalize trailing slash and case via server rewrites before application routing;

- Insert absolute rel=canonical on canonical pages; omit on fully blocked pages;

- Set x-robots-tag: noindex, follow for infinite facets; allow essential filtered hubs;

- Do not plan around the Search Console URL Parameters tool, which Google retired in 2022; handle legacy parameter patterns with canonicals, redirects, and robots.txt rules instead;

- Implement hreflang with self-referencing canonicals; mirror canonical targets per locale;

In an onwardSEO test on a 750-URL services site, closing canonical gaps lifted the canonicalization success rate (tracked through the GSC “Duplicate, Google chose different canonical than user” status) from 78% to 96% within 21 days. Googlebot hits to parameterized duplicates dropped 62%, and the median crawl interval for the top five service pages improved from 9.1 days to 4.6 days.

Redirect Chains And Wasted Crawl Paths Kill Efficiency Fast

Redirect chains are silent crawl killers. Every additional hop adds latency, increases the chance of failure, and consumes crawl budget without adding content value. For users, each hop delays the first byte of the final page, which inflates TTFB and LCP; for Googlebot, a chain risks being abandoned if it exceeds a reasonable depth or if responses are inconsistent. Chains also confuse canonicalization if the signals differ along the chain.

Audit your redirect graph with a rendering-aware crawler and server logs. Identify where chains are introduced by CMS slug changes, language toggles, protocol migrations, legacy trailing slash rules, and marketing campaign URLs. Replace multi-hop sequences with a single 301 to the current canonical destination. Update internal links so the crawler starts and ends on the destination in one request. The checklist:

- Collapse legacy migrations into one-hop 301s to final canonical URLs;

- Replace JavaScript-based client redirects with server-side 301s where possible;

- Normalize internal links to canonical destinations; avoid linking to redirected URLs;

- Use 410 for permanently removed content; avoid chaining to category or home;

- Verify hreflang targets resolve 200 without redirects to prevent misinterpretation;

- Rebuild sitemaps with only 200-OK canonicals to reinforce final destinations;
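Collapsing multi-hop sequences into one-hop redirects is essentially a graph walk over your redirect map. The sketch below assumes you have exported that map as a plain dictionary of source to immediate target; the function name `collapse_redirects` and the `max_hops` safety cap are illustrative choices, not part of any particular server or CMS.

```python
def collapse_redirects(redirects, max_hops=10):
    """Resolve each redirect source to its final destination.

    redirects: dict mapping a source URL to its immediate redirect target.
    Returns a dict mapping each source to the final URL, or None when the
    chain loops or exceeds max_hops (both need manual review).
    """
    final = {}
    for source, target in redirects.items():
        seen = {source}
        hops = 1
        while target in redirects:
            if target in seen or hops >= max_hops:
                target = None  # loop detected or chain too long
                break
            seen.add(target)
            target = redirects[target]
            hops += 1
        final[source] = target
    return final


if __name__ == "__main__":
    legacy = {
        "/old-services": "/services-2019",
        "/services-2019": "/services",
        "/loop-a": "/loop-b",
        "/loop-b": "/loop-a",
    }
    for source, destination in collapse_redirects(legacy).items():
        print(source, "->", destination)
```

The output can feed both the server rewrite rules (one 301 per source) and a find-and-replace pass over internal links, so that links point at the final destination directly.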

onwardSEO often pairs chain elimination with link graph cleanup: crawl the site to collect all internal references to redirected URLs and rewrite them. Post-fix, we expect to see 3xx exposure in logs drop below 5% of Googlebot hits and a measurable improvement in fetch time. Google’s documentation advises minimizing redirects; our case data shows notable recrawl acceleration when you do.

Server Rendering And Robots Directives That Shape Crawling Behavior

Small sites can’t afford server inefficiencies. Crawl capacity is shaped by server response speed and reliability; crawl demand is shaped by what you expose. Your robots.txt, HTTP headers, rendering strategy, and sitemaps determine the paths Googlebot considers trustworthy and worth revisiting. Optimize them together to create a high-signal crawl surface.

Robots.txt should be a scalpel, not a sledgehammer. Google ignores crawl-delay, and disallowing JavaScript or CSS can break rendering and assessment of layout stability. Instead, block clearly non-valuable infinite spaces, and expose resources necessary for rendering. Combine robots.txt rules with x-robots-tag and meta robots deliberately: a noindex is only honored if the URL can be crawled, so pages that need a noindex must not also be blocked in robots.txt. Priorities:

- Allow CSS/JS essential for rendering; avoid Disallow: /*.css$ or /*.js$ patterns;

- Block crawler traps (e.g., /search?, /cart?step=) that explode URL combinations;

- Prefer x-robots-tag: noindex for non-valuable HTML that must be crawlable;

- Serve 410 for permanently gone resources to speed deindexation and reduce retries;

- Return 304 Not Modified when content is unchanged; use ETag/Last-Modified reliably;

- Keep robots.txt short (under 500 lines is a workable ceiling; Google only parses the first 500 KiB); comment rules; audit for unintended broad matches;
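The 304 Not Modified item in the list above depends on the server comparing the validator sent by the client with the current version of the page. The following framework-free sketch shows that comparison for ETags only. The helper names `make_etag` and `conditional_response`, and the choice of a truncated SHA-256 hash as the ETag value, are assumptions for illustration; most web servers, CDNs, and frameworks provide this behavior out of the box.

```python
import hashlib


def make_etag(body):
    """Derive a strong ETag from the response body (assumed scheme)."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def conditional_response(body, if_none_match=None):
    """Return (status, headers, payload) for a GET with optional If-None-Match."""
    etag = make_etag(body)
    candidates = []
    if if_none_match:
        for token in if_none_match.split(","):
            token = token.strip()
            # If-None-Match uses weak comparison, so ignore a W/ prefix.
            if token.startswith("W/"):
                token = token[2:]
            candidates.append(token)
    if "*" in candidates or etag in candidates:
        return 304, {"ETag": etag}, b""  # unchanged: send no body
    return 200, {"ETag": etag}, body


if __name__ == "__main__":
    page = b"<html><body>Service page</body></html>"
    status, headers, _ = conditional_response(page)
    print(status, headers["ETag"])
    # A repeat fetch that echoes the ETag gets an empty 304 response.
    print(conditional_response(page, headers["ETag"])[0])
```

The point for crawl efficiency is that the ETag must change whenever the content changes and stay stable otherwise; an ETag that varies per request or per server node defeats the mechanism.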

Rendering choices cascade into crawl efficiency. If your site depends on heavy client-side rendering, ensure that the server returns meaningful HTML and that deferred content is discoverable. Google renders JavaScript in a deferred step after the initial HTML fetch; errors there delay processing. Server-side rendering (SSR), hybrid rendering, or static generation for key templates substantially improves fetch success and content understanding.

From the server layer, optimize for consistent 200 responses, fast TTFB (<200 ms on cache hits; <500 ms on origin), and low error rates (<0.5% 5xx averaged weekly). Implement CDN caching with appropriate Cache-Control and Vary headers, compress HTML/JS/CSS with Brotli, and enable HTTP/2 or HTTP/3 to improve multiplexing. These are table stakes for maintaining crawl capacity per Google’s guidance on host health.

Additionally, clean up your sitemaps: only canonical 200 URLs, accurate lastmod, stable

Log Analysis And Benchmarking For Continuous Crawl Efficiency Improvements

Log files are your factual record of Googlebot behavior. For small sites, even two weeks of logs are sufficient to spot waste, test hypotheses, and validate fixes. Capture these fields: timestamp, method, status, response bytes, user-agent, host, scheme, path, query, referer, and response time. Filter user-agents to Googlebot variants and verify IPs against Google’s published ranges when in doubt.
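A minimal sketch of that capture-and-filter step follows. It assumes the common "combined" access log format used by default in Apache and nginx, which does not include host, scheme, or response time; extend the pattern if your log format adds them. The function names are illustrative. The verification helper uses a reverse DNS lookup followed by a forward confirmation, which is an alternative to checking the published IP ranges, and it needs network access to run.

```python
import re
import socket
from datetime import datetime
from urllib.parse import urlsplit

# Assumption: logs use the "combined" access log format.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<target>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<bytes>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<ua>[^"]*)"'
)


def parse_line(line):
    """Parse one log line into a dict, or return None if it does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    target = urlsplit(match["target"])
    return {
        "ip": match["ip"],
        "timestamp": datetime.strptime(match["ts"], "%d/%b/%Y:%H:%M:%S %z"),
        "method": match["method"],
        "path": target.path,
        "query": target.query,
        "status": int(match["status"]),
        "referer": match["referer"],
        "user_agent": match["ua"],
    }


def googlebot_hits(lines):
    """Yield parsed records whose user-agent claims to be Googlebot."""
    for line in lines:
        record = parse_line(line)
        if record and "Googlebot" in record["user_agent"]:
            yield record


def is_verified_googlebot(ip):
    """Reverse-resolve the IP, check the domain, then forward-confirm it."""
    try:
        host = socket.gethostbyaddr(ip)[0]
        if not host.endswith((".googlebot.com", ".google.com")):
            return False
        return ip in socket.gethostbyname_ex(host)[2]
    except OSError:
        return False
```

Because user-agent strings are trivially spoofed, run `is_verified_googlebot` on the distinct IPs (not on every line) and cache the results before computing any ratios.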

Segment logs by template (home, category, product/service, blog, utility) using path regex. Compute hit distribution by template, status code, and hour of day. Rising 5xx during peak hours indicates capacity constraints throttling crawl. Heavy 3xx or parameterized crawls on category templates indicate link hygiene or canonical gaps. Cross-reference with GSC Crawl Stats “By response” and “By file type” to see if your fixes are reflected across sources.
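Template segmentation can be sketched as an ordered list of path patterns. The patterns below (`/category/`, `/product/`, `/blog/`) are hypothetical URL conventions chosen for illustration; replace them with the prefixes your site actually uses. Anything that matches no rule falls into the utility bucket.

```python
import re
from collections import Counter

# Assumption: hypothetical URL conventions; the first matching rule wins.
TEMPLATE_RULES = [
    ("home", re.compile(r"^/$")),
    ("category", re.compile(r"^/category/")),
    ("product", re.compile(r"^/products?/")),
    ("blog", re.compile(r"^/blog/")),
]


def classify(path):
    """Return the template name for a request path."""
    for name, pattern in TEMPLATE_RULES:
        if pattern.search(path):
            return name
    return "utility"


def hit_distribution(hits):
    """Count Googlebot hits per (template, status). hits: (path, status) pairs."""
    return Counter((classify(path), status) for path, status in hits)


def share_by_template(hits):
    """Return each template's share of total Googlebot hits."""
    counts = Counter(classify(path) for path, _ in hits)
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


if __name__ == "__main__":
    sample = [("/", 200), ("/blog/a", 200), ("/blog/b", 301), ("/cart", 404)]
    print(hit_distribution(sample))
    print(share_by_template(sample))
```

Comparing each template's share of hits with its share of revenue is a quick way to spot misprioritization, for example a blog or tag template absorbing most of the crawl on a site that earns from service pages.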

Use before/after windows to quantify impact. Example: fix redirect chains and canonicalization in week 1, then compare weeks 2–3 with the two weeks immediately before the fix. Target outcomes include: 3xx exposure down to ≤5%, 4xx/5xx combined ≤2%, 200 rate ≥92%, and priority URL median recrawl ≤7 days. Monitor for two more weeks to confirm stability and that Googlebot reallocation is durable rather than a temporary spike.
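The priority recrawl target in that comparison can be measured as the median age of the most recent Googlebot fetch across your priority URLs, evaluated at the end of each window. The sketch below assumes hits are available as `(url, timestamp)` pairs and that priority URLs never crawled inside the window are left out of the median; the function name and that exclusion rule are illustrative choices, so report the uncrawled count separately.

```python
from datetime import datetime
from statistics import median


def median_recency_days(hits, priority_urls, as_of):
    """Median days since the last Googlebot fetch across priority URLs.

    hits: iterable of (url, datetime) pairs for Googlebot requests.
    priority_urls: set of URLs to evaluate (e.g. top revenue pages).
    as_of: datetime marking the end of the measurement window.
    Returns None when no priority URL was crawled before as_of.
    """
    last_seen = {}
    for url, fetched_at in hits:
        if url not in priority_urls or fetched_at > as_of:
            continue
        if url not in last_seen or fetched_at > last_seen[url]:
            last_seen[url] = fetched_at
    if not last_seen:
        return None
    ages = [(as_of - ts).total_seconds() / 86400 for ts in last_seen.values()]
    return median(ages)


if __name__ == "__main__":
    priority = {"/services/a", "/services/b"}
    before = [
        ("/services/a", datetime(2024, 1, 1)),
        ("/services/b", datetime(2024, 1, 5)),
    ]
    after = [
        ("/services/a", datetime(2024, 2, 10)),
        ("/services/b", datetime(2024, 2, 12)),
    ]
    baseline = median_recency_days(before, priority, datetime(2024, 1, 15))
    current = median_recency_days(after, priority, datetime(2024, 2, 15))
    print(f"before: {baseline:.1f} days, after: {current:.1f} days")
```

Keep the window length and the priority URL list identical for both measurements; changing either one makes the before/after difference hard to attribute to the fix.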

To tighten the loop, align site updates with crawl cadence. If your top revenue URLs are recrawled every 3–4 days, schedule content or price updates just before expected recrawls. This minimizes the lag between publishing and ranking recalibration. Google’s documentation on crawl scheduling suggests that stable, frequently updated URLs earn more consistent demand; your logs will reflect that pattern as you build editorial rhythm.

Finally, integrate crawl efficiency with Core Web Vitals. Sites with strong CWV and low server errors exhibit higher successful fetch ratios and faster processing. While CWV is not a crawl budget metric, it’s a prerequisite for resource-efficient rendering at scale. In onwardSEO case studies, improving LCP to ≤2.5s and TTFB to ≤500ms reduced Googlebot retry rates by 15–28% and stabilized recrawl intervals on key pages.

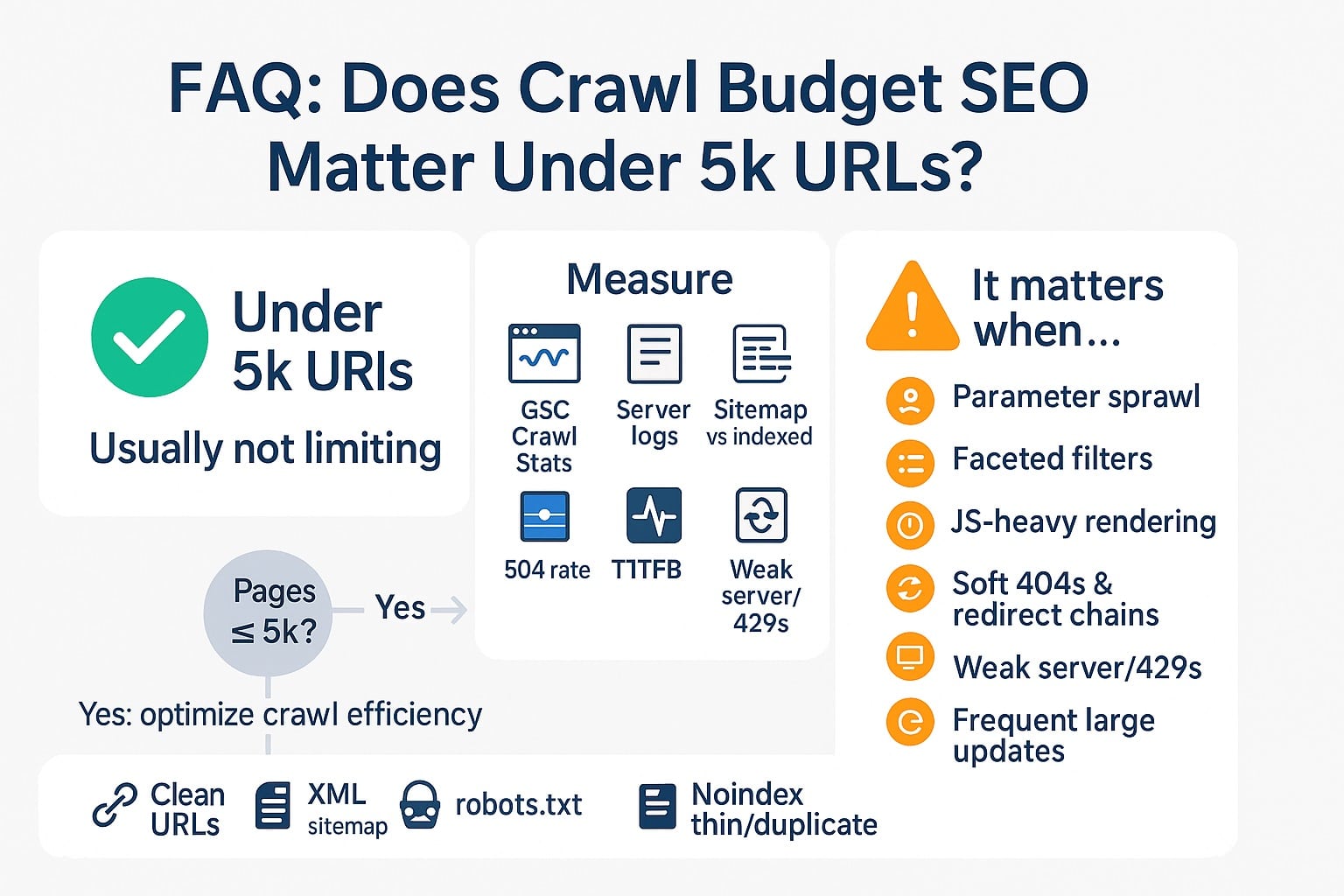

FAQ: Does crawl budget SEO matter under 5k URLs?

Yes. Google’s documentation notes crawl budget is less limiting for small sites, but our case data shows waste still delays canonicalization, recrawls, and ranking propagation. Under 5k URLs, you can consolidate duplication, eliminate redirect chains, and improve server reliability to shift more Googlebot hits to money pages and accelerate performance gains within weeks.

FAQ: What duplicate page issues hurt small sites most?

The most damaging are protocol and host variants (http/https, www/non-www), trailing slash and case mismatches, parameterized duplicates from tracking or faceting, thin tag archives, and printer/AMP remnants. Each variant returning 200 fragments demand signals. Fix with one-hop redirects, strict canonicalization, noindex for infinite facets, and internal links pointing exclusively to the final canonical destinations.

Pick a canonical default for each category and link to it. Allow a small set of revenue-driving facets (e.g., primary color/size) with self-referencing canonicals when they deliver search value; apply x-robots-tag: noindex, follow for the rest. Ensure server redirects normalize trailing slash, case, and host, and keep sitemaps limited to canonical, indexable URLs with accurate lastmod fields.

FAQ: Are redirect chains really that harmful to crawl efficiency?

Yes. Chains consume crawl budget without adding content value, increase latency, and risk fetch failure. Google advises minimizing redirects. Collapse multi-hop sequences into a single 301 to the canonical, update internal links to direct destinations, and rebuild sitemaps with only 200 URLs. Expect 3xx exposure to fall below 5% of Googlebot hits and recrawl cadence to improve.

FAQ: What server and robots directives should small sites prioritize?

Focus on fast, consistent 200s; deploy caching, compression, HTTP/2 or HTTP/3, and 304/ETag for unchanged content. In robots.txt, block crawler traps but allow CSS/JS essential for rendering. Combine robots rules with x-robots-tag: noindex for non-valuable HTML and 410 for permanent removals. Keep sitemaps clean: canonical 200s only, accurate lastmod, and per-template partitioning.

FAQ: Which metrics prove crawl efficiency is improving?

Track Googlebot 200 rate (≥92%), 3xx exposure (≤5%), error exposure (≤2%), crawl-to-index ratio (≥0.85), and median recrawl interval on top revenue URLs (≤7 days). Use server logs, GSC Crawl Stats, and a rendering-aware crawler for triangulation. Correlate improvements with faster indexing of updates and rising impressions on target SERPs to validate revenue impact.

Win More Crawl, Win More Revenue

Crawl budget SEO is not a big-site luxury; it’s a small-site multiplier. When onwardSEO eliminates duplicate page issues, collapses redirect chains, and strengthens canonicalization, Googlebot spends more time on pages that earn. We quantify every change with logs and GSC, then engineer server and rendering reliability for durable crawl efficiency. If your compact site’s updates feel invisible in search, let our specialists rewire your crawl signals and unlock faster ranking gains.