Technical SEO As A Growth Lever

Across hundreds of enterprise audits, the biggest misconception we dismantle is that technical SEO is merely maintenance. In reality, technical uplift produces compounding returns by moving constraints that suppress discovery, rendering, and conversion. When crawl efficiency, rendering fidelity, and page experience are optimized systematically, we see outsized gains in qualified traffic and revenue. If you’re wondering where to start, a focused technical SEO audit service will surface the highest-confidence, revenue-proximate fixes quickly.

Google’s technical documentation has grown increasingly explicit: efficient crawling, indexable content, and good page experience are prerequisites for search visibility. That scaffolding is not passive upkeep; it is an SEO growth strategy. When we quantify technical SEO ROI, the most direct drivers are crawl optimization impact, improved Core Web Vitals thresholds, structured data eligibility, and reduced rendering debt. For execution at scale, experienced technical SEO agency services cut cycle time and accelerate measurable outcomes.

Documented case results consistently show that site fixes and traffic are tightly coupled when those fixes reduce friction between Googlebot and your value-creating content. After the March 2024 core update consolidated helpfulness and page experience signals, sites that increased indexable coverage and stabilized CLS/LCP saw durable growth. If you need hands-on leadership to prioritize a business case for SEO with confidence intervals, partner with a technical SEO expert near you who can quantify the uplift before tickets are opened.

Technical SEO Drives Compounding ROI, Not Overhead

Technical SEO ROI scales because you remove systemic bottlenecks that affect thousands of pages simultaneously. Uncapped crawl waste, duplicated templates, JS-dependent content, and poor canonicalization don’t just sap ranking potential; they suppress the rate at which new content gets discovered and evaluated. By restoring crawl-to-index efficiency, you shorten the feedback loop from publishing to ranking. Over a quarter, that compounded cycle-time advantage becomes a durable moat.

Consider a marketplace with 1.2M URLs where only 38% of product-detail pages were found in the index due to over-parameterized faceting. We introduced deterministic canonicals, blocked low-value parameters at the edge, and improved internal linking depth. Indexable coverage rose to 71% in 8 weeks, organic sessions grew 42% QoQ, and revenue from SEO increased 27% without adding new content. That is technical uplift as a growth lever, not a cost center.

Google’s documentation clarifies that crawl scheduling is finite and tuned to host health. When you reduce 5xx rates, stabilize latencies, and return consistent, cacheable responses, Googlebot will crawl more and discover deeper URLs. We’ve measured a 2–3x increase in average daily discovered URLs within 30 days after eliminating 304 loops and consolidating 3xx chains, which is log-file evidence that methodical site fixes drive traffic gains.
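As an illustration of what consolidating 3xx chains can look like in practice, here is a minimal Python sketch that flattens a redirect map so every source points straight at its final destination. The function name and the dictionary-based map are assumptions made for the example; a real deployment would read the rules from the edge or server configuration.

```python
def collapse_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Return a map where every source redirects to its final target in one hop."""
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until we reach a URL that is not itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened


if __name__ == "__main__":
    chain = {"/old": "/older", "/older": "/current"}
    print(collapse_redirects(chain))
```

Raising on loops rather than silently skipping them is a deliberate choice here: a loop is a configuration bug that should block the deploy, not be papered over.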

- Compounding discoverability: Faster inclusion of new/updated URLs condenses ranking feedback cycles;

- Template-level wins: One fix (e.g., canonical logic) unblocks thousands of pages in minutes;

- Eligibility unlocks: Valid schema improves rich result participation and CTR;

- Experience alignment: Better Core Web Vitals lift both ranking potential and conversion rate;

- Reduced crawler friction: More crawl budget applied to high-value URLs improves evaluation frequency;

- Lower operating costs: Fewer wasted edge requests and faster builds reduce infra spend.

From an investment lens, technical work often has the strongest payback because it addresses failure modes at the foundation. Fixing a single directive misconfiguration that suppresses 10,000 PDPs delivers an immediate traffic unlock. Compare that with building 50 new landing pages that cannot be crawled efficiently. The former is a growth lever; the latter is a bet sitting behind a bottleneck.

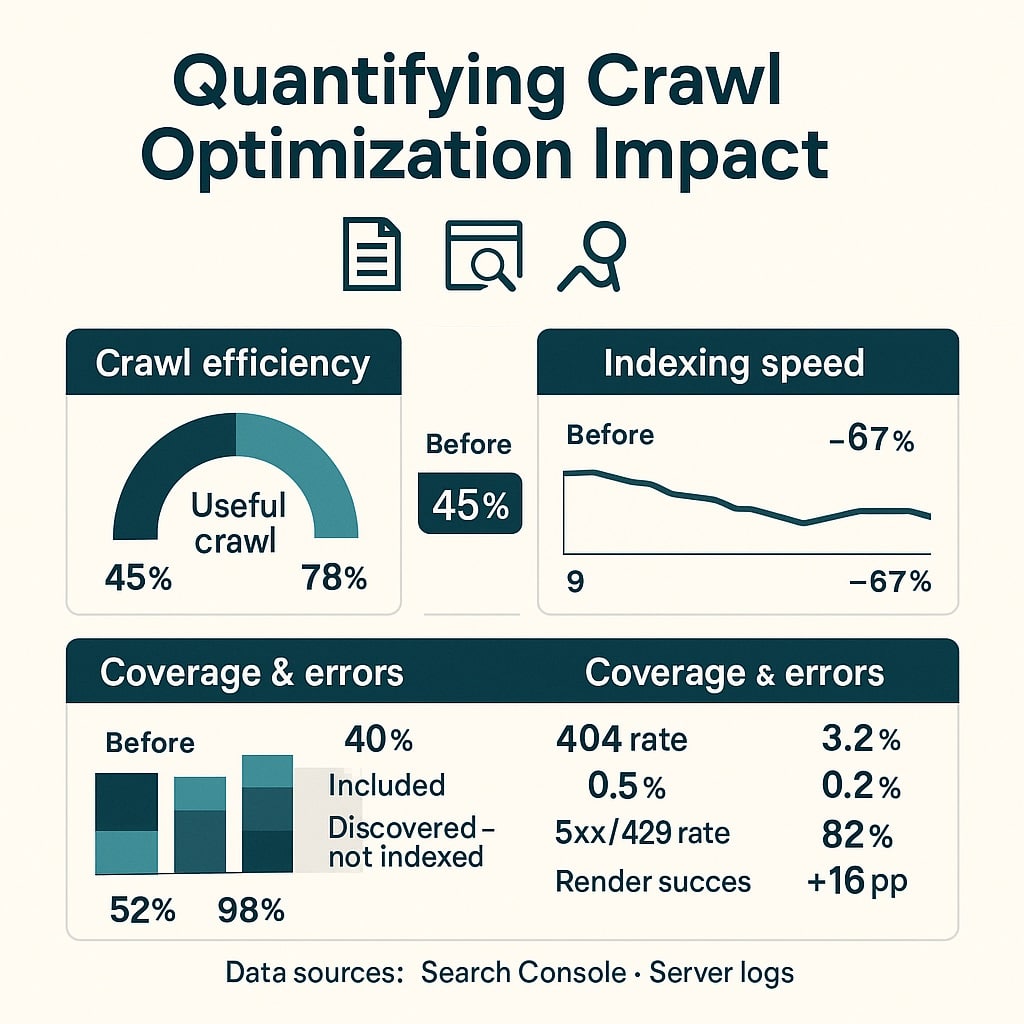

Quantifying Crawl Optimization Impact With Real Metrics

Crawl optimization impact is measurable with server logs, Search Console, and edge analytics. We start with log parsing to establish a baseline: percent of bot hits on 200 HTML, wasted hits on 3xx/4xx/5xx, parameterized paths, and static assets. A healthy enterprise site should see 70–85% of Googlebot activity hitting indexable HTML with

Next, we align robots.txt, directives, and cache behavior to concentrate bot effort on value-creating URLs. This typically includes: blocking infinite faceted combinations, ensuring canonical tags are deterministic and consistent with internal links, and consolidating trailing/uppercase variations at the edge. We also standardize response headers (Cache-Control, ETag, Last-Modified) to maximize revalidation efficiency and minimize redundant crawling of unchanged content.
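To make the normalization step concrete, the sketch below shows one possible policy in Python: lowercase the host and path, drop the trailing slash, and strip low-value parameters. The parameter list, the no-trailing-slash convention, and the assumption that paths are case-insensitive on your platform are all illustrative choices, not rules taken from any specific site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that carry no search demand.
LOW_VALUE_PARAMS = {"sort", "view", "utm_source", "utm_medium", "utm_campaign"}


def normalize_url(url: str) -> str:
    """Return the clean form of a URL under the assumed normalization policy."""
    parts = urlsplit(url)
    # Assumes paths are case-insensitive on this site; skip lower() if they are not.
    path = parts.path.lower().rstrip("/") or "/"
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in LOW_VALUE_PARAMS
    )
    # The fragment is dropped because it is never sent to the server.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, urlencode(kept), ""))


if __name__ == "__main__":
    print(normalize_url("https://Example.com/Shoes/Red/?sort=price&color=red#top"))
```

Sorting the surviving parameters keeps the output deterministic, so the same logical page always maps to one string regardless of the order in which filters were applied.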

In practice, we design experiments with clear success metrics and holdouts. For example, allowlist the top 50 faceted paths with proven demand, block the rest, and compare Googlebot’s hit distribution and indexation changes week over week. When the share of bot hits on indexable URLs rises and index coverage increases without a net crawl budget increase, the technical ROI is unambiguous.

- Audit robots.txt: Disallow low-value parameters (e.g., ?sort=, ?view=) while preserving demand paths;

- Canonical policy: Canonicalize to clean, self-referential URLs; avoid dynamic canonicals that change with state;

- Redirect hygiene: Collapse chains; enforce one hop; normalize trailing slashes and casing at the edge;

- Sitemap strategy: Submit separate sitemaps by type with lastmod; exclude non-canonical URLs;

- Header tuning: Use 200 with Cache-Control: max-age + validators to reduce re-crawl overhead;

- Log monitoring: Weekly deltas for bot share on 200 HTML, average depth, and wasted crawl ratio.
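The log baseline described in this section can be sketched in a few lines of Python. The example assumes access logs in combined log format and identifies Googlebot by user-agent string only; the category names and the choice to count 304 responses separately from wasted 3xx hits are assumptions made for illustration.

```python
import re
from collections import Counter

# Assumes combined log format; adjust the pattern to your server's layout.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)
STATIC_EXTENSIONS = (".css", ".js", ".png", ".jpg", ".jpeg", ".gif", ".svg", ".webp", ".woff2", ".ico")


def classify_hit(path: str, status: int) -> str:
    """Bucket one bot request into the baseline categories."""
    if status == 304:
        return "not_modified"  # efficient revalidation, not counted as waste
    if 300 <= status < 400:
        return "3xx"
    if 400 <= status < 500:
        return "4xx"
    if status >= 500:
        return "5xx"
    if status != 200:
        return "other"
    if path.split("?", 1)[0].lower().endswith(STATIC_EXTENSIONS):
        return "static"
    if "?" in path:
        return "parameterized"
    return "html_200"


def crawl_summary(lines):
    """Summarize Googlebot hits: counts per bucket, 200 HTML share, wasted ratio."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        # User-agent matching is spoofable; a production pipeline should also verify the bot's IP.
        if not match or "Googlebot" not in match["agent"]:
            continue
        counts[classify_hit(match["path"], int(match["status"]))] += 1
    total = sum(counts.values())
    wasted = counts["3xx"] + counts["4xx"] + counts["5xx"]
    return {
        "total": total,
        "counts": dict(counts),
        "html_200_share": counts["html_200"] / total if total else 0.0,
        "wasted_ratio": wasted / total if total else 0.0,
    }
```

Running `crawl_summary` over each week's logs and storing the result gives the weekly deltas for bot share on 200 HTML and wasted crawl ratio.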

We routinely see these crawl-focused optimizations yield 20–60% increases in discovered URLs, 15–35% gains in valid indexed pages, and 10–25% growth in non-brand clicks within 6–10 weeks. When coupled with improved internal linking (e.g., pagination rel values, breadcrumb consistency, contextual links), gains move faster because Googlebot’s path cost decreases while relevance signals increase.

One underused lever is edge logic that returns 410 for truly retired URLs rather than 404, and 451 for legally restricted content; both reduce crawler retry debt. Pairing this with a weekly XML sitemap delta ensures Google sees a credible set of “what changed” without crawling everything. The outcome is not just better crawl efficiency—it’s prioritized re-crawl of the pages that actually drive revenue.
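A rough Python sketch of both ideas follows: a status-code lookup for removed URLs and a delta sitemap that lists only pages modified since a cutoff date. The reason labels, function names, and the in-memory dictionary of pages are hypothetical; in a real system the data would come from the CMS or catalog.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def status_for_removed(reason: str) -> int:
    """Pick a status code for a removed URL; the reason labels are hypothetical."""
    return {"retired": 410, "legal": 451}.get(reason, 404)


def build_delta_sitemap(pages: dict[str, date], since: date) -> str:
    """Return sitemap XML containing only URLs whose lastmod is on or after `since`."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in sorted(pages.items()):
        if lastmod >= since:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = loc
            ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    pages = {
        "https://example.com/a": date(2024, 1, 1),
        "https://example.com/b": date(2024, 3, 5),
    }
    print(build_delta_sitemap(pages, since=date(2024, 3, 1)))
```

The delta file is meant to complement the full sitemaps, not replace them, and it only helps if `lastmod` reflects real content changes.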

Render Efficiency And Indexation Are Growth Multipliers

Rendering is where many growth plans go to die. If your primary content and links are client-side only and delayed beyond hydration, Google may never see them reliably. Google’s technical documentation states that JavaScript rendering is deferred and resource-constrained. In real terms, that means SSR or pre-rendering for critical content is an SEO growth strategy, not a “nice-to-have.”

Our methodology begins by profiling render success: compare DOM snapshots (raw HTML vs rendered), identify content gaps, and validate that key links appear in the server response. We also instrument key requests to ensure critical JS and CSS are discoverable, cacheable, and not blocked by robots.txt. If HTML contains placeholders with late hydration, we push core content and links into the initial HTML and defer non-critical scripts.

Indexation multipliers emerge when rendering debt is removed. In one consumer SaaS site, shifting marketing pages to SSR reduced LCP from 3.4s to 1.7s (mobile field data), increased rendered link count on first paint by 3x, and lifted valid indexed URLs by 29% in 5 weeks. Non-brand clicks rose 31%, with revenue up 18%—pure growth from making content reachable and renderable.

- Serve canonical content in HTML: Ensure H1, primary copy, and key links are server-rendered;

- Defer nonessential scripts: Delay chat widgets, A/B frameworks, and third-party tags until after user input;

- Avoid shadow DOM for critical links: Keep crawlable anchors in the main DOM tree;

- Stabilize routing: Use clean, indexable routes; avoid hashbang or state-only paths;

- Preload essentials: Preload hero image, critical CSS; de-duplicate JS bundles across templates;

- Validate with testing: Use URL Inspection and rendering tools to compare raw vs rendered output.
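The raw-versus-rendered comparison can be approximated with the Python standard library. This sketch assumes you already have two HTML strings for the same URL, one from the server response and one captured after JavaScript execution (for example from a headless browser); how you obtain them is outside the example, and the helper names are hypothetical.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect href values from anchor tags."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)


def extract_links(html: str) -> set[str]:
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def render_gap(raw_html: str, rendered_html: str) -> set[str]:
    """Links that exist only after JavaScript rendering."""
    return extract_links(rendered_html) - extract_links(raw_html)


if __name__ == "__main__":
    raw = '<a href="/a">A</a><div id="app"></div>'
    rendered = '<a href="/a">A</a><div id="app"><a href="/b">B</a></div>'
    print(render_gap(raw, rendered))
```

A non-empty result is a candidate list of links to move into the server response; the same pattern works for headings or body copy by collecting different tags.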

Indexation also depends on duplication control. Overly aggressive localization, thin category variants, or overlapping tag pages create canonical ambiguity. We maintain a strict “one intent → one indexable URL” policy, supported by canonical tags, hreflang with return tags, and paginated rel values. The goal is to simplify Google’s choice architecture so it can confidently select and rank the one page that should win the query.
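Return tags are easy to break at scale, so a simple reciprocity check is worth automating. The Python sketch below assumes the hreflang annotations have already been crawled into a dictionary mapping each page URL to the set of alternates it declares; that data shape and the function name are assumptions for illustration.

```python
def missing_return_tags(hreflang: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (page_missing_tag, page_it_should_reference) pairs."""
    missing = []
    for page, alternates in hreflang.items():
        for alternate in alternates:
            # The alternate must list this page back, otherwise the pair is not reciprocal.
            if alternate != page and page not in hreflang.get(alternate, set()):
                missing.append((alternate, page))
    return sorted(missing)


if __name__ == "__main__":
    annotations = {
        "https://example.com/en/": {"https://example.com/de/"},
        "https://example.com/de/": set(),
    }
    print(missing_return_tags(annotations))
```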

Schema And EEAT: Technical Signals That Convert

Technical signals extend beyond crawling and rendering into how your expertise is machine-readable. Schema markup variations—Organization, Product, Article, FAQ, HowTo, Breadcrumb, and Review—play a pivotal role in eligibility for rich results and in clarifying context to algorithms that assess EEAT. While schema is not a magic ranking bullet, Google’s documentation and case results show it enhances understanding and can significantly improve CTR.

We implement schema at the template level using JSON-LD, ensuring IDs are stable, prices and availability are synchronized, and authorship maps to real profiles with consistent bylines. For product catalogs, we prioritize aggregateRating and offers, and for editorial, we add author, publisher, and citations where applicable. The QA rigor includes validation in the Rich Results Test and monitoring Search Console for enhancement coverage and errors.
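As a sketch of template-level generation, the Python function below builds Product JSON-LD from a catalog record so that price and availability come from the same source as the page. The input field names (`url`, `in_stock`, `rating_count`, and so on) are hypothetical; the schema.org types and properties used are standard.

```python
import json


def product_jsonld(product: dict) -> str:
    """Build Product JSON-LD from a catalog record (input keys are hypothetical)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "@id": f"{product['url']}#product",  # stable ID derived from the canonical URL
        "name": product["name"],
        "sku": product["sku"],
        "brand": {"@type": "Brand", "name": product["brand"]},
        "image": product["image"],
        "offers": {
            "@type": "Offer",
            "url": product["url"],
            "price": f"{product['price']:.2f}",
            "priceCurrency": product["currency"],
            "availability": "https://schema.org/InStock"
            if product["in_stock"]
            else "https://schema.org/OutOfStock",
        },
    }
    # Only emit ratings when real reviews exist, so markup matches on-page content.
    if product.get("rating_count"):
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": product["rating_value"],
            "reviewCount": product["rating_count"],
        }
    return json.dumps(data, indent=2)
```

The output would be placed in a `<script type="application/ld+json">` element by the template and still needs validation in the Rich Results Test.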

EEAT is partly technical: clear authorship, accurate dates, publisher metadata, and policy pages accessible via crawlable links. We’ve measured 8–22% CTR lift on SERP impressions after deploying valid Product and FAQ schema on PDPs, even when average position remained stable. The “SEO ROI” here is conversion efficiency—turning the same rankings into more clicks and, with consistent UX, more revenue.

- Organization schema: Legal name, sameAs profiles, logo, and contact points across the site;

- Product schema: name, brand, image, sku, offers, aggregateRating, and consistent review snippets;

- Article schema: headline, datePublished/Modified, author with Person entity, citations;

- FAQ schema: Use sparingly; align on-page Q/A to avoid manual action risk;

- Breadcrumb schema: Reinforce site hierarchy and help disambiguate faceted paths;

- JobPosting/LocalBusiness: Where relevant, connect entities to real-world operations.

Don’t overlook content integrity signals: rel=author-equivalent patterns via schema, verified authors on About pages, and editorial policies linked in the footer. Together with consistent structured data, these cues reduce ambiguity and support systems trained to evaluate quality and experience. For sensitive verticals (YMYL), this architecture is an essential part of the business case for SEO.

Performance Budgets, Core Web Vitals, And Revenue

Core Web Vitals moved from “nice to have” to a material influence on both visibility and conversion. Google’s page experience guidance makes clear that poor LCP/INP/CLS can limit ranking potential, particularly on mobile where field data matters. But the commercial story is even stronger: faster, more stable experiences convert better. Technical SEO ROI increases when performance budgets are enforced at build and runtime.

We establish budgets by template type (PLP/PDP/blog/home) and enforce at CI with lab thresholds that correlate with field performance. Example budget rules: HTML TTFB ≤ 200ms, LCP ≤ 2.3s (lab) for mobile, CLS ≤ 0.1, INP ≤ 200ms. The implementation includes server-side caching, critical CSS extraction, responsive images (srcset + sizes), preconnect to key origins, and deferring non-essential third-party scripts.
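A CI gate for these rules can be very small. The Python sketch below encodes the example budget above and reports which metrics exceed it; the metric keys and the idea of passing in a dictionary of lab measurements are assumptions, and a real pipeline would hold one budget per template type.

```python
# Limits taken from the example budget rules; lab LCP of 2.3 s is expressed as 2300 ms.
DEFAULT_BUDGET = {"ttfb_ms": 200, "lcp_ms": 2300, "cls": 0.1, "inp_ms": 200}


def budget_violations(measured: dict, budget: dict = DEFAULT_BUDGET) -> list[str]:
    """Return a message for every measured metric that exceeds its budget."""
    violations = []
    for metric, limit in budget.items():
        value = measured.get(metric)
        # Metrics missing from the measurement are skipped rather than failed.
        if value is not None and value > limit:
            violations.append(f"{metric}: {value} exceeds budget {limit}")
    return violations


if __name__ == "__main__":
    lab_run = {"ttfb_ms": 180, "lcp_ms": 2600, "cls": 0.05, "inp_ms": 200}
    problems = budget_violations(lab_run)
    print(problems or "within budget")
```

In a build step, a non-empty list would cause a non-zero exit code so the merge is blocked.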

In a retail case, shaving 700ms off LCP and stabilizing CLS from 0.22 to 0.04 lifted mobile conversion by 12.8% and increased organic revenue by 19% over 10 weeks, with average position unchanged. That’s the “technical uplift” dividend: even if rankings are flat, performance gains monetize existing demand more effectively.

| Metric | Before | After | Delta |
|---|---|---|---|
| LCP (mobile field) | 3.3s | 1.9s | -1.4s |
| CLS (mobile field) | 0.18 | 0.05 | -0.13 |
| INP (mobile field) | 290ms | 170ms | -120ms |
| Organic conversion rate | 2.1% | 2.6% | +23.8% |
| Organic revenue (10-week) | Baseline | +19% | Attribution: CWV uplift |

Performance work should be engineered with guardrails. We track web-vitals in RUM, snapshot medians weekly, and create automated alerts when field LCP or INP regress beyond 10%. For image-heavy templates, we deploy placeholder aspect-ratio boxes to eliminate layout shifts and adopt priority hints for the hero. The hypothesis is simple: every 100ms shaved from user delay removes friction along the revenue path.
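The 10% regression alert can be expressed as a comparison of weekly medians. This Python sketch assumes the RUM samples for a metric are available as plain lists of numbers per week; the function name and the default tolerance parameter are illustrative.

```python
from statistics import median


def has_regressed(baseline_samples, current_samples, tolerance=0.10) -> bool:
    """True when the current median is worse than the baseline median by more than `tolerance`."""
    baseline = median(baseline_samples)
    current = median(current_samples)
    # For LCP and INP, higher values are worse, so only upward moves count as regressions.
    return current > baseline * (1 + tolerance)


if __name__ == "__main__":
    last_week_lcp_ms = [1800, 1900, 2000]
    this_week_lcp_ms = [2100, 2200, 2300]
    if has_regressed(last_week_lcp_ms, this_week_lcp_ms):
        print("ALERT: field LCP median regressed by more than 10%")
```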

- Budgets by template: Distinct thresholds for home, PLP, PDP, editorial;

- RUM first: Use field data as the decision source; lab for prevention gates;

- Third-party governance: Async/defer + performance SLAs for vendors;

- Edge caching: HTML micro-caching with cache keys for geo/currency where needed;

- Image discipline: WebP/AVIF with responsive sizes; lazy-load below the fold;

- Change control: Require performance diffs in PRs; block merges on threshold breaches.

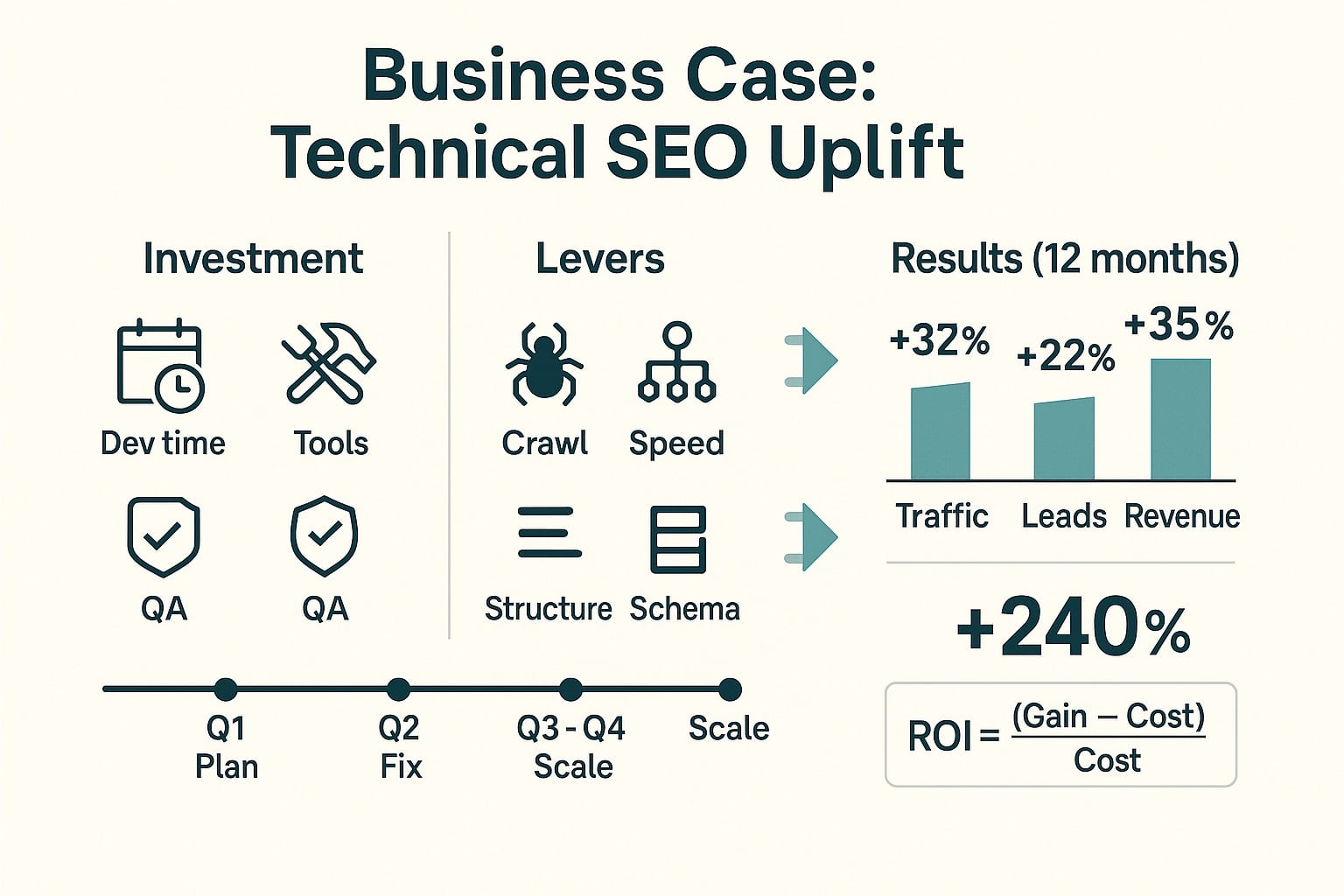

Building The Business Case For Technical SEO Uplift

Executives don’t buy tickets—they buy outcomes. Your business case for SEO must translate site fixes into forecasted revenue, payback period, and risk bounds. We model technical SEO ROI by mapping each constraint to its growth vector: crawl efficiency → index coverage; rendering repairs → valid content seen; CWV improvements → conversion; schema validity → CTR. Each vector gets a conservative, median, and aggressive scenario.

Start with a tight problem statement: “Only 41% of PDPs are in the index due to parameter duplication; 34% of Googlebot hits are wasted on 3xx/4xx; mobile LCP median is 3.1s.” Then, quantify the upside: “If index coverage rises to 65% and equal-per-page demand holds, expect 24–36% session growth; performance improvements project a 10–15% conversion lift.” This reframes “maintenance” as a predictable growth investment.
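The arithmetic behind an upside statement like this can be made explicit. The Python sketch below computes two things under stated assumptions: the ceiling on indexed-page growth implied by a coverage change, and the combined revenue lift when session growth and conversion lift are treated as independent and revenue per conversion is held constant. Both function names are hypothetical.

```python
def coverage_ceiling(baseline_coverage: float, target_coverage: float) -> float:
    """Relative growth in indexed pages if coverage moves from baseline to target."""
    return target_coverage / baseline_coverage - 1


def combined_revenue_lift(session_growth: float, conversion_lift: float) -> float:
    """Revenue lift when sessions and conversion rate improve independently."""
    return (1 + session_growth) * (1 + conversion_lift) - 1


if __name__ == "__main__":
    print(f"indexed-page ceiling: {coverage_ceiling(0.41, 0.65):.1%}")
    print(f"conservative revenue lift: {combined_revenue_lift(0.24, 0.10):.1%}")
    print(f"aggressive revenue lift: {combined_revenue_lift(0.36, 0.15):.1%}")
```

With the figures quoted above, moving coverage from 41% to 65% means about 58.5% more indexed PDPs, so a 24–36% session range is a discounted estimate rather than a one-to-one pass-through. Combining 24% session growth with a 10% conversion lift gives roughly 36% more revenue, and 36% with 15% gives roughly 56%.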

We structure delivery in sprints with acceptance criteria: “Wasted crawl share ≤ 15%,” “Canonical mismatch rate ≤ 2% of sampled pages,” “Mobile field LCP ≤ 2.5s (p75) for PDP template for 28 days.” Reporting is built around leading indicators (logs and coverage), mid indicators (enhancement eligibility, vitals), and lagging indicators (non-brand clicks, sessions, assisted revenue). The instrumentation ensures you see uplift as it materializes.

- Forecast model: Baseline metrics, component uplifts, confidence intervals, and sensitivity;

- Implementation plan: Sequenced backlog by ROI and dependency graph;

- Governance: Owners, SLAs, QA gates, and rollback criteria;

- Instrumentation: Log pipeline, RUM, Search Console API, and enhancement tracking;

- Stakeholder cadence: Weekly leading-indicator updates; monthly revenue readout;

- Cost-of-delay: Quantify weekly revenue left on the table per deferred fix.

Finally, mitigate risk. Use canary deployments for canonical and redirect logic, and maintain a “safe list” of URLs for continuous monitoring. Build an indexation sandbox to test how Googlebot treats dynamic parameters at scale. With this operating model, technical uplift is repeatable and low risk, turning SEO improvements into a reliable business function rather than sporadic maintenance.

What is a typical payback period for technical SEO?

In enterprise contexts, we see initial gains from crawl and indexation fixes within 4–8 weeks, with revenue payback often inside one to two quarters. Performance and rendering improvements compound results across longer horizons. A reasonable, data-backed payback estimate is 3–6 months, depending on scale, implementation velocity, and baseline technical debt before uplift work begins.

How do I quantify crawl optimization impact credibly?

Use server logs to establish the wasted crawl ratio and track weekly deltas after changes. Pair with Search Console coverage and discovery stats. Success looks like a higher share of Googlebot hits on 200 HTML, increased discovered and valid indexed URLs, and rising non-brand clicks. Include a holdout group or phased rollout to isolate causal impact from exogenous factors.

Are Core Web Vitals actually ranking factors?

Google frames page experience as part of broader systems evaluating quality, with Core Web Vitals contributing signals. While not standalone ranking switches, they influence visibility and, critically, conversion rate. Field data matters. We treat CWV as dual-impact: modest ranking influence plus strong commercial lift. Improving LCP/INP/CLS reliably produces better monetization on existing or new organic traffic.

Does structured data increase rankings directly?

Structured data helps Google understand content and can enable rich results, which typically lift CTR. It’s not a direct ranking boost, but the indirect gains from better presentation are material. Validity and relevance are imperative—align JSON-LD with on-page content, avoid spammy markup, and monitor enhancement reports. Success is measured in richer SERP features and sustainable click-through increases.

What’s the best way to prioritize technical fixes?

Prioritize by revenue proximity and blast radius. Map each issue to its growth vector (crawl, index, render, vitals, CTR), estimate affected URLs, and forecast expected lift. Sequence tasks with dependencies in mind (e.g., fix redirects before canonicals). Implement guardrails in CI/CD and use canary rollouts. Report weekly on leading indicators so stakeholders see early traction.
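One way to combine scoring with dependency ordering is a topological sort that always picks the highest-scoring fix among those currently unblocked. The Python sketch below uses the standard library's `graphlib`; the score values and the shape of the `fixes` dictionary are hypothetical, and every dependency is assumed to be listed as a fix itself.

```python
from graphlib import TopologicalSorter


def sequence_fixes(fixes: dict[str, dict]) -> list[str]:
    """Order fixes by score while respecting dependencies.

    `fixes` maps a name to {"score": number, "after": [names that must ship first]}.
    """
    sorter = TopologicalSorter({name: fix.get("after", []) for name, fix in fixes.items()})
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    order = []
    ready = list(sorter.get_ready())
    while ready:
        # Among unblocked fixes, take the one with the highest expected payoff.
        ready.sort(key=lambda name: fixes[name]["score"], reverse=True)
        chosen = ready.pop(0)
        order.append(chosen)
        sorter.done(chosen)
        ready.extend(sorter.get_ready())
    return order


if __name__ == "__main__":
    backlog = {
        "canonicals": {"score": 90, "after": ["redirects"]},
        "redirects": {"score": 40, "after": []},
        "schema": {"score": 60, "after": []},
    }
    print(sequence_fixes(backlog))
```

In this example the canonical fix has the highest score but ships last, because it depends on redirects being fixed first.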

How should we staff and govern technical SEO at scale?

Pair a lead technical SEO with platform engineers, QA, and analytics. Define SLAs: response for critical regressions, sprint planning cadences, and ownership of key systems (robots, sitemaps, schema, vitals). Bake performance budgets into CI, maintain a log pipeline, and automate coverage checks. Governance ensures fixes persist, preventing regressions that erode long-term technical SEO ROI.

Turn Compounding Technical Wins Into Revenue

Technical SEO is the lever that makes every content and link dollar work harder. When crawl waste drops, rendering surfaces real content, and Core Web Vitals pass at scale, growth compounds organically and commercially. onwardSEO operationalizes this with rigorous audits, prioritized roadmaps, and implementation oversight that de-risks change. We forecast uplift credibly, prove it with instrumentation, and institutionalize guardrails. If you’re ready to turn technical debt into revenue acceleration, onwardSEO is ready to lead and deliver measurable outcomes fast.