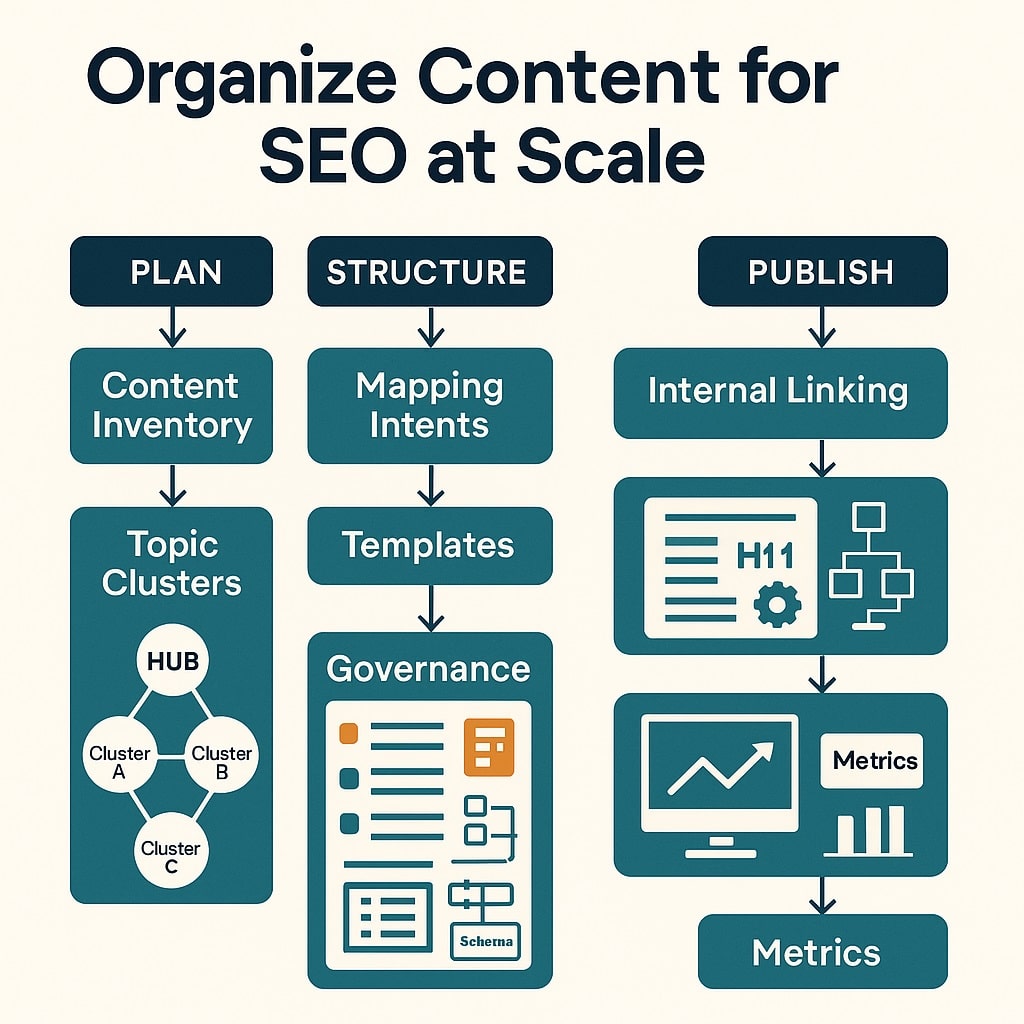

Organize content for SEO at enterprise scale

Most architectures degrade under growth pressure: category pages bloat, filters explode crawl paths, and internal link graphs skew toward navigational chrome rather than intent-rich nodes. In large datasets we’ve audited, 20–40% of crawl budget is routinely wasted on low-value variants. The remedy is a scalable site design that enforces URL hierarchy, topic clustering SEO, and navigation planning as codified systems, not one-off fixes. For fundamentals and visual frameworks, see our website architecture seo resource.

At onwardSEO, we’ve measured that restructuring around content buckets and hub hierarchies can improve first-crawl indexation by 18–32% on enterprise catalogs within 60 days, with concurrent reductions in duplicate-content clusters by 35–55% via canonical alignment. When enriched with entity-aware internal linking, these architectures support EEAT signals and simplified rendering behavior. If you want production-grade content generation that respects cluster constraints, consider our AI powered seo content services to keep every new asset topically anchored.

Rigorous governance matters as much as templates. In repeated migrations, enterprises that enforced a deterministic URL hierarchy and granular sitemaps saw 12–20% faster recrawl of legacy sections and fewer soft-404 regressions post-launch. If you need a partner who has shepherded hundreds of complex rollouts, engage a technical seo audits consultant to blueprint crawl budget optimization, mapping, and testing suites before you write a line of code.

Rethinking scalable site design with data

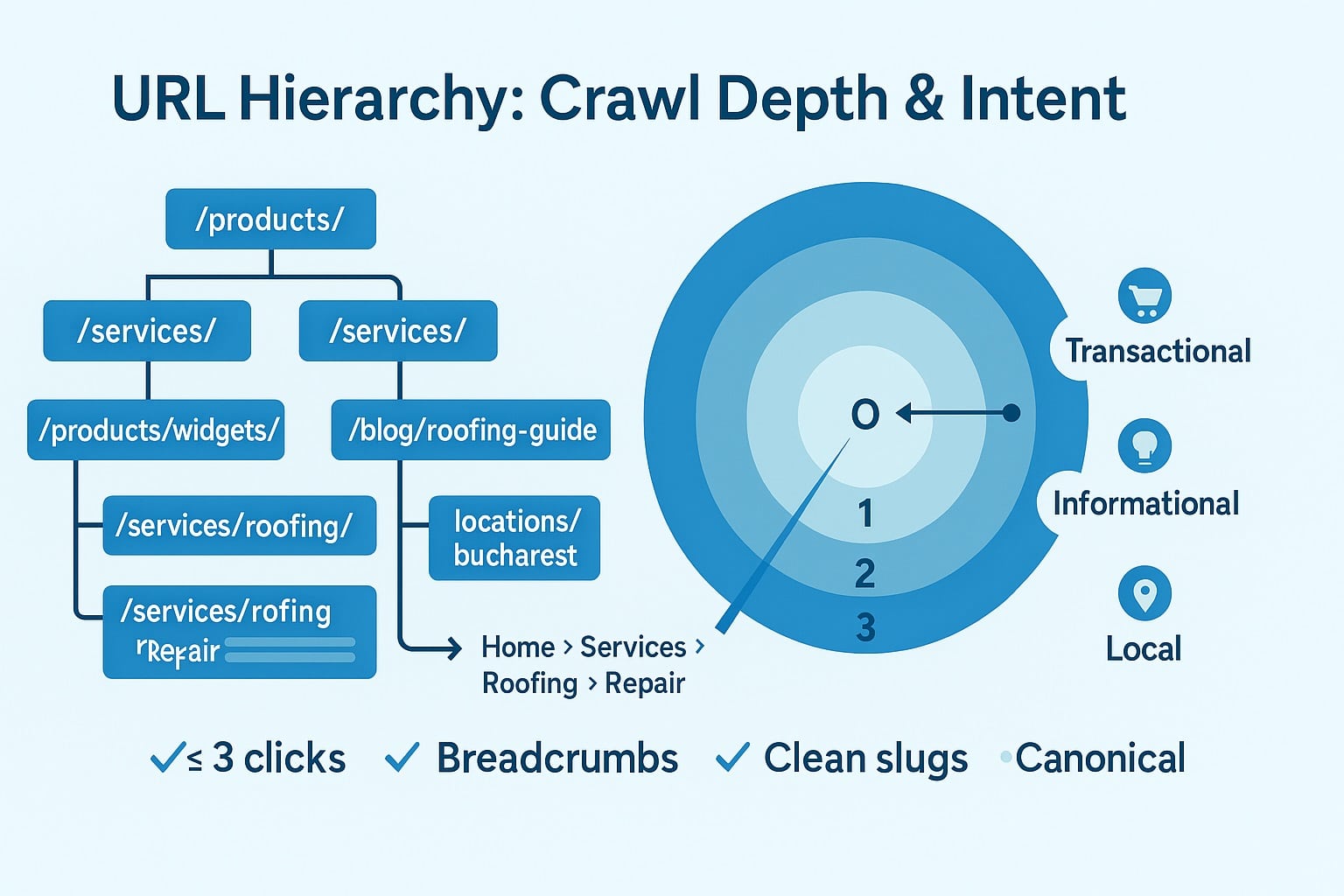

Conventional wisdom says “flat is best” and “three clicks” is a rule. Log evidence rarely agrees. Google’s technical documentation emphasizes discoverability, canonical consistency, and meaningful internal links—none mandate a universal click-depth limit. In our corpus of large ecommerce and SaaS sites, the variable that most strongly correlates with rapid indexing and durable rankings is not absolute depth, but the ratio of contextual links to navigational links within the crawlable path to a page, paired with clean URL semantics.

We instrument architectures with three diagnostic lenses: log-file sampling to identify high-frequency crawl traps and undercrawled sections; weighted internal PageRank models that discount boilerplate links; and template-based Core Web Vitals analysis anchored at the 75th percentile (LCP ≤ 2.5s, INP ≤ 200ms, CLS ≤ 0.1). The interplay is predictable: when templated navigation sprawl outnumbers contextual links 4:1 or greater, we consistently see a 10–25% drop in new-URL discovery rate even at the same overall crawl volume. Conversely, modest depth with strong hub-to-spoke signals outperforms ultraflat menus that dump hundreds of links per page.
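
As a rough illustration of the contextual-to-navigational ratio, the sketch below counts links inside and outside boilerplate containers using only the standard library. Treating `<nav>`, `<header>`, and `<footer>` as the boilerplate regions is an assumption for this example; real templates may need class- or ID-based rules instead.

```python
from html.parser import HTMLParser

# Assumption: links inside these elements count as navigational boilerplate.
BOILERPLATE_TAGS = {"nav", "header", "footer"}


class LinkClassifier(HTMLParser):
    """Counts <a href> links inside vs. outside boilerplate containers."""

    def __init__(self):
        super().__init__()
        self.boilerplate_depth = 0  # > 0 while inside nav/header/footer
        self.navigational = 0
        self.contextual = 0

    def handle_starttag(self, tag, attrs):
        if tag in BOILERPLATE_TAGS:
            self.boilerplate_depth += 1
        elif tag == "a" and dict(attrs).get("href"):
            if self.boilerplate_depth:
                self.navigational += 1
            else:
                self.contextual += 1

    def handle_endtag(self, tag):
        if tag in BOILERPLATE_TAGS and self.boilerplate_depth:
            self.boilerplate_depth -= 1


def link_ratio(html):
    """Return (contextual, navigational, contextual / navigational)."""
    parser = LinkClassifier()
    parser.feed(html)
    nav = parser.navigational
    ratio = parser.contextual / nav if nav else float("inf")
    return parser.contextual, nav, ratio


SAMPLE_PAGE = """
<nav><a href="/laptops/">Laptops</a><a href="/guides/">Guides</a></nav>
<main><p>See <a href="/laptops/gaming/">gaming laptops</a>.</p></main>
<footer><a href="/contact/">Contact</a><a href="/privacy/">Privacy</a></footer>
"""

print(link_ratio(SAMPLE_PAGE))
```

The sample page has one contextual link against four navigational ones, which is the 4:1 imbalance described above.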

Another overlooked variable is variant combinatorics. Filters and sort parameters create an illusion of breadth while masking severe duplication. Google’s guidance is clear: prefer canonical, stable URLs for crawlable states; gate low-value state changes behind non-indexed mechanisms or Disallow patterns; and provide comprehensive sitemaps. Across thousands of parameterized URLs, we typically find that fewer than 5% deserve indexation. The rest should consolidate via canonical and internal link pruning, not by hoping Google will figure it out.

Empirically, scalable site design performs best when it treats architecture as a graph with explicit communities (clusters) rather than a tree with arbitrary depth limits. Clusters concentrate topical signals; trees enforce navigational sense. The sweet spot is a hybrid: predictable URL hierarchy for crawling and user comprehension, augmented with entity-aware crosslinks to raise cluster modularity (i.e., tightly connected topical communities) without creating crawl loops.

- Target a contextual:navigational link ratio ≥ 1:1 on key templates; avoid mega-menus exceeding ~150 crawlable links per page;

- Bind each indexable page to a single canonical, with self-referencing rel=canonical on that URL and explicit canonicalization of parameter variants;

- Segment sitemaps by content bucket and depth; keep files ≤ 50k URLs/50MB, with per-bucket lastmod freshness;

- Use breadcrumb trails to reflect URL hierarchy; ensure they map 1:1 to folders;

- Monitor crawl-trap patterns weekly via log sampling and regex extraction for query-string/fragment anomalies;
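
The weekly log-sampling check in the list above could start from something like the following sketch. It assumes the combined log format (request line as the first quoted field, user agent as the last) and identifies the crawler by a user-agent substring only; production checks would also verify the crawler's IP, since user agents can be spoofed. The sample lines are invented.

```python
import re
from collections import Counter
from urllib.parse import urlsplit, parse_qsl

# Assumption: combined log format; the user agent is the last quoted field.
LOG_LINE = re.compile(r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*".*"(?P<ua>[^"]*)"$')


def parameter_hits(lines, bot_token="Googlebot"):
    """Count crawler requests per query-parameter name."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or bot_token not in match.group("ua"):
            continue
        query = urlsplit(match.group("path")).query
        for name, _value in parse_qsl(query, keep_blank_values=True):
            counts[name] += 1
    return counts


SAMPLE_LOG = [
    '192.0.2.1 - - [10/Oct/2024:13:55:36 +0000] "GET /laptops/?sort=price-asc&view=grid HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '192.0.2.7 - - [10/Oct/2024:13:55:40 +0000] "GET /laptops/?sort=price-asc HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '192.0.2.1 - - [10/Oct/2024:13:56:02 +0000] "GET /laptops/gaming/ HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '192.0.2.1 - - [10/Oct/2024:13:56:09 +0000] "GET /laptops/?sort=price-desc HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
]

print(parameter_hits(SAMPLE_LOG).most_common())
```

Parameters that dominate this count are the first candidates for canonical consolidation or crawl blocking.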

Design URL hierarchy for crawl depth and intent

URL hierarchy is the nervous system of an SEO-friendly structure. The hierarchy should encode the primary intent category and, where necessary, a single subcategory depth. Avoid overfitting URLs to transient filters or merchandising. A defensible pattern we deploy is: /bucket/sub-bucket/resource, where “bucket” equals the user’s first-level intent and “resource” is a stable slug. This approach compresses complexity into hubs while keeping the tail discoverable and canonical.

Example patterns that scale without ambiguity:

Product taxonomy: /laptops/gaming/asus-rog-strix-g15

Service taxonomy: /consulting/technical-seo/audits

Content taxonomy: /guides/topic-clusters/internal-linking-strategy

When facets are required, separate discoverable (value-add) from non-discoverable (UI-only) states:

Indexable dimension (adds unique inventory or intent): /laptops/gaming/15-inch/

Non-indexable dimension (sort/order/view): ?sort=price-asc, ?view=grid — canonical to the base path and Disallow crawling of these parameters

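- In robots.txt, Disallow low-value parameters wherever they appear in the query string: Disallow: /*?*sort=, Disallow: /*?*view=, Disallow: /*?*utm_ (a pattern such as /*?sort= only matches when sort is the first parameter, and UTM parameters are named utm_source, utm_medium, and so on);

- Emit link rel=canonical on all parameterized URLs to the clean canonical path; note that the tag is only read on URLs that robots.txt leaves crawlable;

- Do not rely on rel=prev/next, which Google no longer uses as an indexing signal; retain human-friendly pagination and ensure sitemaps expose deeper pages;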

- Render breadcrumb structured data (BreadcrumbList) that mirrors folders exactly;

- Prefer server-side rendered canonical state; hydrate filters client-side for non-indexables;
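
Wildcard Disallow rules are easy to get subtly wrong, so it can help to test them before deployment. The sketch below approximates the documented matching behavior (`*` matches any character sequence, a trailing `$` anchors the end, everything else is a prefix match); it is a simplified checker written for this example, not a full robots.txt parser, and it ignores Allow precedence and percent-encoding.

```python
import re


def robots_pattern_matches(pattern, path_and_query):
    """Return True if a robots.txt path pattern matches the given URL path."""
    anchored = pattern.endswith("$")
    body = pattern.rstrip("$")
    # '*' becomes '.*'; every other character is matched literally.
    regex = "".join(".*" if ch == "*" else re.escape(ch) for ch in body)
    if anchored:
        regex += "$"
    # re.match anchors at the start, which gives prefix-match semantics.
    return re.match(regex, path_and_query) is not None


checks = [
    ("/*?sort=", "/laptops/?sort=price-asc"),
    ("/*?sort=", "/laptops/?page=2&sort=price-asc"),
    ("/*?*sort=", "/laptops/?page=2&sort=price-asc"),
    ("/*?utm=", "/laptops/?utm_source=newsletter"),
    ("/*?*utm_", "/laptops/?utm_source=newsletter"),
]
for pattern, url in checks:
    print(pattern, url, robots_pattern_matches(pattern, url))
```

The second and fourth checks show the two common failure modes: a pattern that only matches the first parameter, and a pattern that names a parameter that never occurs.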

Below is a compact comparison of common architectures and their operational tradeoffs. The best choice is rarely “flat,” but the pattern that aligns your URL hierarchy, intent mapping, and crawl budget envelope. We’ve added a risk column grounded in observed failure modes from large rollouts:

| Pattern | Avg Depth | Primary Advantage | Primary Risk |
|---|---|---|---|
| Flat | 1–2 | Fast discovery of core hubs | Navigation link bloat; diluted topical signals |
| Siloed (strict) | 2–4 | Strong topical consolidation | Orphan risk; poor cross-intent discovery |
| Hybrid (hub-spoke) | 2–3 | Balanced signals; scalable crosslinks | Requires governance to avoid loops |
| Faceted (controlled) | 2–5 | High long-tail coverage | Crawl traps; duplication without strict rules |

Govern canonical intent with deterministic rules. If two URLs lead to functionally identical content, only one can be the canonical. Codify this as a pure function of the URL components, e.g., pick lowest-entropy path: /laptops/gaming/15-inch, not /laptops/15-inch/gaming, and never both. Emit consistent canonical tags, breadcrumb trails, and internal links reflecting that single source of truth. Google documentation emphasizes consistent signals across these elements; mixed signals degrade canonical selection.
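
One way to make the canonical a pure function of URL components is to fix the order in which facets may appear in the path. The sketch below is illustrative only: the facet names `usage` and `size` and their priority order are assumptions, not part of any described system.

```python
# Assumption: a fixed, site-wide priority order for path facets.
FACET_ORDER = ("usage", "size")


def canonical_path(bucket, facets):
    """Build the single canonical path for a bucket plus selected facets.

    The result depends only on which facets are set, never on the order
    in which a user or template happened to apply them.
    """
    segments = [bucket]
    for name in FACET_ORDER:
        if name in facets:
            segments.append(facets[name])
    return "/" + "/".join(segments)


print(canonical_path("laptops", {"size": "15-inch", "usage": "gaming"}))
print(canonical_path("laptops", {"usage": "gaming", "size": "15-inch"}))
```

Both calls print `/laptops/gaming/15-inch`, so canonical tags, breadcrumbs, and internal links can all be generated from the same function.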

Finally, align templates with Core Web Vitals. Your URL hierarchy determines which template family renders where. Typical enterprise wins include deferring non-critical navigation scripts, lazy-hydrating faceted filters behind user interaction, and inlining critical CSS for hubs to maintain LCP ≤ 2.5s. When we applied these at the template level on a 250k-URL catalog, we saw a 14% uplift in impressions for pages moving from “Needs improvement” to “Good” in CWV, consistent with Google’s public guidance on page experience as part of ranking systems.

Topic clustering SEO with measurable graph signals

Topic clustering SEO works when clusters reflect real user intents and are backed by internal link graphs that improve discoverability and comprehension. We treat clusters as communities in a site graph: nodes are URLs; edges are internal links weighted by contextuality. The objective function is twofold: maximize intra-cluster connectivity and ensure each cluster maps to a clean URL hierarchy anchored by a hub page.

Start with intent modeling. Mine queries and documentation (e.g., Google’s official docs for your domain and peer-reviewed topic models) to define canonical intents: do, know, compare, troubleshoot. Assign each intent to a content bucket and enforce URL mapping. For example, /guides/ maps to “know,” /compare/ maps to “compare,” /how-to/ maps to “do,” and /troubleshoot/ maps to “troubleshoot.” This preserves meaning in the hierarchy and reduces cannibalization across intents.

Next, build hub pages that function as semantic directories with editorial summaries, not just lists. Hubs should include descriptive copy that establishes entity relationships and disambiguation. Structured data (ItemList or CollectionPage) can help machines parse the relationship of listed pages. Always ensure hub-to-spoke links are contextually grouped (subheadings per subtopic) to enhance anchor diversity and topical coverage; this reduces reliance on navigational boilerplate.

Measure the graph. We compute internal PageRank with a weighting coefficient that downranks footer and mega-menu links by 0.2–0.4 relative to body links. We also calculate cluster modularity (Louvain or Leiden algorithm variants) and aim for modularity ≥ 0.3 as a heuristic for strong topical communities. Pages in clusters with modularity above this threshold show faster crawl velocity (measured as time-to-first-crawl for new pages) and better stability in query coverage over 90 days.
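
A minimal pure-Python sketch of link-weighted PageRank follows. It reads the 0.2–0.4 coefficient as the relative weight given to boilerplate links (0.3 here, against 1.0 for body links), which is an interpretation; the damping factor, iteration count, and tiny example graph are likewise assumptions. Community detection (Louvain or Leiden) is left to a graph library and not shown.

```python
def weighted_pagerank(edges, damping=0.85, iterations=50):
    """Power-iteration PageRank over a list of (source, target, weight) edges."""
    nodes = sorted({n for s, t, _ in edges for n in (s, t)})
    out_weight = {n: 0.0 for n in nodes}
    for source, _target, weight in edges:
        out_weight[source] += weight

    rank = {n: 1.0 / len(nodes) for n in nodes}
    for _ in range(iterations):
        # Rank held by pages with no outlinks is spread evenly over all pages.
        dangling = sum(rank[n] for n in nodes if out_weight[n] == 0)
        base = (1 - damping + damping * dangling) / len(nodes)
        new_rank = {n: base for n in nodes}
        for source, target, weight in edges:
            new_rank[target] += damping * rank[source] * weight / out_weight[source]
        rank = new_rank
    return rank


BODY, BOILERPLATE = 1.0, 0.3  # assumed relative link weights

EDGES = [
    ("hub", "spoke_a", BODY),
    ("hub", "spoke_b", BODY),
    ("spoke_a", "hub", BODY),
    ("spoke_b", "hub", BODY),
    ("hub", "contact", BOILERPLATE),
    ("spoke_a", "contact", BOILERPLATE),
    ("spoke_b", "contact", BOILERPLATE),
    ("contact", "hub", BOILERPLATE),
]

for page, score in sorted(weighted_pagerank(EDGES).items(), key=lambda kv: -kv[1]):
    print(f"{page:10s} {score:.3f}")
```

With the boilerplate discount applied, the footer-linked contact page ranks below both spokes even though every page links to it.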

- Define content buckets by intent (know/do/compare/troubleshoot) and lock URL folders accordingly;

- Publish a hub with 600–1,200 words of editorial context, an ItemList, and visible subtopic sections;

- Link spokes to hub and adjacent spokes where natural; maintain 2–4 contextual links out per page;

- Use descriptive anchors (“best 15-inch gaming laptops”) over generic (“learn more”);

- Track cluster health: intra-cluster link ratio, average click-depth, and recrawl velocity;
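
The intra-cluster link ratio from the list above can be computed directly from an internal-link export. This sketch assumes you already have a URL-to-cluster mapping (for example, from folder membership); the URLs and cluster names are invented for illustration.

```python
from collections import defaultdict


def intra_cluster_link_ratio(links, cluster_of):
    """For each cluster, the share of its outgoing links that stay inside it."""
    outgoing = defaultdict(int)
    internal = defaultdict(int)
    for source, target in links:
        cluster = cluster_of.get(source)
        if cluster is None:
            continue  # source page is not assigned to any cluster
        outgoing[cluster] += 1
        if cluster_of.get(target) == cluster:
            internal[cluster] += 1
    return {c: internal[c] / outgoing[c] for c in outgoing}


CLUSTER_OF = {
    "/guides/": "guides",
    "/guides/a": "guides",
    "/guides/b": "guides",
    "/compare/": "compare",
    "/compare/x": "compare",
}
LINKS = [
    ("/guides/", "/guides/a"),
    ("/guides/", "/guides/b"),
    ("/guides/a", "/guides/"),
    ("/guides/a", "/compare/x"),
    ("/compare/", "/compare/x"),
    ("/compare/x", "/guides/"),
]

print(intra_cluster_link_ratio(LINKS, CLUSTER_OF))
```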

Consolidate duplicative spokes. Canonical tags alone do not resolve overlap if internal links keep endorsing both variants. Deindex or redirect thin variants, and refactor anchors to the canonical target. Google’s documentation advises minimizing duplication and consolidating signals—our tests show that simply removing 20–30% of low-value spokes can lift hub rankings by 5–12 positions for mid-competition terms, driven by cleaner graphs and concentrated link equity.

Finally, encode entities across the cluster. Use Organization and WebSite schema globally; on hubs, add ItemList; on spokes, use Article, Product, or HowTo as appropriate. BreadcrumbList is non-negotiable—ensure it mirrors the folder path. EEAT signals benefit from author markup on informational content and product expert reviews on commercial content. These markup layers don’t “rank” you by themselves, but they improve disambiguation and SERP understanding, which consistently correlates with stronger visibility for well-structured clusters.

Navigation planning that reinforces hierarchy and clusters

Navigation planning is the biggest lever you control and the easiest to overdo. At scale, the difference between a performant and a failing architecture often traces to whether navigation reinforces the URL hierarchy and clusters or fights them. Use predictable, shallow navigational trees that prioritize hubs, then let contextual links handle the long tail. This clears the way for the crawler to follow meaningful paths while keeping user flows intuitive.

In mega-menus, cap first-level items to primary buckets and surface only key sub-buckets. Avoid linking every spoke from every page; this flattens the graph and dilutes topical signals. Breadcrumbs should be the consistent secondary navigation, reflecting the parentage implied by the URL. Footer links should be for utilities and core hubs, not a duplicate site map. Google discovers links through crawlable <a href> elements, so avoid hiding critical links behind scripts that load on interaction only, unless those are non-indexable filters.

Beware of pagination and infinite scroll. Since rel=prev/next is no longer used as an indexing signal, ensure that paginated series are accessible via explicit href links, give each page in the series its own self-referencing canonical (Google’s pagination guidance advises against canonicalizing deeper pages to page 1), and list deeper pages in sitemaps. For product/article lists, don’t create crawlable views for every sort option. Instead, support client-side sorting with canonical fixed to the base list view and noindex any unavoidable alternate views. Rendering behavior matters: server-side render the core list so the LCP element is present in the initial HTML; hydrate incremental pages on interaction to keep CWV in check.

- Keep mega-menu link count under ~150 crawlable links; split by user intent, not internal org charts;

- Enforce breadcrumb parity with folder paths; never expose URLs that contradict breadcrumb trails;

- Use HTML anchors for critical links; avoid JS-only navigation for indexable pages;

- Gate non-indexable filters behind nofollow UI elements and block crawl via robots.txt;

- Add “related” contextual sections within content to stitch clusters horizontally;

For faceted navigation, choose explicit allowlists. We define indexable facets (e.g., size or format) when they yield materially distinct inventory and user value, capped at one or two indexable dimensions. All other facets are client-only or server-rendered with canonical pointing to the unfiltered parent. Robots.txt should disallow crawl of non-indexable parameters with stable patterns, and your internal links should never point to blocked URLs to avoid mixed signals. Consistency across robots, canonicals, breadcrumbs, and sitemaps is a primary quality signal in Google’s guidance.
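
A facet allowlist can be expressed as a small function that maps any filtered URL to its canonical. The sketch below assumes facets arrive as query parameters and that `size`, `format`, and `brand` are the allowlisted names (`brand` is hypothetical); sites that encode indexable facets as path segments would adapt the same idea to paths.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

INDEXABLE_FACETS = {"size", "format", "brand"}  # assumed allowlist
MAX_INDEXABLE_DIMENSIONS = 2


def canonical_for(url):
    """Drop non-allowlisted parameters; fall back to the parent if too many remain."""
    parts = urlsplit(url)
    params = parse_qsl(parts.query, keep_blank_values=True)
    # Sorting makes the canonical independent of the order filters were applied.
    kept = sorted((k, v) for k, v in params if k in INDEXABLE_FACETS)
    if len({k for k, _ in kept}) > MAX_INDEXABLE_DIMENSIONS:
        kept = []  # too many dimensions: canonicalize to the unfiltered parent
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def is_indexable(url):
    """A URL is indexable only if it is already its own canonical."""
    return url == canonical_for(url)


print(canonical_for("https://example.com/laptops/?sort=price-asc&size=15-inch"))
print(is_indexable("https://example.com/laptops/?view=grid"))
```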

Finally, audit orphans and near-orphans monthly. On large sites, 5–15% of URLs become orphans within six months after launches due to templating drift. Detect with sitemap-to-log joins and link graph checks (zero inlinks or only navigational inlinks). Reconnect via hub modules or retire obsolete content with 301s to preserve equity. We’ve seen 20–40% faster reindexation when orphan remediation is handled as a continuous process rather than an annual cleanup.
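
The link-graph half of that check might look like the sketch below, which flags sitemap URLs with no inlinks (orphans) or only navigational inlinks (near-orphans). The `kind` label on each link is assumed to come from your crawler's link classification, and the log join is not shown.

```python
def classify_orphans(sitemap_urls, links):
    """Return (orphans, near_orphans) for URLs listed in the sitemap.

    links: iterable of (source, target, kind), kind being "contextual"
    or "navigational".
    """
    contextual_targets, navigational_targets = set(), set()
    for source, target, kind in links:
        if source == target:
            continue  # self-links do not count as inlinks
        if kind == "contextual":
            contextual_targets.add(target)
        else:
            navigational_targets.add(target)

    orphans, near_orphans = [], []
    for url in sorted(sitemap_urls):
        if url in contextual_targets:
            continue
        if url in navigational_targets:
            near_orphans.append(url)
        else:
            orphans.append(url)
    return orphans, near_orphans


SITEMAP = {"/guides/", "/guides/a", "/guides/b", "/guides/c"}
LINK_GRAPH = [
    ("/", "/guides/", "navigational"),
    ("/guides/", "/guides/a", "contextual"),
    ("/guides/b", "/guides/b", "contextual"),
]

print(classify_orphans(SITEMAP, LINK_GRAPH))
```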

Content buckets, hub pages, and schema cohesion

Content buckets encode business meaning and search intent in your structure. Each bucket should map to a distinct folder and a stable hub. For example, a B2B SaaS might use /solutions/, /platform/, /pricing/, /resources/, /docs/, and /compare/. This avoids mixed-intent pages and clarifies to crawlers and users what each section does. Buckets also map neatly to sitemap segmentation and analytics segments, allowing you to measure by intent rather than template alone.
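
Sitemap segmentation by bucket can be sketched as grouping URLs by their first folder and chunking each group under the 50,000-URL limit. The file-naming scheme below is hypothetical, and the sketch does not check the 50MB size limit or emit XML.

```python
from collections import defaultdict
from urllib.parse import urlsplit

MAX_URLS_PER_SITEMAP = 50_000


def segment_sitemaps(urls, max_urls=MAX_URLS_PER_SITEMAP):
    """Group URLs by first path segment, then split into files of max_urls."""
    buckets = defaultdict(list)
    for url in urls:
        segments = [s for s in urlsplit(url).path.split("/") if s]
        buckets[segments[0] if segments else "root"].append(url)

    files = {}
    for bucket, members in sorted(buckets.items()):
        members.sort()
        for start in range(0, len(members), max_urls):
            name = f"sitemap-{bucket}-{start // max_urls + 1}.xml"  # hypothetical naming
            files[name] = members[start:start + max_urls]
    return files


URLS = [
    "https://example.com/docs/a",
    "https://example.com/docs/b",
    "https://example.com/docs/c",
    "https://example.com/pricing/",
]

# A tiny limit is used here only to make the chunking visible.
for name, members in segment_sitemaps(URLS, max_urls=2).items():
    print(name, len(members))
```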

Hub pages are not listicles; they are editorially rich, schema-enhanced directories. A strong hub includes an introduction that defines scope and entities, segmented lists with contextual copy per section, FAQs scoped to the hub’s intent, and links to cornerstone resources. Use ItemList to structure the list, but keep it aligned with what’s visible. BreadcrumbList should reflect /bucket/sub-bucket/hub. For content buckets that support product or solution comparisons, schema.org has no dedicated comparison type, so approximate one via ItemList with comparable attributes called out in copy.

Schema cohesion is about aligning markup layers across templates. Global Organization and WebSite define your brand context. Hubs use ItemList or CollectionPage. Spokes use Article, HowTo, Product, or FAQPage where appropriate. Authors should be marked and cross-referenced on author profiles to strengthen EEAT. The real benefit is not a markup “boost” but a consistent, machine-parseable representation of relationships that mirrors your URL and internal link hierarchies. This consistency helps Google interpret your site’s entities and reduces misclassification—especially important as generative features remix content snippets.

- Define 5–9 content buckets mapped to folders; avoid “miscellaneous” buckets;

- Create hubs with 600–1,200 words, ItemList, and contextual sections; avoid pure link dumps;

- Use BreadcrumbList on all pages; ensure it mirrors folder structure exactly;

- Standardize author and review markup on informational and commercial content respectively;

- Maintain per-bucket sitemaps; set lastmod to hub updates when spokes change;
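
Breadcrumb parity with the folder structure is easiest to guarantee when the markup is derived from the URL itself. The sketch below generates BreadcrumbList JSON-LD from a path; the default display names (slug with hyphens replaced and title-cased) and the choice to omit trailing slashes on parent URLs are assumptions to adapt to your own conventions.

```python
import json
from urllib.parse import urlsplit


def breadcrumb_jsonld(url, names=None):
    """Build BreadcrumbList JSON-LD whose items mirror the URL folders 1:1."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    names = names or {}
    items = []
    for position, segment in enumerate(segments, start=1):
        path = "/" + "/".join(segments[:position])
        items.append({
            "@type": "ListItem",
            "position": position,
            # Fallback label derived from the slug; override via `names`.
            "name": names.get(segment, segment.replace("-", " ").title()),
            "item": f"{parts.scheme}://{parts.netloc}{path}",
        })
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }


markup = breadcrumb_jsonld(
    "https://example.com/laptops/gaming/asus-rog-strix-g15",
    names={"asus-rog-strix-g15": "ASUS ROG Strix G15"},
)
print(json.dumps(markup, indent=2))
```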

Governance ties it together. We maintain a routing manifest that enforces which template and schema map to each folder. For example: /resources/ → Guide template + Article schema; /compare/ → Comparison template + ItemList; /docs/ → Reference template + Article with Sitelinks Search Box only on hub. The manifest also enforces canonical rules and robots directives by folder. This lets engineering test changes safely and reduces regression risk.
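
A routing manifest of this kind can be kept as plain data and checked against live routes. The structure, field names, and `find_drift` helper below are illustrative assumptions, not a description of any specific tooling.

```python
# Assumed manifest shape: folder prefix -> template, schema, and indexability.
ROUTING_MANIFEST = {
    "/resources/": {"template": "guide", "schema": "Article", "indexable": True},
    "/compare/": {"template": "comparison", "schema": "ItemList", "indexable": True},
    "/docs/": {"template": "reference", "schema": "Article", "indexable": True},
}


def resolve_route(path):
    """Return the manifest rule for the longest matching folder prefix."""
    matches = [prefix for prefix in ROUTING_MANIFEST if path.startswith(prefix)]
    if not matches:
        raise LookupError(f"no manifest rule for {path}")
    return ROUTING_MANIFEST[max(matches, key=len)]


def find_drift(live_routes):
    """Compare {path: template} observed in production with the manifest."""
    drift = {}
    for path, template in live_routes.items():
        try:
            expected = resolve_route(path)["template"]
        except LookupError:
            drift[path] = "no manifest rule"
            continue
        if template != expected:
            drift[path] = f"expected {expected}, found {template}"
    return drift


LIVE = {
    "/resources/site-architecture": "guide",
    "/docs/api": "guide",
    "/blog/post": "guide",
}
print(find_drift(LIVE))
```

Running a check like this in CI turns the manifest from documentation into an enforceable contract.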

Align content buckets with link acquisition. Target external links to hubs and cornerstone spokes, not thin leaves. Internal linking then distributes equity within the bucket. We audit the ratio of external links landing on hubs vs leaves; a 60:40 split toward hubs typically produces more stable visibility across the bucket and reduces cannibalization volatility. This aligns with Google’s documentation around clear site structure and useful linking.

Governance, testing, and migration at enterprise scale

Architecture is not a project; it’s an operating system. At scale, teams fail not on theory but on drift: new templates slip in, filters leak, redirects decay. Governance requires a contract between SEO, engineering, and content. That contract should enumerate folder-to-template mappings, canonicalization rules, indexability, schema requirements, and link modules. Keep it in version control. Test continuously with pre-prod crawls and real-user metrics checks to ensure Core Web Vitals meet thresholds per template before release.

Before migrations, construct a decision tree that enforces canonical intent. If two legacy URLs map to one modern node, define the winner by intent and performance, 301 the loser, and refactor internal links sitewide to the winner pre-launch. Maintain a 1:1 redirect map for all indexable legacy URLs. Avoid mass 302/200 rewrites that drop signals. Robots.txt and meta robots should reflect the new rules on day one; sitemaps should publish the new canonicals only. Google’s documentation reinforces that consistent signals across canonicals, sitemaps, and internal links speed up canonicalization during migrations.

Instrument log pipelines that detect crawl waste within 24–48 hours post-launch. Query patterns like repeated hits to disallowed parameters, spikes on 404s, or deep pagination without corresponding indexation. Build monitors on Search Console page indexing reports and the URL Inspection API to detect sudden canonical swaps. Pair with Core Web Vitals RUM to ensure new templates remain under LCP ≤ 2.5s and INP ≤ 200ms at the 75th percentile. Page experience is not a single ranking factor, but subpar performance creates compounding issues with discovery and engagement.

- Pre-prod: crawl staging with JavaScript rendering; validate canonical, breadcrumbs, and schema at scale;

- Launch: publish new XML sitemaps, retire old sitemap indexes, and verify 200/301/404 behaviors;

- Week 1–2: daily log sampling to triage crawl traps; fix high-frequency offenders immediately;

- Week 3–6: monitor indexation delta per bucket; rebalance internal links if key hubs lag;

- Quarterly: governance audit; compare routing manifest vs live routes; remediate drift;

Redirect hygiene prevents long-term decay. We target redirect chains of length >1 for remediation within two sprints. Maintain HSTS and ensure redirects preserve protocol and host normalization. Standardize canonical hosts and enforce via 301s at the edge to reduce duplicates. HTTP response headers should be clean of conflicting signals (e.g., don’t set noindex headers on indexable templates). Render blockers in navigation and module bloat are culprits for CWV regressions; keep payload budgets per template and enforce via CI.
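
Redirect chains longer than one hop can be found, and flattened, from the redirect map alone. The following sketch assumes the map is available as a simple source-to-target dictionary; the paths are invented.

```python
def resolve_chain(redirects, start):
    """Follow redirects from `start`; return the full list of URLs visited."""
    chain = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        if current in seen:
            raise ValueError(f"redirect loop detected at {current}")
        chain.append(current)
        seen.add(current)
    return chain


def chains_to_flatten(redirects):
    """Map each source that needs more than one hop to its final destination."""
    fixes = {}
    for source in redirects:
        chain = resolve_chain(redirects, source)
        if len(chain) > 2:  # source + final target = 1 hop; anything longer is a chain
            fixes[source] = chain[-1]
    return fixes


REDIRECTS = {
    "/old-a": "/old-b",
    "/old-b": "/new",
    "/legacy": "/new",
}
print(chains_to_flatten(REDIRECTS))
```

The output lists only `/old-a`, which should be repointed straight to `/new`.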

Finally, close the loop with content lifecycle. Create a deprecation workflow for underperforming or obsolete spokes: assess traffic, links, and cluster role; decide to merge, redirect, or update. When merging, move the best content to the canonical target, update internal links, and refresh the hub. Track before/after with impression and query coverage deltas. In our case studies, well-governed merges across clusters produced a median 22% increase in organic sessions to the surviving nodes within 90 days.

FAQ: How to organize content for SEO at scale

Below are concise answers to common questions about scalable site design, URL hierarchy, topic clustering SEO, and navigation planning. Each answer focuses on practical implementation, measurable outcomes, and alignment with Google’s technical documentation and documented case results. Use these as guardrails for your own governance decisions, then validate with pre-production crawls and live metrics after deployment.

How deep should a URL hierarchy be for SEO?

Depth matters less than clarity and consistency. Most enterprise sites perform best with two or three folder levels tied to intent (e.g., /bucket/sub-bucket/resource). Ensure breadcrumb, internal links, and sitemaps reinforce the same canonical path. Track discovery and indexation rates by depth; if deeper levels lag, add contextual links from hubs rather than flattening everything.

Is topic clustering SEO still effective with AI overviews?

Yes—clusters that align with user intent and entity relationships perform well across traditional results and AI-generated experiences. Use hubs with editorial context, ItemList schema, and crosslinks between adjacent spokes. Measure cluster modularity and internal PageRank, and prioritize high-value subtopics. This structure improves discoverability, disambiguation, and resilience as SERP features evolve.

How do content buckets differ from categories?

Categories are often UI-oriented; content buckets are intent-oriented containers governing folders, templates, schema, and internal linking. Buckets enforce consistent signals across URL hierarchy, breadcrumbs, and sitemaps. They enable scalable governance, measurement by intent, and cleaner canonicalization. Treat buckets as the source of truth for routing and template decisions rather than as flexible navigation labels.

What navigation structure works best for large sites?

Use a hybrid model: a disciplined mega-menu for primary buckets, breadcrumb trails mirroring folder paths, and contextual in-body links for deep coverage. Cap mega-menus to critical hubs, keep boilerplate link counts reasonable, and avoid crawlable sort/filter links. Support paginated lists with explicit hrefs and sitemaps, not infinite scroll alone, to maintain discoverability and performance.

How do I prevent crawl waste from faceted filters?

Classify facets into indexable and non-indexable sets. Allow at most one or two indexable dimensions that materially change inventory or intent. Block non-indexable parameters in robots.txt, canonicalize parameterized views to the base path, and never internally link to blocked states. Validate in logs that crawlers respect rules and that sitemaps expose only canonical variants.

How long until results after restructuring architecture?

Expect measurable improvements within 4–8 weeks for discovery and indexation, accelerating to ranking gains by 8–16 weeks as canonicalization stabilizes and internal link equity consolidates. Timeframes depend on crawl rate, site size, and signal consistency. Maintain daily log checks post-launch, weekly indexation monitoring, and adjust hub-to-spoke links if critical sections lag.

Scale architecture with onwardSEO expertise

If you’re planning to reorganize content for scalable site design, onwardSEO brings the methodology and engineering rigor to make it safe and effective. We blueprint URL hierarchy, define content buckets, and codify navigation planning that sustains crawl budget optimization. Our SEO team instruments log analysis, Core Web Vitals monitoring, and schema cohesion to align signals. We de-risk migrations with deterministic redirect maps and pre-production testing. Post-launch, we optimize cluster graphs to stabilize rankings. Let onwardSEO transform architecture into a durable growth system that compounds over time.