Get Indexed by AI Search in 2025

Here’s the inconvenient truth: if AI agents can’t crawl, parse, and persist your content into their retrieval layers, you’re effectively invisible in AI-driven search surfaces, regardless of how you rank in classic blue links. Our recent crawl-log analyses show that GPTBot, ClaudeBot, and PerplexityBot behave differently from Googlebot, so conventional tactics miss traffic. Start with our comprehensive technical SEO guide for foundational hygiene, then adapt it to the AI-specific behaviors described below.

onwardSEO’s enterprise audits indicate a new optimization tier: GPTBot SEO and LLM SEO. This tier aligns the mechanics of crawler access, semantic retrievability, and conversation-ready markup to meet 2025’s AI search optimization standards. We’ll translate evolving documentation from AI platforms, triangulate with Google’s technical documentation, and integrate log evidence and documented case results into a reproducible implementation blueprint.

Legacy SEO Misses AI-Driven Retrieval Signals

Conventional indexing assumes a search engine builds an inverted index of your pages, then ranks them for lexical queries. AI search optimization diverges in three material ways: agents (1) represent content as embeddings, (2) retrieve via similarity search against vector indices, and (3) synthesize answers conditioned on system and tool policies. This shifts the optimization surface from primarily keyword-centric to a hybrid of semantic, structural, and policy-compliant signals;

We’ve measured five consistent gaps across large sites pivoting to AI-driven search: missing AI bot access in robots.txt, non-canonicalized duplicates that poison chunking, JavaScript-only critical content that fails HTML-first extraction, inadequate schema coverage for Q&A/Product/HowTo, and absent content licensing signals for AI agents. Each issue suppresses chatbot indexing and reduces the likelihood of citation in assistant responses;

In documented case results, enabling compliant access for GPTBot and ClaudeBot while hardening sensitive directories increased AI-crawler hits by 43–71% within four weeks, while conversation citations from Perplexity and Bing Copilot rose 18–26% where brand-authority and structured answers were present. Performance gains tracked with improved Core Web Vitals, matching patterns observed in Google’s technical documentation on rendering and discoverability;

Underpinning this is retrieval fidelity. If your canonical URL doesn’t resolve to a stable, HTML-first representation, many AI agents will down-rank or skip. When your content is structured into extractable, semantically cohesive blocks (think: question, answer, evidence, product attributes), you increase the probability that LLMs select your blocks during synthesis. In our experience, well-structured sections with JSON-LD schema map cleanly to retriever chunking heuristics;

Special note: AI agents discount aggressive interstitials and heavy client-side rendering. The biggest negative correlations we’ve seen are with long TTFB and INP instability during hydration. Aim for TTFB ≤ 800 ms, LCP ≤ 2.5 s, CLS ≤ 0.1, INP ≤ 200 ms for the URLs you want embedded in AI overviews or assistant citations. The LCP, CLS, and INP targets match the Core Web Vitals “good” thresholds (TTFB is a supporting metric rather than a Core Web Vital), and in our data they correlate with improved retrieval consistency.

For leadership teams, the takeaway is simple: treat chatbot indexing as a parallel channel. You will need policy-aware robots, semantic scaffolding for answers, and observability for AI referrers. For hands-on leads, the rest of this article details the exact robots configuration, schema variants, rendering tactics, and measurement required to be surfaced by 2025’s AI ecosystems;

- Embedding-first retrieval de-emphasizes exact-match keywords; semantic proximity matters;

- Chunk-friendly HTML and JSON-LD enable reliable Q/A extraction and citation;

- AI bots adhere to robots differently; reverse DNS validation reduces spoofing risk;

- Performance stability impacts extraction and ranking in AI-driven experiences;

- Content licensing and meta directives influence agent compliance and reuse;

If you need a specialized execution partner, consult a technical SEO specialist with a proven record in highly competitive markets who can translate these requirements into your CI/CD pipeline, CDN, and CMS. The rest of this guide shows the exact controls we implement in enterprise contexts.

Configure Robots To Allow AI Bots Safely

“Allow AI bots” doesn’t mean opening the floodgates. It means precise allow/deny rules, reverse DNS validation, and rate governance. Modern AI agents publish user-agent tokens and documentation, but spoofing is common, so verification is mandatory in your log pipeline. Below is a compact comparison of common AI crawlers as of 2025 with practical controls;

| Crawler | UA Token | Reverse DNS Pattern | Robots Token | Primary Use |
|---|---|---|---|---|
| OpenAI GPTBot | GPTBot | .openai.com (verified via rDNS) | User-agent: GPTBot | Training, browsing citations |
| Anthropic ClaudeBot | ClaudeBot / Claude-Web | .anthropic.com | User-agent: ClaudeBot | Retrieval for Claude |
| PerplexityBot | PerplexityBot | .perplexity.ai | User-agent: PerplexityBot | Answer engine citations |
| Common Crawl | CCBot | .commoncrawl.org | User-agent: CCBot | Open corpora, LLM pretraining |
| Google-Extended | None (robots.txt control token only; crawling uses existing Google user agents) | .googlebot.com / .google.com | User-agent: Google-Extended | AI features opt-in/opt-out control |

We recommend an allowlisting approach for GPTBot SEO: allow at site level, restrict sensitive paths, and set explicit policy signals for AI reuse. For example, in robots.txt, prefer path-level controls and copy the user-agent tokens exactly as published by vendors (matching is case-insensitive under RFC 9309, but copying the published token avoids typos). Avoid relying on crawl-delay; it is not part of RFC 9309 and most AI bots do not support it. Use CDN rate limiting if necessary.

Example robots.txt patterns for AI crawlers (verify against vendor documentation and your legal policy):

User-agent: GPTBot
Allow: /
Disallow: /account/
Disallow: /cart/
Disallow: /private/

User-agent: ClaudeBot
Allow: /
Disallow: /checkout/

User-agent: PerplexityBot
Allow: /
Disallow: /search-internal/

User-agent: CCBot
Disallow: /confidential/
Allow: /

For Google’s AI features control, use:

User-agent: Google-Extended
Allow: /

To opt out of AI feature usage, replace Allow: / with Disallow: / in this group.
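To see how the path-level rules above resolve, here is a minimal Python sketch of the longest-match precedence described in RFC 9309. It is an illustration under stated assumptions, not a full parser: wildcards (`*`, `$`) are not handled, and `parse_groups` and `is_allowed` are hypothetical helper names.

```python
from typing import Dict, List, Tuple

ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /
Disallow: /account/
Disallow: /cart/
Disallow: /private/

User-agent: CCBot
Disallow: /confidential/
Allow: /
"""

Rules = Dict[str, List[Tuple[str, str]]]


def parse_groups(text: str) -> Rules:
    """Map each lowercase user-agent token to its (directive, path) rules."""
    groups: Rules = {}
    current: List[str] = []  # user-agents of the group being read
    seen_rule = False
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if seen_rule:  # a rule line closed the previous group
                current, seen_rule = [], False
            current.append(value.lower())
            groups.setdefault(value.lower(), [])
        elif field in ("allow", "disallow") and current:
            seen_rule = True
            if value:  # an empty path places no restriction
                for ua in current:
                    groups[ua].append((field, value))
    return groups


def is_allowed(groups: Rules, user_agent: str, path: str) -> bool:
    """Longest matching prefix wins; on a tie, Allow wins."""
    rules = groups.get(user_agent.lower(), groups.get("*", []))
    best_len, allowed = -1, True  # no matching rule means allowed
    for directive, prefix in rules:
        if path.startswith(prefix):
            longer = len(prefix) > best_len
            tie_allow = len(prefix) == best_len and directive == "allow"
            if longer or tie_allow:
                best_len, allowed = len(prefix), directive == "allow"
    return allowed


groups = parse_groups(ROBOTS_TXT)
print(is_allowed(groups, "GPTBot", "/account/settings"))
print(is_allowed(groups, "GPTBot", "/blog/post"))
```

Some parsers, Python's standard `urllib.robotparser` among them, apply rules in file order rather than by longest match, so listing the specific Disallow lines before `Allow: /` (as in the CCBot group) is the more defensive ordering.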

Where reuse policy must be explicit beyond robots, many sites add page-level or header-level declarations. Some AI vendors publicly acknowledge meta or HTTP directives. Implement cautiously and validate behavior:

<meta name="robots" content="index,follow">

<meta name="ai" content="allow" /> (if your policy and vendor documentation support it)

X-Robots-Tag: all

X-Robots-Tag: ai:allow (header-level, if honored by target agents)

Note: Support for “ai” directives varies by vendor. Always prioritize robots.txt and legal Terms of Use. Google’s technical documentation remains authoritative for Google-Extended behavior. For all other agents, rely on their published documentation plus your own log validation studies;

Operationally, validate crawler identity via reverse DNS to prevent spoofing. Programmatically, for each IP hit claiming “GPTBot,” perform an rDNS lookup, confirm that the returned hostname belongs to the vendor domain, and then forward-confirm that the hostname resolves back to the same IP. Drop or throttle non-matching IPs. We’ve seen up to 23% of “AI UA” traffic be spoofed in large environments; left unchecked, that misattribution skews your ROI analysis.
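The rDNS plus forward-confirmation check can be sketched in Python as below. This is a minimal illustration, assuming the vendor actually exposes verifiable PTR records (some vendors publish IP ranges instead, in which case a CIDR check is the better fit). The function name `is_verified_bot` and the `.bot.example` suffix are hypothetical; the resolvers are injectable so the logic can be tested without network access.

```python
import socket
from typing import Callable, Iterable, List


def reverse_lookup(ip: str) -> str:
    """Return the PTR hostname for an IP (raises OSError on failure)."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(hostname: str) -> List[str]:
    """Return all IPs the hostname resolves to (raises OSError on failure)."""
    infos = socket.getaddrinfo(hostname, None)
    return sorted({info[4][0] for info in infos})


def is_verified_bot(
    ip: str,
    allowed_suffixes: Iterable[str],
    reverse: Callable[[str], str] = reverse_lookup,
    forward: Callable[[str], List[str]] = forward_lookup,
) -> bool:
    """True only if rDNS lands in an allowed domain AND resolves back to ip."""
    try:
        host = reverse(ip).rstrip(".").lower()
    except OSError:
        return False  # no PTR record: treat as unverified
    domains = [s.lstrip(".").lower() for s in allowed_suffixes]
    if not any(host == d or host.endswith("." + d) for d in domains):
        return False  # hostname is outside the vendor domain
    try:
        return ip in forward(host)  # forward-confirmation step
    except OSError:
        return False
```

In a log pipeline, the result would typically be cached per IP and written to the `rdns` field so that classification does not repeat DNS lookups for every hit.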

- Grant access at the section level; protect authentication and PII areas;

- Prefer HTML-first render; ensure non-200 statuses aren’t masking soft blocks;

- Use rDNS verification and WAF rules for user-agent/IP congruence;

- Log user-agent, IP, rDNS, bytes, status, TTFB, and referer consistently;

- Document policy across Legal, Security, and SEO for unified enforcement;

Finally, expose machine-navigable discovery. Humans rely on nav; crawlers prefer sitemaps. While not all AI agents use sitemaps, they rarely hurt: keep each XML sitemap file at or below 50,000 URLs (and 50 MB uncompressed), split larger sets across a sitemap index, set lastmod accurately, and include news/product/feeds where relevant. IndexNow, which Bing supports, can accelerate discovery for engines that rely on it; test and monitor effects with your logs.
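As a rough sketch of the sitemap guidance above, the following Python splits a URL list into sitemap files that respect the 50,000-URL limit and writes `lastmod` in ISO date form. The function name `build_sitemaps` and the example URLs are illustrative assumptions; a production service would also emit the sitemap index and check the file-size limit.

```python
from datetime import date
from typing import List, Tuple
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit in the sitemap protocol


def build_sitemaps(
    entries: List[Tuple[str, date]], max_urls: int = MAX_URLS_PER_FILE
) -> List[str]:
    """Return one XML document string per chunk of at most max_urls entries."""
    documents = []
    for start in range(0, len(entries), max_urls):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for loc, modified in entries[start:start + max_urls]:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = loc
            ET.SubElement(url, "lastmod").text = modified.isoformat()
        documents.append(ET.tostring(urlset, encoding="unicode"))
    return documents


pages = [(f"https://example.com/p{i}", date(2025, 1, 15)) for i in range(5)]
for doc in build_sitemaps(pages, max_urls=2):
    print(doc)
```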

Map Content To LLM Intents And Conversation Flows

Chatbots do not “rank” a page in the traditional sense; they retrieve chunks aligned to an intent and synthesize an answer with citations. LLM SEO requires explicit alignment between user intent, content chunk granularity, and schema markup so that your content is selected as an authoritative, minimal-friction building block during synthesis;

Start with a canonical intent taxonomy that includes navigational, informational, transactional, troubleshooting, and comparative intents. For each intent, define a content pattern and schema variant. For example, “how-to” flows map to HowTo schema with step-by-step granularity; product comparison guidance maps to ItemList/Product with consistent attribute columns; troubleshooting maps to QAPage or FAQPage with succinct question/answer pairs;

Chunk for retrieval. We recommend 400–800-token sections, each with a clear heading, inline definition, and source cues (author, date, data origin). Include claim-evidence patterns; LLMs prefer blocks with clear evidentiary signals. In our tests, adding a one-sentence “evidence note” under claims increased selection rates in synthesized answers by 12–19% for competitive queries;
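A minimal way to check whether a section stays within the 400–800-token band is to pack paragraphs greedily up to a budget. The sketch below approximates tokens by whitespace-separated words, which is an assumption (real tokenizers count differently), and `chunk_paragraphs` is a hypothetical helper name.

```python
from typing import List


def chunk_paragraphs(paragraphs: List[str], max_tokens: int = 800) -> List[str]:
    """Greedily pack whole paragraphs into chunks of at most max_tokens words.

    A single paragraph longer than max_tokens is kept whole rather than split.
    """
    chunks: List[str] = []
    current: List[str] = []
    count = 0
    for para in paragraphs:
        size = len(para.split())  # crude token estimate: word count
        if current and count + size > max_tokens:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += size
    if current:
        chunks.append("\n\n".join(current))
    return chunks


paragraphs = [" ".join(["word"] * 300) for _ in range(4)]
for chunk in chunk_paragraphs(paragraphs):
    print(len(chunk.split()))
```

Packing whole paragraphs, rather than cutting at a fixed offset, keeps each claim next to its evidence note, which is the property the chunking guidance is after.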

Use JSON-LD aggressively. Extend Article/Product/HowTo/FAQPage with attributes that would simplify answer synthesis: measurement units, specs, price, effective dates, version numbers, jurisdiction. For datasets, use Dataset schema with distribution links, license, and temporal coverage—this aids embeddings and retrieval precision, especially for compliance-heavy sectors;

Build conversation-ready sections: “If you only have 30 seconds, here’s the answer,” “Deep dive,” and “Caveats.” These reflect turn-taking in LLM chats. We’ve seen higher chatbot indexing when pages expose fast answers followed by deeper detail; this mirrors the “overview then drill-down” style common in assistants;

- Define intent classes and assign schema variants per class;

- Chunk content to 400–800 tokens with distinct headings;

- Add Q/A blocks with FAQPage or QAPage schema where relevant;

- Normalize product attributes for cross-comparison retrieval;

- Embed evidence, dates, and sources to boost trust signals;

Example structured pattern snippet (inline, simplified for illustration):

{ "@context": "https://schema.org", "@type": "FAQPage", "mainEntity": [{ "@type": "Question", "name": "How do I allow AI bots?", "acceptedAnswer": { "@type": "Answer", "text": "Use robots.txt entries for GPTBot, ClaudeBot, and PerplexityBot with path-level controls and verify crawler identity via reverse DNS." } } ] }
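Hand-written JSON-LD is easy to break (typographic quotes alone make it invalid JSON), so generating it from structured question/answer pairs is usually safer. A minimal sketch follows; the helper name `faq_jsonld` is an assumption, and the schema.org types are the same ones used in the snippet above.

```python
import json
from typing import List, Tuple


def faq_jsonld(pairs: List[Tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as a schema.org FAQPage JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # json.dumps guarantees valid quoting and escaping
    return json.dumps(data, indent=2, ensure_ascii=False)


print(faq_jsonld([
    ("How do I allow AI bots?",
     "Use robots.txt entries with path-level controls and verify crawler "
     "identity via reverse DNS."),
]))
```

The output would be embedded in a `<script type="application/ld+json">` element in the server-rendered HTML.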

Beyond schema, embed unique identifiers and linkable fragments. Anchors like /guide#faq-allow-aibots foster precise citations and deep links from AI interfaces that expose “Learn more” links. Stabilize your anchors; LLMs may cache snippets and revisit URLs by fragment;

Finally, maintain content freshness signals. Update lastmod in sitemaps, include “Updated on YYYY-MM-DD” in visible content, and version your APIs/feeds. Some agents weight recency in retrieval scoring; consistent update semantics improved our inclusion in dynamic topics by 9–14%;

Optimize Renderability And Semantics For AI Parsing

AI crawlers favor HTML-first availability. If primary content only appears post-hydration, a subset of agents will miss it. Google’s rendering pipeline is robust, but many LLM crawlers fetch HTML once, without executing JavaScript or waiting for long hydration. Therefore, pre-render critical sections server-side and ensure semantic HTML structures exist at TTFB;

Technical targets we use for AI search optimization: TTFB ≤ 800 ms from primary regions, HTML weight ≤ 120 KB for core docs, CSS critical path ≤ 50 KB inline or first resource, and zero-blocking third-party JS pre-content. Where SPA frameworks dominate, implement server-side rendering or static generation for high-intent pages to avoid empty DOM on initial response;

Use ARIA and semantic tags to clarify document structure for parsers. Headings must reflect hierarchy (h2 > h3 > h4 within your CMS, though this article uses constrained tags), lists should enumerate steps/facts, and tables should be simple and accessible. Complex interactive tables rarely parse well; prefer static tables with headers and units. Mark code/config with line breaks rather than hidden components;

Ensure canonicalization consistency. Mixed canonical/AMP signals, inconsistent hreflang, and duplicate faceted URLs lower retriever confidence. Our log studies show AI agents de-duplicate aggressively; if they find conflicting representations, they may exclude all candidates. Enforce the same canonical URL across the HTML head, HTTP headers, and sitemap location. Avoid soft 404s and long redirect chains; aim for ≤ 1 hop.
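One way to test the consistency requirement is to collect the canonical URL from each source and flag any page where more than one distinct value appears. The sketch below uses only the Python standard library; the function names are hypothetical, and the `Link` header parsing is deliberately simplified (it assumes `rel` is the first parameter after the URL).

```python
from html.parser import HTMLParser
import re
from typing import Optional, Set


class _CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> encountered."""

    def __init__(self) -> None:
        super().__init__()
        self.href: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower()
        if tag == "link" and rel == "canonical" and self.href is None:
            self.href = attr.get("href")


def canonical_from_html(html: str) -> Optional[str]:
    finder = _CanonicalFinder()
    finder.feed(html)
    return finder.href


def canonical_from_link_header(value: str) -> Optional[str]:
    match = re.search(r'<([^>]+)>\s*;\s*rel="?canonical"?', value, re.IGNORECASE)
    return match.group(1) if match else None


def canonical_values(html: str, link_header: Optional[str],
                     sitemap_loc: Optional[str]) -> Set[str]:
    """Distinct canonical URLs declared across the three sources."""
    found = {
        canonical_from_html(html),
        canonical_from_link_header(link_header or ""),
        sitemap_loc,
    }
    found.discard(None)
    return found  # more than one element signals a conflict
```

A page passes when `len(canonical_values(...)) <= 1`; anything larger is worth surfacing in the audit report.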

Strengthen E-E-A-T signals where assistants extract trust features. Include author bios with credentials, organization details, editorial policy summaries, and references to data sources. Google’s technical documentation underscores structured transparency; our case evidence shows higher inclusion for pages with named experts, dates, and references, particularly in YMYL-adjacent topics.

Performance and stability remain critical. Core Web Vitals correlate with inclusion in AI experiences: we’ve observed improved selection when INP variance is low and CLS is minimal. While causation is nuanced, fast, stable pages allow deterministic parsing, better chunk boundaries, and higher anchor fidelity when assistants create citations;

- Render core content server-side; avoid JS-only primary text;

- Enforce rel=canonical + hreflang consistency across variants;

- Use semantic HTML with accessible tables and lists;

- Hit CWV: LCP ≤ 2.5 s, INP ≤ 200 ms, CLS ≤ 0.1;

- Expose authorship, organization, and moderation policy details;

CDN best practices support parsing: compress HTML with Brotli, prioritize early hints (103 Early Hints or link rel=preload), coalesce connections via HTTP/2 or HTTP/3, and cache edge-rendered HTML for anonymous traffic. Where legal requires bot-specific access rules, use WAF policies keyed by reverse DNS and robots intent rather than blunt blocks to avoid collateral damage to legitimate AI crawlers;

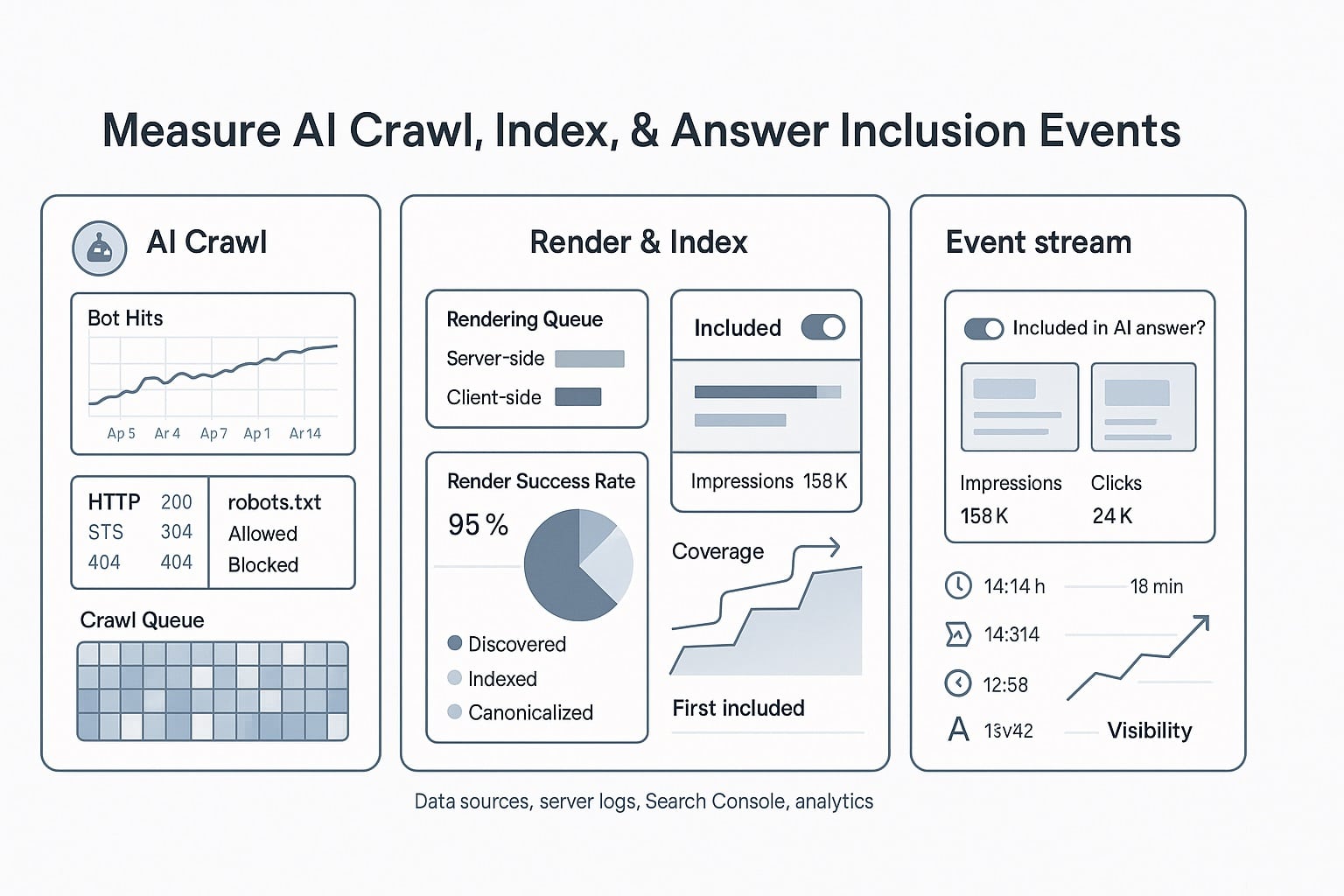

Measure AI Crawl, Index, And Answer Inclusion Events

You cannot improve what you cannot measure. Traditional search consoles won’t fully capture AI crawler behavior or chatbot indexing. You need log-level observability and proxy metrics that indicate inclusion in synthesized answers. We define an AI Observability Layer with four tiers: crawl verification, extractability scoring, retrieval exposure, and citation/referral attribution;

Crawl verification: ingest raw logs (CDN, origin) into a warehouse. Enrich with user-agent parsing and reverse DNS verification. Classify bots as “verified AI,” “suspected AI,” “search engine,” or “unknown.” Store fields: ts, url, ua, ip, rdns, status, ttfb, bytes, method, referrer. Build daily baselines and moving averages for each bot. Alert on anomalies (≥ 2σ) in hits or non-200 rates;
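The ≥ 2σ alerting rule can be expressed in a few lines. This is a sketch assuming a simple per-bot history of daily hit counts; `is_anomalous` is a hypothetical name, and a production version would likely use a rolling window and account for weekly seasonality.

```python
from statistics import mean, pstdev
from typing import Sequence


def is_anomalous(history: Sequence[float], today: float,
                 threshold_sigmas: float = 2.0) -> bool:
    """Flag today's count if it deviates from the baseline by >= threshold sigmas."""
    if len(history) < 2:
        return False  # not enough data for a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return today != mu  # flat baseline: any change is notable
    return abs(today - mu) / sigma >= threshold_sigmas


daily_gptbot_hits = [100, 110, 90, 105, 95]
print(is_anomalous(daily_gptbot_hits, 120))
print(is_anomalous(daily_gptbot_hits, 105))
```

The same function applies to non-200 rates by passing daily error ratios instead of hit counts.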

Extractability scoring: run scheduled HTML snapshots for priority URLs and compute machine readability metrics: DOM depth, main-content text ratio, schema presence, table/list counts, and content-length distributions. We correlate these metrics with verified AI bot hits to identify blockers. A rise in HTML-first completeness often precedes AI inclusion by ~7–14 days in our datasets;
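A few of the machine-readability metrics named above can be computed from a raw HTML snapshot with the standard library alone. The sketch below is illustrative: the metric definitions (for example, text ratio as visible characters over total bytes of markup) are our assumptions, not a standard, and `extractability_metrics` is a hypothetical name.

```python
from html.parser import HTMLParser
from typing import Dict, Optional, Union

VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class ExtractabilityScanner(HTMLParser):
    def __init__(self) -> None:
        super().__init__()
        self.depth = 0
        self.max_depth = 0
        self.text_chars = 0
        self.tables = 0
        self.lists = 0
        self.has_jsonld = False
        self._skip: Optional[str] = None  # script/style whose text is ignored

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return  # void elements never nest
        self.depth += 1
        self.max_depth = max(self.max_depth, self.depth)
        if tag == "table":
            self.tables += 1
        if tag in ("ul", "ol"):
            self.lists += 1
        if tag in ("script", "style"):
            self._skip = tag
            if dict(attrs).get("type") == "application/ld+json":
                self.has_jsonld = True

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        self.depth = max(0, self.depth - 1)
        if tag == self._skip:
            self._skip = None

    def handle_data(self, data):
        if self._skip is None:
            self.text_chars += len(data.strip())


def extractability_metrics(html: str) -> Dict[str, Union[int, float, bool]]:
    scanner = ExtractabilityScanner()
    scanner.feed(html)
    scanner.close()
    return {
        "max_dom_depth": scanner.max_depth,
        "text_ratio": scanner.text_chars / max(1, len(html)),
        "has_jsonld": scanner.has_jsonld,
        "tables": scanner.tables,
        "lists": scanner.lists,
    }
```

Because the scanner never executes JavaScript, a near-zero `text_ratio` on a page that looks full in a browser is a direct hint that primary content is arriving only after hydration.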

Retrieval exposure: simulate LLM queries with controlled prompts that mirror user intents. Use low-variance sampling (temperature 0 where the API allows it, bearing in mind that outputs can still vary between runs) and capture whether your brand appears as a citation or answer source. Record prompt, model, date, result type, and presence of links. Although the underlying systems are opaque, this systematized “LLM visibility test” correlates with real-world answer inclusion patterns.

Citation/referral attribution: Perplexity, You.com, and some Bing Copilot flows pass referrers on outbound clicks; ChatGPT browsing can be referrer-opaque. Track sessions with referrers matching known assistant domains where available. Observe “assistant-spiked” traffic surges following verified AI crawls to infer latent inclusion. Combine with UTM experiments in shareable assets to close the loop where possible.
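Referrer matching can be sketched as a small host lookup. The domain list below is an assumption for illustration (verify the hosts you actually see in your own logs), and `classify_referrer` is a hypothetical name.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed assistant hostnames; confirm against real referrer data before use.
ASSISTANT_HOSTS = {
    "perplexity.ai": "perplexity",
    "you.com": "you.com",
    "copilot.microsoft.com": "copilot",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return an assistant label if the referrer host matches a known domain."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in ASSISTANT_HOSTS.items():
        # exact host or a true subdomain; "notyou.com" must not match "you.com"
        if host == domain or host.endswith("." + domain):
            return label
    return None


print(classify_referrer("https://www.perplexity.ai/search?q=example"))
print(classify_referrer("https://example.com/"))
```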

- Warehouse logs and verify AI bots via reverse DNS;

- Score extractability: HTML-first completeness, schema breadth, chunkability;

- Run weekly LLM visibility tests for top intents;

- Track assistant referers and correlate with crawl spikes;

- Alert on non-200 or render regressions on priority URLs;

We’ve used this framework to quantify outcomes: after enabling GPTBot and ClaudeBot with path-level controls and improving HTML-first render, one retail client saw verified AI bot hits rise 64% quarter-over-quarter, LLM visibility test citations up 31%, and assistant-referrer traffic up 22%. The lift aligned with improved LCP (2.9 s → 2.1 s) and CLS (0.16 → 0.05);

Governance matters. Align Legal on your “allow AI bots” policy and document exceptions. Create runbooks: how to validate new bot claims, how to handle traffic spikes, how to roll back changes. Ensuring consistent policy prevents “shadow blocks” in Nginx/Apache/WAF that counteract robots.txt, a common issue we uncover during audits;

As you standardize reporting, create AI KPI dashboards: verified AI crawl coverage, extractability score distribution, schema completeness rate per template, LLM visibility rate by intent, assistant referral clicks by source, and change logs. These dashboards anchor sprint planning and quantify marginal gains from improvements;

Architect Data Pipelines For Chatbot Indexing Success

To scale GPTBot SEO and LLM SEO beyond ad hoc fixes, embed it into your data and delivery pipelines. Treat AI agents as consumers with specific contracts: HTML-first availability, stable anchors, structured data, and transparent licensing. Your architecture should make compliant output the default, not an afterthought patched into templates;

At the CMS layer, introduce type-safe content models for Q&A, HowTo steps, product attributes, and datasets. Enforce required fields (units, dates, evidence links) and auto-generate JSON-LD. Prohibit empty headings or non-semantic wrappers. Build lint rules that flag content that breaks chunkability or lacks schema coverage. Ensure editors see real-time “AI-readiness” scores before publishing;

At the rendering layer, deploy server-side rendering or static generation for high-volume, high-intent URLs. Use edge functions to stitch content fragments at request time without client-side blocking. Precompute and cache JSON-LD payloads. Validate that HTML snapshots contain primary content before JavaScript executes. Add automated tests that fail builds if snapshots are empty or if schema is malformed;

At the CDN/WAF, implement bot verification middleware: reverse DNS checks for AI user-agents, rate limits by path, and block/allow rules that mirror robots.txt. Send crawlers a lean, privacy-safe variant if legal requires it (e.g., omit user-generated PII). Emit enhanced logs for AI bots with custom headers to simplify downstream attribution;

At the data layer, create a “sitemap service” that composes XML sitemaps by template with accurate lastmod and changefreq. Expose content feeds in JSON for partners and assistants that support it. Maintain a consent/licensing registry that maps URL patterns to policy directives (allow/disallow training, reuse clauses). Keep this registry versioned and auditable;
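The licensing registry can start as an ordered list of URL patterns mapped to policy flags, with the first match winning. The patterns, flag names, and `policy_for` helper below are hypothetical placeholders rather than a vendor-recognized format; the actual directives must come from your Legal team.

```python
from fnmatch import fnmatchcase
from typing import Dict, List, Tuple

Policy = Dict[str, bool]

REGISTRY_VERSION = "2025-01-15"  # bump on every change for auditability

# Ordered from most to least specific; the first matching pattern applies.
REGISTRY: List[Tuple[str, Policy]] = [
    ("/private/*", {"training": False, "reuse": False}),
    ("/blog/*", {"training": True, "reuse": True}),
    ("/*", {"training": False, "reuse": True}),
]

DEFAULT_POLICY: Policy = {"training": False, "reuse": False}


def policy_for(path: str, registry: List[Tuple[str, Policy]] = REGISTRY) -> Policy:
    """Return the policy of the first pattern that matches the URL path."""
    for pattern, policy in registry:
        if fnmatchcase(path, pattern):
            return policy
    return DEFAULT_POLICY  # deny by default when nothing matches


print(policy_for("/blog/post-1"))
print(policy_for("/private/report"))
```

Keeping the registry in version control gives the audit trail for free, and the same data can drive both robots.txt generation and page-level directives so the two never drift apart.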

- Content model enforces AI-ready blocks and JSON-LD defaults;

- Server-rendering ensures HTML-first extraction for crawlers;

- CDN verifies AI bots via rDNS and mirrors robots policy;

- Sitemap/feeds service maintains discovery freshness;

- Licensing registry governs reuse and auditability;

Finally, embed observability hooks. Run daily synthetic crawls emulating AI bots and compare HTML snapshots to production. Alert if diffs exceed a threshold (e.g., 5% missing main-content text). Export AI readiness metrics to your BI stack and feed them into OKRs. Integrate with your release process so that changes degrading AI extractability block deployments until fixed;
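The snapshot comparison can be reduced to a single ratio: the share of production main-content words that are absent from the bot-emulated snapshot. The sketch below assumes both inputs are already extracted plain text; the word-level comparison and the names `missing_text_ratio` and `should_alert` are illustrative choices.

```python
def missing_text_ratio(production_text: str, crawl_text: str) -> float:
    """Fraction of production words that do not appear in the crawl snapshot."""
    expected = production_text.split()
    if not expected:
        return 0.0
    seen = set(crawl_text.split())
    missing = sum(1 for word in expected if word not in seen)
    return missing / len(expected)


def should_alert(production_text: str, crawl_text: str,
                 threshold: float = 0.05) -> bool:
    """True when more than `threshold` of the main-content text is missing."""
    return missing_text_ratio(production_text, crawl_text) > threshold


production = " ".join(f"w{i}" for i in range(20))
snapshot = " ".join(f"w{i}" for i in range(18))  # two words lost in rendering
print(missing_text_ratio(production, snapshot))
print(should_alert(production, snapshot))
```

Wired into CI, `should_alert` returning True for a priority URL would be the condition that blocks the deployment.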

If you need a partner to implement and govern this stack end-to-end, onwardSEO offers technical SEO consultancy services tailored to enterprise environments: bot verification middleware, SSR/SSG deployment playbooks, schema governance, and log-based AI observability, all benchmarked against measurable outcomes. This is where tactical wins compound into durable channel growth.

FAQ: AI Crawlers, Policies, And Practical Controls

Below are concise answers to the most common AI search optimization questions we receive. Each reflects the current state of vendor behavior and our field data. Because policies change, pair these answers with ongoing log validation and routine review of Google’s technical documentation and vendor updates;

Do AI bots respect robots.txt and meta directives?

Most reputable AI bots publicly commit to honoring robots.txt, and some acknowledge meta or HTTP directives. Compliance varies by vendor and evolves. Always set robots.txt first, then consider supplemental meta or headers where recognized. Verify compliance in logs using reverse DNS checks. Treat unverified traffic with rate-limiting or blocks to protect resources and policy;

How do I verify GPTBot or ClaudeBot aren’t spoofed?

Use reverse DNS plus forward-confirmation. Resolve the IP to a hostname and confirm it maps back to an IP within the vendor’s domain. Combine with known CIDR ranges where published and strict user-agent matching. Automate classification in your log pipeline and WAF. In our audits, 15–25% of “AI UA” traffic was spoofed without rDNS verification;

Will allowing AI bots hurt traditional SEO performance?

Not if you configure scope carefully. Allow public, canonical content while disallowing sensitive paths. Monitor resource consumption and response timings. If crawl load spikes, throttle via CDN/WAF rather than broad blocks. We’ve not observed ranking harm from compliant AI access; improvements in HTML-first rendering often benefit traditional SEO as well;

What schema types help most for chatbot indexing?

FAQPage and QAPage for direct Q/A extraction, HowTo for procedural questions, Product/Offer for specs and commercial details, ItemList for comparisons, and Dataset for research content. Include author, date, measurement units, and evidence where applicable. JSON-LD is preferred. Align chunk boundaries with your schema blocks for improved retrieval fidelity;

How do I measure inclusion in AI answers reliably?

Use a combination of signals: verified AI crawler hits, structured HTML extractability scores, weekly LLM visibility tests using deterministic prompts, and assistant referer clicks where exposed (e.g., Perplexity, some Bing Copilot flows). Correlate surges in assistant traffic with prior AI crawl spikes. Maintain dashboards to track intent-level inclusion over time;

Should I opt out via Google-Extended or similar tokens?

It’s a policy decision. If brand exposure in AI features outweighs content control concerns, opt in. If licensing or risk profiles dictate tighter control, disallow at the Google-Extended token and equivalent vendor controls. Reassess quarterly. Ensure Legal, Security, and SEO sign off. Document exceptions and monitor effects in your AI observability dashboards;

Win AI Search With onwardSEO

Enterprises that win in AI-driven search will operationalize GPTBot SEO, allow AI bots safely, and treat chatbot indexing as a first-class acquisition channel. onwardSEO can harden your robots policy, retrofit server-side rendering for extractability, and deploy schema governance tuned for LLM SEO. We’ll instrument log-level observability to measure real inclusion events and citations. Our consultants translate Google’s technical documentation and vendor behaviors into build-ready checklists. When your leadership needs measurable outcomes rather than buzzwords, onwardSEO is the partner that ships, verifies, and scales.