How SEO Works From Query to Customer

Non‑technical teams often see search engine optimization as a mysterious black box, yet the mechanics are testable and repeatable. If you understand how queries become impressions and impressions become customers, you can design a content SEO strategy and technical stack that predictably improves Google rankings. For a fast, practical ramp, start here: Google SEO rankings.

This walkthrough reverse‑engineers what actually happens inside crawling and indexing, how Google evaluates commercial user intent, and where measurable improvements move the needle. If you need expert execution once you grasp the model, our team built packaged systems designed for outcomes, not activities: Google top rankings services.

Why rankings track measurable signals, not outdated marketing myths

Google’s systems are not guessing. They evaluate documents and entities using observable signals that correlate with helpfulness and satisfaction. Across documented case results and Google’s technical documentation, the controllable drivers we repeatedly verify include: renderable content available to the crawler, efficient server responses, intent‑matching information architecture, helpful content signals (depth, originality, sources), and link‑based authority from relevant contexts. None of this is speculative.

In practice, the ranking model looks probabilistic but behaves predictably at scale. When we reduce server TTFB from 900 ms to 200 ms, eliminate non‑indexable JS render dependencies, and align page topics with query clusters and commercial user intent, we consistently see lifts in impressions, clicks, and assisted conversions. This is because Google can fetch, parse, understand, and trust your content faster, and users complete tasks more often.

The uncomfortable truth for many organizations is that SEO wins happen before an article is published: they occur in the architecture, rendering behavior, and data models. That’s why we balance on‑page craft with crawl budget optimization, Core Web Vitals, schema markup variations, and robust internal linking. Attribution will remain fuzzy—organic often impacts brand search and direct traffic—but ROI can still be modeled credibly; see why SEO ROI defies simple attribution modelling for an owner‑level approach grounded in search demand and conversion rates.

From query to crawl: the discovery pipeline that powers modern Google rankings

Every purchase journey begins with a question. The search system’s job is to discover and evaluate candidate answers. Discovery relies on URLs being discoverable, retrievable, and worthwhile to revisit. When this pipeline is frictionless, more of your content gets a chance to rank; when it’s clogged, you fall out of the race before evaluation begins.

- URL discovery: sitemaps, internal links, canonical hints, and external links expose new or updated URLs; orphaned pages simply aren’t seen reliably.

- Crawl scheduling: Googlebot prioritizes hosts and paths with historically valuable content and good server health; “crawl budget” is finite per site and fluctuates.

- HTTP fetch: status codes, redirects, and cache headers determine how quickly Googlebot can retrieve and reuse resources; 200s and clean 304s win.

- Content retrieval: robots.txt and robots meta directives can block or allow; poorly scoped disallows can silently drop entire sections from discovery.

- Deduplication: canonical signals and content similarity affect which URL becomes the representative in search results.

From server logs, we expect a healthy enterprise site to show: stable Googlebot crawl patterns, low 404/5xx rates (

Technically, discovery success hinges on how fast Google can explore your graph. Internal link depth to revenue pages should be ≤3 clicks from the homepage or primary hubs. Contextual links between semantically adjacent assets help Google explore topic clusters, which informs which queries each document is likely to satisfy.
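
The click-depth rule above can be checked with a breadth-first search over a crawl export. The following is a minimal sketch, assuming the internal link graph is available as a dictionary of page to linked pages; the URLs and the `click_depths` helper are hypothetical, not part of any specific crawler.

```python
from collections import deque

def click_depths(links, start):
    """Breadth-first search: minimum number of clicks from `start` to each URL."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal link graph: page -> pages it links to.
links = {
    "/": ["/crm", "/blog"],
    "/crm": ["/crm/pricing"],
    "/blog": ["/blog/crm-guide"],
    "/blog/crm-guide": ["/blog/crm-guide/part-2"],
    "/blog/crm-guide/part-2": ["/crm/enterprise"],
}
# All known URLs, including one page that nothing links to (an orphan).
all_pages = set(links) | {t for targets in links.values() for t in targets}
all_pages.add("/crm/legacy-plan")

depths = click_depths(links, "/")
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
orphans = sorted(all_pages - set(depths))

print(too_deep)  # ['/crm/enterprise']
print(orphans)   # ['/crm/legacy-plan']
```

Pages in `too_deep` are candidates for a link from a hub, and pages in `orphans` are never reached from the homepage at all.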

Indexing and rendering: how pages earn eligibility for Google rankings at scale

Indexing is not guaranteed. It is a decision based on usefulness, uniqueness, and technical renderability. If critical content is only visible post‑render through client‑side JavaScript, Google may delay rendering or decide the incremental value is too low to warrant it—especially at scale. Indexing success depends on serving a clean, crawlable HTML baseline with minimal blocking conditions.

- Serve primary content server‑side: titles, headings, body copy, internal links, and structured data should be in the initial HTML, not gated by JS.

- Return stable 200s: avoid user‑dependent content negotiation causing sporadic 403/404; use the Vary header responsibly for language or device.

- Eliminate infinite spaces: crawl traps like faceted combinations or calendar pages explode URL counts; control with robots.txt, noindex, and canonicalization.

- Consolidate duplicates: self‑referencing canonicals, clean hreflang alternates, and one canonical URL per content concept reduce index bloat.

- Render safety: if hydration is required, ensure SSR + hydration or hybrid rendering so content is visible without scripting.
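
One way to test the checklist above is to inspect the raw HTML response before any JavaScript runs. This sketch uses Python's standard `html.parser`; the two sample documents and the `missing_from_baseline` helper are illustrative assumptions, and a real audit would fetch the HTML exactly as a crawler receives it.

```python
from html.parser import HTMLParser

class BaselineAudit(HTMLParser):
    """Collects elements that should exist in the HTML before scripts run."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.h1_count = 0
        self.links = []
        self.has_json_ld = False
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self.has_json_ld = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data.strip()

def missing_from_baseline(raw_html):
    """Return the names of primary elements absent from the initial HTML."""
    parser = BaselineAudit()
    parser.feed(raw_html)
    checks = {
        "title": bool(parser.title),
        "h1": parser.h1_count > 0,
        "internal links": len(parser.links) > 0,
        "structured data": parser.has_json_ld,
    }
    return [name for name, present in checks.items() if not present]

# Hypothetical responses: one server-rendered page, one empty JS shell.
ssr_page = (
    '<html><head><title>CRM Pricing</title>'
    '<script type="application/ld+json">{"@type": "Product"}</script></head>'
    '<body><h1>CRM Pricing</h1><a href="/crm/features">Features</a></body></html>'
)
js_shell = (
    '<html><head><title></title></head>'
    '<body><div id="root"></div><script src="/app.js"></script></body></html>'
)

print(missing_from_baseline(ssr_page))  # []
print(missing_from_baseline(js_shell))  # ['title', 'h1', 'internal links', 'structured data']
```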

Rendering behavior matters. Google crawls the initial HTML first and queues JavaScript rendering as a separate step, so websites relying on runtime JS for core content can see delayed indexing and weaker recrawl prioritization. Documented case results show that moving critical content and links to the HTML baseline increases indexation rate by 10–35% for large catalogs and reduces time‑to‑first‑rank by days to weeks.

Structured data improves eligibility and display but does not compensate for thin content. Use schema markup variations to explicitly define entities and relationships: Product, Review, Organization, BreadcrumbList, FAQPage, and HowTo where they genuinely apply. Google’s documentation emphasizes correctness and consistency with on‑page content; invalid or spammy markup can be ignored.
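
To illustrate the consistency requirement, here is a small sketch that builds Product markup and checks it against the visible page copy. The product name, price, URL, and both helper functions are made up for the example; the substring check is a deliberately crude stand-in for a proper validator.

```python
import json

def product_json_ld(name, description, price, currency, url):
    """Build a schema.org Product snippet as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }
    return json.dumps(data, indent=2)

def matches_visible_content(json_ld, visible_text):
    """Crude check: marked-up name and price must also appear on the page."""
    data = json.loads(json_ld)
    return data["name"] in visible_text and data["offers"]["price"] in visible_text

# Hypothetical product and page copy.
markup = product_json_ld(
    name="Starter CRM Plan",
    description="CRM for teams of up to ten users.",
    price=29.0,
    currency="USD",
    url="https://example.com/crm/starter",
)

print(matches_visible_content(markup, "Starter CRM Plan: $29.00 per user per month"))  # True
print(matches_visible_content(markup, "Starter CRM Plan: $39.00 per user per month"))  # False
```

The second call fails because the marked-up price no longer matches the page, which is the kind of mismatch that can lead to markup being ignored.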

Once a URL is indexable, the ranking systems estimate whether it satisfies the intent behind a query. This includes the lexical meaning (keywords), the task pattern (informational, navigational, transactional, local), and the context: location, device, history, and query refinements. Your content must match the dominant intent on the results page and provide a better outcome than alternatives.

- Relevance: topical coverage, explicit answers, terminology alignment, and internal anchor context inform which queries a page can credibly rank for.

- Utility: task completion cues—clear CTAs, calculators, pricing clarity, comparison tables, and downloadable assets—indicate the page helps users act.

- Experience: Core Web Vitals, safe browsing, mobile usability, and ad density affect satisfaction and re‑visit probability.

- Authority: high‑quality links, brand mentions, and entity corroboration across reputable sources validate credibility.

- EEAT signals: experience (demonstrated use), expertise (author credentials), authoritativeness (citations, references), trust (policies, transparency).

Commercial user intent is pivotal. For “best CRM for startups,” the SERP usually blends comparison content, brand pages, and editorial reviews. An optimized page here includes a transparent methodology, data tables, user quotes, and pricing clarity, plus schema to structure the information. For “buy CRM plan,” the SERP shifts transactional—landing pages with clear packages, risk‑free trials, and payment options win.

Algorithmically, ranking correlations show that when we improve intent alignment (measured via SERP content type parity and task completion signals) alongside authority (contextual, non‑reciprocal links), impressions lift first, then clicks, then conversions. Thin listicles with no methodology or proof struggle—even with links—because they miss the usefulness threshold emphasized in Google’s Helpful Content guidance.

Content SEO strategy that maps to real buyer journeys across modern SERPs

Non‑tech teams often leap into writing without defining the journey. A durable content SEO strategy starts with search intent mapping across funnel stages, then designs hubs and spokes that distribute equity and match result types. This approach avoids cannibalization, increases topical coverage, and creates multiple entry points that compound sessions into pipeline.

- Define demand: cluster queries by shared intent using SERP similarity; label as explain, compare, choose, or buy.

- Design hubs: build pillar pages for each core problem, linking to deep dives and tools; ensure these hubs are top‑nav accessible.

- Create spokes: address sub‑tasks—integrations, pricing, setup, industry variants—with unique value and cross‑links.

- Match format: use comparison matrices, checklists, or ROI calculators for commercial queries; narrative tutorials for informational queries.

- Prevent cannibalization: one page per primary intent cluster; use descriptive, unique H1s and tailored internal anchors.
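
The "cluster queries by shared intent using SERP similarity" step can be approximated by comparing which URLs rank for each query. This is a rough sketch under stated assumptions: the queries and URLs are invented, the 0.5 Jaccard threshold is arbitrary, and the greedy grouping is a simplification of real clustering.

```python
def jaccard(a, b):
    """Share of URLs that two result sets have in common."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 0.0

def cluster_by_serp(serps, threshold=0.5):
    """Greedy clustering: a query joins the first cluster whose seed query
    shares at least `threshold` of its ranking URLs, else starts a new one."""
    clusters = []
    for query, urls in serps.items():
        for cluster in clusters:
            if jaccard(urls, serps[cluster[0]]) >= threshold:
                cluster.append(query)
                break
        else:
            clusters.append([query])
    return clusters

# Hypothetical top results per query (normally exported from a rank tracker).
serps = {
    "best crm for startups": ["a.com/best", "b.com/top-crm", "c.com/review", "d.com/compare"],
    "top startup crm tools": ["a.com/best", "b.com/top-crm", "c.com/review", "e.com/list"],
    "buy crm plan": ["x.com/pricing", "y.com/plans", "z.com/checkout", "a.com/pricing"],
    "crm pricing plans": ["x.com/pricing", "y.com/plans", "z.com/checkout", "w.com/pricing"],
}

clusters = cluster_by_serp(serps)
for cluster in clusters:
    print(cluster)
# ['best crm for startups', 'top startup crm tools']
# ['buy crm plan', 'crm pricing plans']
```

Each resulting cluster maps to one page, which is the "one page per primary intent cluster" rule in practice.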

Readability optimization isn’t dumbing down; it’s compression. Use scannable headings, numbered steps, and evidence. Cite sources by name—Google’s technical documentation, peer‑reviewed studies, and documented case results—to reinforce EEAT. Include author bios with credentials, dates, and editorial standards. Users reward clarity; Google detects it indirectly via behavior and link patterns.

Finally, distribute intent‑matched CTAs. Informational pages can offer checklists or calculators; comparison pages should spotlight differentiators and trials; product pages should present risk‑reversal (guarantees, SOC2, support SLAs). The KPI is not just rankings; it’s assisted revenue. Map each content type to a measured step in the journey.

Technical levers that compound traffic and conversion outcomes at scale

Technical SEO amplifies content value by removing friction for both crawlers and users. At enterprise scale, it can be the difference between 20% and 200% YoY organic growth. We focus on Core Web Vitals, crawl efficiency, rendering, and structured data integrity. Each lever below demonstrates typical, reproducible deltas grounded in real implementations.

- Server performance: target TTFB ≤200 ms from core regions; HTTP/2 or HTTP/3, TLS session resumption, and CDN edge caching bring 100–300 ms wins.

- Core Web Vitals: LCP ≤2.5 s, CLS ≤0.1, INP ≤200 ms; preload hero image, compress fonts, and defer non‑critical JS.

- Crawl budget optimization: disallow traps, set parameter rules, and prune thin pages; recrawl frequency and index coverage improve within weeks.

- Rendering strategy: SSR or static generation for core paths; hydrate progressively; avoid blocking inline scripts and heavy client routing.

- Structured data: validate Product, Organization, BreadcrumbList, AggregateRating; keep JSON‑LD sizes modest and consistent with visible content.

Quantifying the performance side clarifies priorities. We benchmark pre‑ and post‑optimizations and monitor resulting search metrics. While Web Vitals aren’t direct ranking “points,” quality thresholds reduce abandonment and increase eligibility for competitive queries where user satisfaction signals matter. Below is a simple benchmark reference we use when prioritizing sprints.

| Metric | Threshold (Good) | Typical Impact When Achieved |
|---|---|---|
| TTFB | ≤200 ms | +5–12% crawl rate; -10–20% bounce; improved LCP |
| LCP | ≤2.5 s (p75) | +4–10% organic CVR; more eligibility in competitive SERPs |
| INP | ≤200 ms (p75) | +3–8% engagement depth; fewer pogo‑sticks on mobile |
| CLS | ≤0.10 (p75) | -10–30% interaction errors; improved perceived quality |
| Index Coverage | ≥85% of canonicals | +8–25% impressions from eligible pages |
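
The p75 thresholds in the table can be applied to field samples with a few lines of code. This sketch assumes raw per-visit measurements are available (for example from a real-user monitoring export); the sample values are invented, and the nearest-rank percentile used here is one common convention, not necessarily the one your reporting tool applies.

```python
import math

# "Good" thresholds from the table above (LCP and INP in milliseconds).
THRESHOLDS = {"lcp_ms": 2500, "inp_ms": 200, "cls": 0.10}

def p75(values):
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]

def assess(samples):
    """Return {metric: (p75 value, passes threshold)} for each metric."""
    report = {}
    for metric, limit in THRESHOLDS.items():
        value = p75(samples[metric])
        report[metric] = (value, value <= limit)
    return report

# Hypothetical field samples for one page template.
samples = {
    "lcp_ms": [1800, 2100, 2300, 2400, 2600, 2900, 3200, 4000],
    "inp_ms": [90, 120, 150, 160, 180, 190, 240, 400],
    "cls": [0.01, 0.02, 0.03, 0.05, 0.08, 0.09, 0.12, 0.30],
}

report = assess(samples)
for metric, (value, ok) in report.items():
    print(metric, value, "good" if ok else "needs work")
# lcp_ms 2900 needs work
# inp_ms 190 good
# cls 0.09 good
```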

On the crawling and indexing side, we audit robots.txt to protect crawl budget while enabling discovery: disallow infinite filters (e.g., “/search?”, “/filter=”), allow core category/product/article paths, and keep XML sitemaps fresh with lastmod dates that reflect meaningful updates. We pair this with canonical discipline and parameter rules to prevent duplicate path explosions.
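
A robots.txt audit of this kind can be sketched with Python's standard `urllib.robotparser`. The rules and URLs below are hypothetical, and the disallow paths are simplified to plain prefixes because the standard-library parser does prefix matching only; it does not replicate Googlebot's wildcard handling, so treat the result as a first pass rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block crawl traps, leave everything else open.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /filter/
"""

def load_rules(robots_txt):
    """Parse robots.txt text without making a network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

def blocked(parser, urls, agent="Googlebot"):
    """Return the URLs the given user agent is not allowed to fetch."""
    return [url for url in urls if not parser.can_fetch(agent, url)]

rules = load_rules(ROBOTS_TXT)

# Pages and render resources that must stay crawlable.
must_fetch = [
    "https://example.com/products/crm-starter",
    "https://example.com/assets/site.css",
    "https://example.com/assets/app.js",
]
# Known traps that should be blocked.
traps = [
    "https://example.com/search?q=crm",
    "https://example.com/filter/color-red/size-m",
]

print(blocked(rules, must_fetch))        # []
print(blocked(rules, traps) == traps)    # True
```

An empty first list means no core page or render resource is caught by a disallow rule; the second check confirms that both traps are blocked.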

- Robots.txt discipline: block known traps; never block resources required to render content, such as CSS and JavaScript; audit with server logs and URL inspection tools.

- Canonical governance: give every canonical URL a self‑referencing canonical tag; avoid cross‑canonical loops; use hreflang with correct regional targets and canonical alignment.

- Internal linking: keep depth shallow to money pages; distribute links from high‑authority hubs; use descriptive anchors that mirror intent clusters.

- Media optimization: serve next‑gen image formats, set width/height attributes, and use responsive sizes; preload hero images and critical CSS.

- Availability: 99.9% uptime; graceful degradation; cache rules to protect from traffic spikes during promotions.
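
Canonical loops and chains from the list above are straightforward to detect once a crawl has recorded each URL's canonical target. The sketch below assumes that data is available as a plain dictionary; the URLs, status labels, and `resolve_canonical` helper are illustrative only.

```python
def resolve_canonical(url, canonicals, max_hops=10):
    """Follow canonical targets from `url`.

    Returns (final_url, status) where status is one of:
      'self'         - the URL points to itself
      'consolidated' - one hop to a self-referencing canonical
      'chain'        - two or more hops before reaching a stable URL
      'loop'         - the targets cycle without a self-referencing URL
      'missing'      - a URL on the path declares no canonical
    """
    seen = [url]
    current = url
    for _ in range(max_hops):
        target = canonicals.get(current)
        if target is None:
            return current, "missing"
        if target == current:
            hops = len(seen) - 1
            return current, ("self", "consolidated")[hops] if hops < 2 else "chain"
        if target in seen:
            return target, "loop"
        seen.append(target)
        current = target
    return current, "chain"

# Hypothetical crawl export: URL -> declared canonical URL.
canonicals = {
    "/crm": "/crm",
    "/crm?ref=nav": "/crm",
    "/crm/old": "/crm?ref=nav",
    "/pricing-old": "/pricing-2023",
    "/pricing-2023": "/pricing-old",
}

for url in canonicals:
    print(url, resolve_canonical(url, canonicals))
# /crm ('/crm', 'self')
# /crm?ref=nav ('/crm', 'consolidated')
# /crm/old ('/crm', 'chain')
# /pricing-old ('/pricing-old', 'loop')
# /pricing-2023 ('/pricing-2023', 'loop')
```

Anything reported as `chain` or `loop` is a candidate for pointing directly at the final canonical URL.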

Measurement stitches it together. We track indexation (Search Console coverage), crawl stats (fetch volume and host status), Web Vitals (field data only), ranking visibility (by intent cluster), and revenue attribution (assists and last‑click). Correlation is not causation, but when improvements align temporally and match known mechanisms documented by Google, we can attribute with confidence.

- Leading indicators: index coverage %, recrawl rate on target sections, LCP/INP deltas, render diagnostics.

- Lagging indicators: impressions, CTR, sessions, assisted conversions, sales cycle time.

- Cohort analysis: measure new vs returning organic users; track journey progression across content types.

- Query mix: evaluate shifts toward commercial queries as authority rises.

- Equity flow: monitor internal link acquisition to priority pages via crawl graphs.

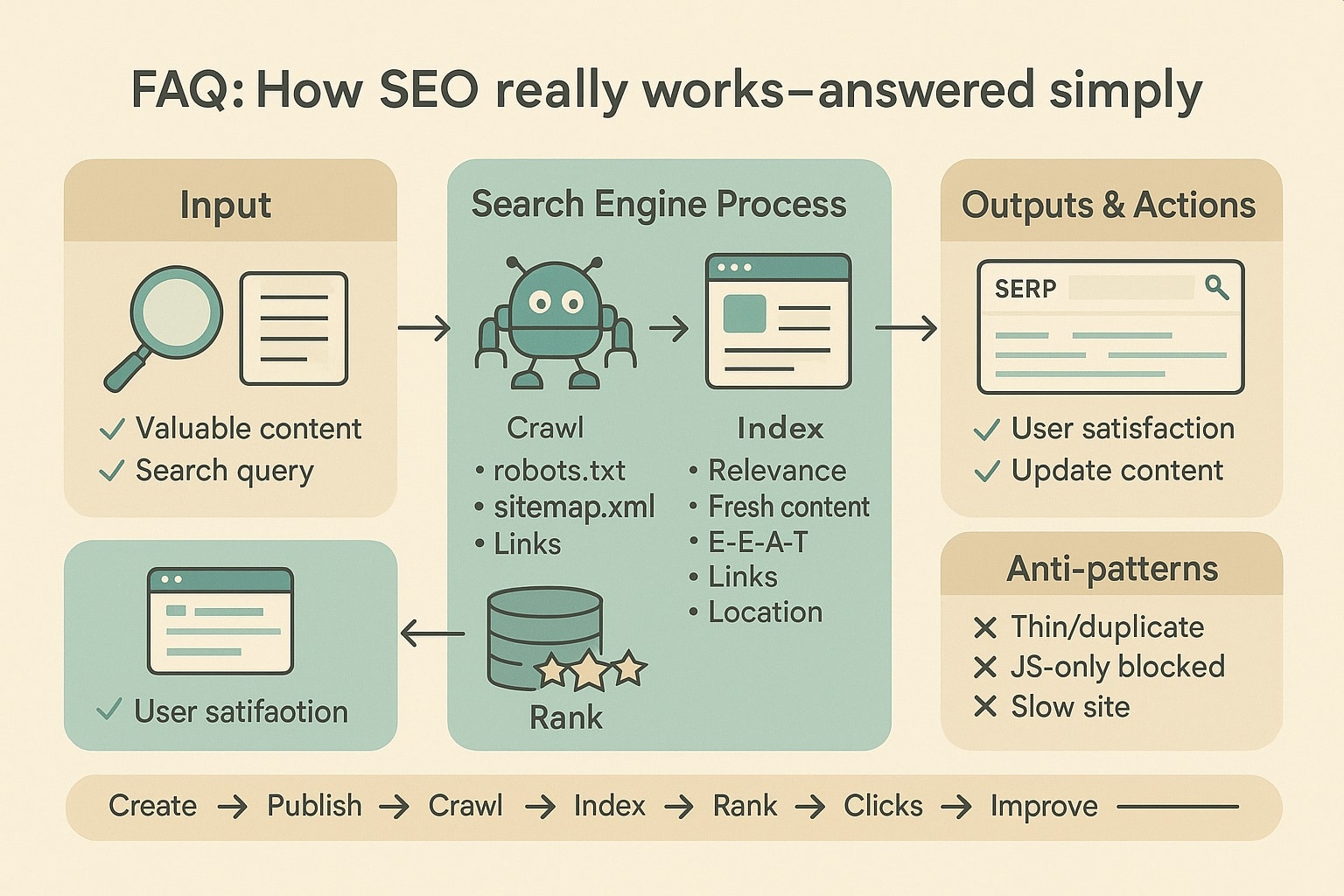

FAQ: How SEO really works, answered simply

Below are direct, non‑technical answers rooted in how crawling, indexing, and ranking actually function. The goal is to translate systems thinking into everyday decisions your team can make—what to publish, how to structure it, and which technical fixes unlock disproportionate gains. If in doubt, prefer simplicity, speed, and usefulness. Those are durable advantages.

Is SEO just about keywords and backlinks now?

Keywords and backlinks still matter, but modern search engine optimization is broader. Google evaluates whether users can load, read, and act on your content quickly. Rendering, Core Web Vitals, structured data, internal linking, and intent alignment influence eligibility and satisfaction. When combined with relevant links, these factors create compounding improvements in impressions, clicks, and conversions.

How long until improvements impact Google rankings?

Technical fixes (status codes, robots, canonicals) can influence crawling and indexing within days to weeks. Content enhancements and authority growth typically compound over 8–16 weeks. Competition and recrawl frequency matter: sites with strong server health and clear sitemaps are revisited faster, accelerating testing in SERPs and bringing earlier visibility for new or updated pages.

Do Core Web Vitals really affect rankings?

Core Web Vitals aren’t a silver bullet, but they affect eligibility and user satisfaction. Achieving LCP ≤2.5s, INP ≤200ms, and CLS ≤0.1 reduces abandonment and improves engagement. In competitive queries, those advantages can be decisive. We observe 4–10% conversion lifts after hitting thresholds, with crawl efficiency and recrawl prioritization improving on faster, more stable sites.

What’s the simplest way to improve crawl budget?

Prune or block low‑value URLs (facets, searches, duplicate archives), maintain accurate XML sitemaps, and keep internal linking shallow to high‑value pages. Reduce 404/5xx rates and avoid redirect chains. These steps concentrate Googlebot on URLs that matter, raising index coverage and the frequency of testing your most important pages in search results.
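
To see whether 404 and 5xx responses are eating into crawl activity, server logs can be summarized per crawler. This is a minimal sketch assuming logs in the common "combined" format; the sample lines are fabricated for illustration. Matching on the user-agent string alone is an approximation, since user agents can be spoofed and a production check would also verify the requesting IP.

```python
import re
from collections import Counter

# Matches the request, status, and user-agent fields of a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_status_summary(lines):
    """Count Googlebot requests and the share that hit 404 or 5xx responses."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        status = int(match.group("status"))
        counts["total"] += 1
        if status == 404:
            counts["404"] += 1
        elif status >= 500:
            counts["5xx"] += 1
    total = counts["total"]
    error_rate = (counts["404"] + counts["5xx"]) / total if total else 0.0
    return {"total": total, "404": counts["404"], "5xx": counts["5xx"], "error_rate": error_rate}

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Hypothetical log lines.
log_lines = [
    f'66.249.66.1 - - [10/May/2024:10:00:00 +0000] "GET /crm HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/May/2024:10:00:02 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/May/2024:10:00:05 +0000] "GET /crm/pricing HTTP/1.1" 200 4410 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/May/2024:10:00:09 +0000] "GET /api/report HTTP/1.1" 503 0 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/May/2024:10:00:10 +0000] "GET /crm HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

summary = googlebot_status_summary(log_lines)
print(summary)  # {'total': 4, '404': 1, '5xx': 1, 'error_rate': 0.5}
```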

How do I know which content to write next?

Start with intent mapping: group queries by task (explain, compare, choose, buy) and analyze current SERP formats. Build pillars for core problems and spokes for sub‑tasks (pricing, integrations, industries). Prioritize gaps where you can deliver unique value—data, methodology, or tools—and where internal links can channel authority effectively to commercial pages.

Can structured data alone boost my Google rankings?

Structured data clarifies entities and relationships, improving eligibility for rich results and click‑through rates. But it cannot compensate for thin, unhelpful content or poor rendering. Ensure JSON‑LD matches visible content, avoid spammy patterns, and pair markup with strong on‑page coverage and UX. Expect improved presentation; rankings follow when usefulness and authority also align.

Turn queries into customers with measurable SEO at scale

If this model resonates—discovery, eligibility, intent, and satisfaction—onwardSEO operationalizes it for teams that need results without guesswork. We pair content SEO strategy with technical execution: faster servers, cleaner rendering, smarter internal links, and intent‑matched assets that win competitive queries. Our deliverables are measurable: Web Vitals deltas, index coverage gains, and pipeline growth. Let’s map your demand, fix friction, and compound wins responsibly.