Traffic Tanked After Google Update? 11 Fast Fixes

When a Google core update lands, traffic volatility is often less about a single ranking drop and more about systemic mismatches: intent gaps, entity ambiguity, weak helpfulness signals, and crawl inefficiencies surfacing at once. If this sounds familiar, prioritize diagnosis that distinguishes algorithm shifts from technical faults, then sequence fixes by speed-to-impact. If you need hands-on triage, our technical SEO consulting aligns engineering effort with search visibility ROI quickly.

This playbook compresses weeks of flailing into days of forward motion by focusing on measurable levers: log-derived crawl budget optimization, Core Web Vitals hardening, schema-driven EEAT reinforcement, and AI Overviews readiness. For teams facing an acute algorithm update impact, you can also coordinate an expedited Google core update recovery program to stabilize and then scale.

Read the update through data, not panic

Updates in 2023–2025 increasingly blend quality, helpfulness, and spam-signal recalibration with broader changes to how content is summarized and surfaced. Treat a traffic drop as a multi-signal reweighting event, not a penalty. Start with a 14–28 day read to see if post-update ranking drops stabilize, then attack the deltas with precise measurement.

Authoritative guidance from Google’s technical documentation emphasizes that core updates reassess content overall, not specific pages. Our enterprise datasets show that the most resilient sites maintain entity clarity (Organization → People → Topics), meet Core Web Vitals at the 75th percentile on mobile, and enforce crawl and indexation rigor (no indexation debt, minimal orphaning, stable canonicalization).

From hundreds of documented cases, we repeatedly observe four recurring patterns after an algorithm update:

- Intent realignment: Queries shift toward fresher, expert-led formats; listicles underperform while how-to frameworks win.

- Entity consolidation: Strong author and organization entities win compared to anonymous, aggregated content sets.

- Helpfulness weighting: Content with verifiable first-hand experience and unique data outperforms commodity rewrites.

- Technical surfacing: Sites with clean render paths, small JS hydration costs, and stable CLS retain visibility.

Run a zero-assumption audit window: isolate traffic drop segments by device, country, query class (commercial, informational, navigational), and SERP feature exposure (Top Stories, video, FAQ loss, AI Overviews eligibility). Correlate with your server logs: a drop in Googlebot HTML hits with flat sitemap fetches usually indicates de-prioritized sections, not crawlability failure.

If losses are concentrated on a few query clusters, consider a content intent mismatch; if losses are broad-based but heavier on mobile, suspect a performance regression. If category pages tanked but PDPs held, check for a faceted crawl explosion after a template change.

To speed team alignment, share a one-page matrix. Use ours or adapt it:

| Observed symptom | Likely cause cluster | Primary diagnostic | Fast fix | Expected signal shift |
|---|---|---|---|---|
| 20–40% ranking drop on mobile only | Performance/CWV regression, render-blocking scripts | CrUX vs. RUM delta; Lighthouse CI history | Reduce INP; defer non-critical JS; lazy-hydrate | 1–2 weeks post-deploy (field data lag) |
| Losses on top-of-funnel head terms | Intent shift to expert POV, data-backed content | SERP feature scan; competitor entity strength | Add original data, author expertise, FAQs | 2–4 weeks as recrawl propagates |
| Wide fluctuation on desktop and mobile | Crawl/indexation debt, canonical conflicts | Index coverage, log parity to sitemaps | Consolidate duplicates; fix canonicals | Days to a week for reindex corrections |
| Great content, no recovery trend | Weak internal links, diluted PageRank flow | Link graph coverage; orphan detection | Rebalance hubs; add context links | Days for crawl discovery; 1–3 weeks impact |

Now, sequence your 11 fast fixes for maximum compounded impact. We’ll group them by engineering track to minimize thrash and compress cycle times.

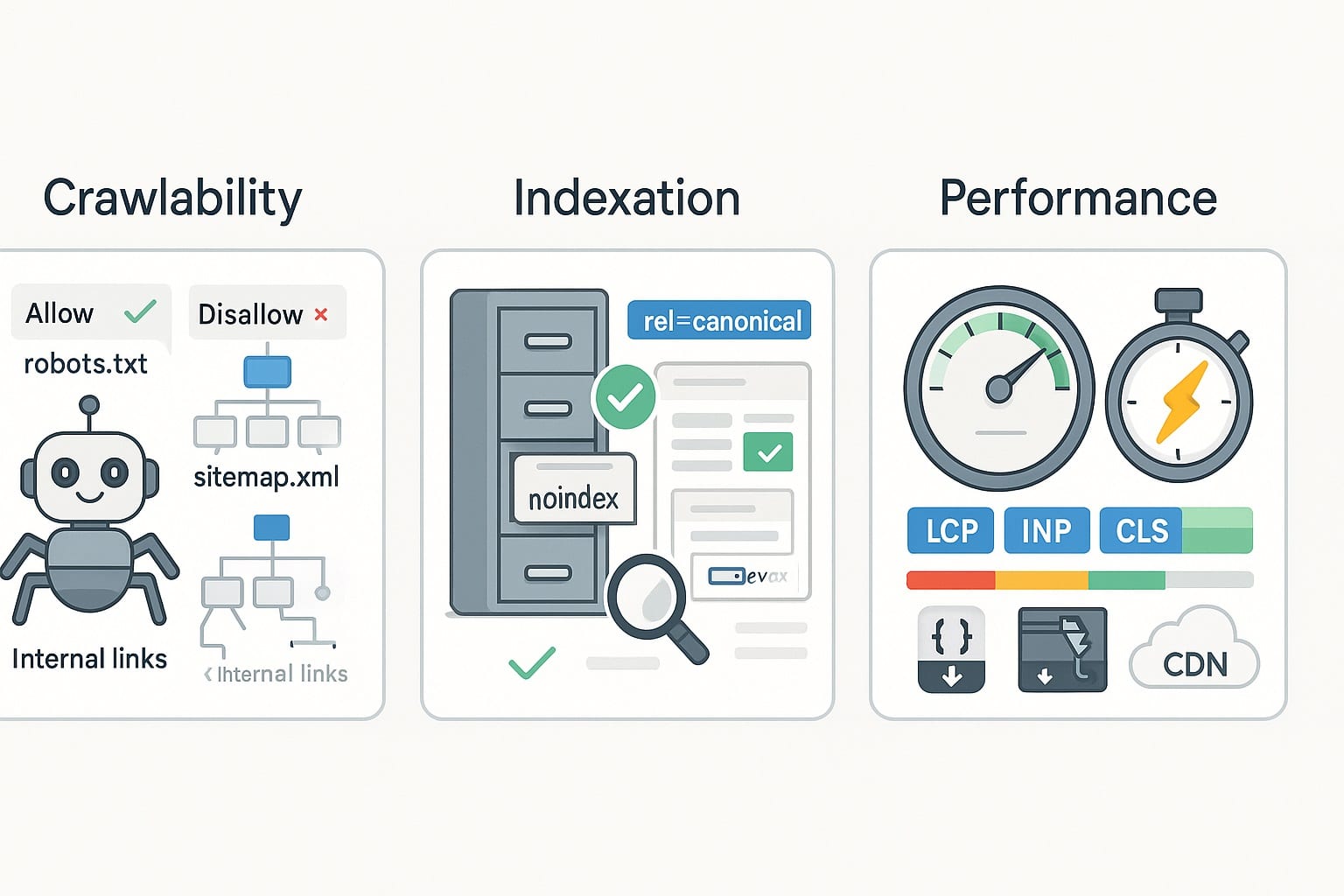

Fixes 1–3: Crawlability, indexation, performance

Fix 1: Separate algorithmic from technical failures. Before rewriting content, prove the plumbing. Pull 30 days of access logs; compute Googlebot HTML and Googlebot Smartphone hit counts per directory. If Googlebot hits and “Discovered – currently not indexed” both spike, you have indexation debt. If Googlebot hits hold steady but impressions collapse, you have ranking reweighting (algorithmic) rather than crawl failure.
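A minimal sketch of the per-directory count, assuming your server writes the Apache/Nginx Combined Log Format and that "directory" means the first path segment. The asset-extension list is an assumption to adapt, and the sketch trusts the user-agent string; it does not verify genuine Googlebot via reverse DNS, which you should do before drawing conclusions.

```python
import re
from collections import Counter

# Assumes Combined Log Format:
# ip ident user [time] "METHOD path PROTO" status bytes "referer" "user-agent"
LOG_RE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)
# Extensions treated as non-HTML assets (an assumption; adjust to your stack).
ASSET_EXTENSIONS = (".css", ".js", ".png", ".jpg", ".jpeg", ".gif", ".svg",
                    ".webp", ".avif", ".woff", ".woff2", ".ico")


def googlebot_html_hits(lines):
    """Count Googlebot HTML hits per (first path segment, crawler type)."""
    counts = Counter()
    for line in lines:
        match = LOG_RE.match(line)
        if not match or "Googlebot" not in match["ua"]:
            continue  # unparseable line, or not a Googlebot user agent
        path = match["path"].split("?", 1)[0]
        if path.lower().endswith(ASSET_EXTENSIONS):
            continue  # keep HTML documents only
        first = path.strip("/").split("/", 1)[0]
        directory = f"/{first}/" if first else "/"
        # Googlebot Smartphone's user agent includes "Mobile"; desktop's does not.
        crawler = "smartphone" if "Mobile" in match["ua"] else "desktop"
        counts[(directory, crawler)] += 1
    return counts
```

Run it over each day of the 30-day window and chart the counts per directory next to Search Console impressions for the same sections.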

Run three parity checks: 1) URLs in sitemaps vs. URLs receiving Googlebot hits; 2) canonical clusters in Search Console (user-declared vs. Google-selected canonical); 3) HTTP status and cache headers (200, 301, 304 rates). A 304 ratio below 10% on static assets often indicates missing caching headers, increasing render cost and reducing crawl efficiency.
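The three parity checks can be sketched as plain set and counter operations. This assumes you have already extracted the inputs: sitemap URLs, URLs Googlebot fetched (for example from the log count in Fix 1), canonical pairs (for example from the URL Inspection API's userCanonical and googleCanonical fields), and the status codes Googlebot received on static assets. The function name and input shapes are illustrative.

```python
from collections import Counter


def parity_checks(sitemap_urls, googlebot_urls, canonical_rows, asset_statuses):
    """Sketch of the three parity checks.

    sitemap_urls: URLs listed in your XML sitemaps
    googlebot_urls: URLs that received Googlebot hits
    canonical_rows: (url, user_declared, google_selected) tuples
    asset_statuses: HTTP status codes Googlebot received for static assets
    """
    in_sitemap, crawled = set(sitemap_urls), set(googlebot_urls)
    statuses = Counter(asset_statuses)
    total = sum(statuses.values())
    return {
        # 1) Sitemap vs. crawl parity
        "sitemap_not_crawled": sorted(in_sitemap - crawled),
        "crawled_not_in_sitemap": sorted(crawled - in_sitemap),
        # 2) Declared vs. Google-selected canonicals
        "canonical_mismatches": sorted(
            url for url, declared, selected in canonical_rows if declared != selected
        ),
        # 3) Share of 304s on static assets; a low share hints at missing validators
        "ratio_304": statuses[304] / total if total else 0.0,
    }
```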

Fix 2: Repair crawl budget and indexation debt. Indexation debt occurs when Google discovers more low-value or duplicative URLs than it can prioritize. Cut the noise fast: enforce robots rules for faceted URLs, tighten sitemaps to only canonical 200 pages, and prune legacy parameters. Prefer server-side canonicals and noindex for thin variants; avoid blocking URLs that should consolidate signals.

- Robots gatekeeping: Disallow infinite facets (e.g., Disallow: /*?color= and Disallow: /*?sort=price); allow crawling of canonical collections.

- Parameter rules: Search Console's URL Parameters tool was retired in 2022, so normalize parameters in your own stack; ensure UTM and session parameters are ignored server-side.

- Sitemaps: Create per-section sitemaps capped at 10k URLs; update lastmod only on meaningful changes.

- HTTP caching: For HTML, use short max-age with ETag/Last-Modified; for static assets, cache-bust with long max-age and immutable.
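Before shipping facet rules, it helps to test them against real URLs. Python's standard urllib.robotparser does plain prefix matching and does not expand `*` wildcards, so the sketch below hand-rolls Google-style matching under that assumption: `*` matches any sequence, a trailing `$` anchors the end, the longest matching pattern wins, and Allow wins a tie. It evaluates a single user-agent group and is not a full robots.txt parser.

```python
import re


def robots_pattern(pattern):
    """Translate a Google-style robots.txt path pattern into a compiled regex.

    '*' matches any character sequence; a trailing '$' anchors the end.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(path_and_query, rules):
    """rules: ("allow" | "disallow", pattern) pairs for one user-agent group.

    The most specific (longest) matching pattern wins; allow wins a tie.
    """
    best = None
    for kind, pattern in rules:
        if not pattern:
            continue  # an empty Disallow blocks nothing
        if robots_pattern(pattern).match(path_and_query):
            candidate = (len(pattern), kind == "allow")
            if best is None or candidate > best:
                best = candidate
    return True if best is None else best[1]
```

One thing the test surfaces: a pattern like /*?color= only matches when color is the first query parameter, so /shoes?size=9&color=red stays crawlable. A broader pattern such as /*color= catches it in any position.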

Implementation example: If /category/?sort=price,asc emits the same product set as /category/, set rel=canonical to /category/ on the parameterized page, add “noindex, follow” to the variant if necessary, and exclude the variant from sitemaps. Keep the canonical page indexable and included in sitemaps. Validate canonical selection with Search Console’s URL Inspection tool.
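The rule in that example can be encoded once and applied per URL. A minimal sketch, assuming a hypothetical allow-list of parameters that never change the product set (NON_CANONICAL_PARAMS); the noindex is optional, matching the "if necessary" above.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical policy: parameters that never change the listed product set.
NON_CANONICAL_PARAMS = {"sort", "utm_source", "utm_medium", "utm_campaign",
                        "utm_term", "utm_content", "sessionid"}


def indexing_directives(url, noindex_variants=True):
    """Derive canonical URL, robots meta value, and sitemap inclusion for a URL."""
    parts = urlsplit(url)
    params = parse_qsl(parts.query, keep_blank_values=True)
    kept = [(k, v) for k, v in params if k.lower() not in NON_CANONICAL_PARAMS]
    canonical = urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
    # A URL is a variant if any non-canonical parameter was stripped from it.
    is_variant = len(kept) != len(params)
    return {
        "canonical": canonical,
        "robots_meta": "noindex, follow" if (is_variant and noindex_variants) else "index, follow",
        "include_in_sitemap": not is_variant,
    }
```

For example, https://example.com/category/?sort=price,asc yields a canonical of https://example.com/category/, a "noindex, follow" robots meta, and no sitemap entry.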

Fix 3: Harden Core Web Vitals to pass thresholds. With mobile-first indexing now the default, INP and LCP regressions correlate strongly with ranking drops after core updates in our case results. Harden field metrics, not synthetic ones. Use CrUX and your RUM to target the 75th percentile for mobile users in your top five markets.

- LCP: ≤2.5s at p75 (mobile). Move hero image to HTML; preconnect critical origins; serve AVIF/WebP; eliminate render-blocking CSS.

- INP: ≤200ms at p75. Defer non-essential JS, isolate event handlers, adopt server components, use requestIdleCallback for low-priority work.

- CLS: ≤0.1 at p75. Reserve space for images/ads; avoid layout-shifting fonts; stabilize third-party widgets via container sizing.
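To check RUM samples against these targets, compute the 75th percentile per metric and bucket it using Google's published boundaries (good at or below 2.5s LCP, 200ms INP, and 0.1 CLS; poor above 4s, 500ms, and 0.25). This sketch uses a simple nearest-rank percentile, which only approximates CrUX's histogram-based p75.

```python
import math

# Google's published "good" / "poor" boundaries, assessed at p75.
THRESHOLDS = {"LCP": (2500, 4000), "INP": (200, 500), "CLS": (0.1, 0.25)}


def p75(values):
    """Nearest-rank 75th percentile of a non-empty sample."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def assess_cwv(samples):
    """samples: metric name -> list of field values (ms for LCP/INP, unitless CLS)."""
    report = {}
    for metric, values in samples.items():
        good, poor = THRESHOLDS[metric]
        value = p75(values)
        if value <= good:
            status = "good"
        elif value <= poor:
            status = "needs improvement"
        else:
            status = "poor"
        report[metric] = (value, status)
    return report
```

Run it per market and device segment, filtered to mobile, so a healthy desktop p75 cannot mask a mobile regression.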

Engineered gains we routinely see: reducing total JS by 30–50%, cutting hydration by 200–400ms, and moving LCP elements into server-rendered HTML. In one migration, using preload hints for critical CSS (the usual alternative to HTTP/2 server push) reduced LCP by 480ms and reversed a 22% traffic drop in three weeks as CrUX updated.

Fixes 4–6: Content, intent, duplication control

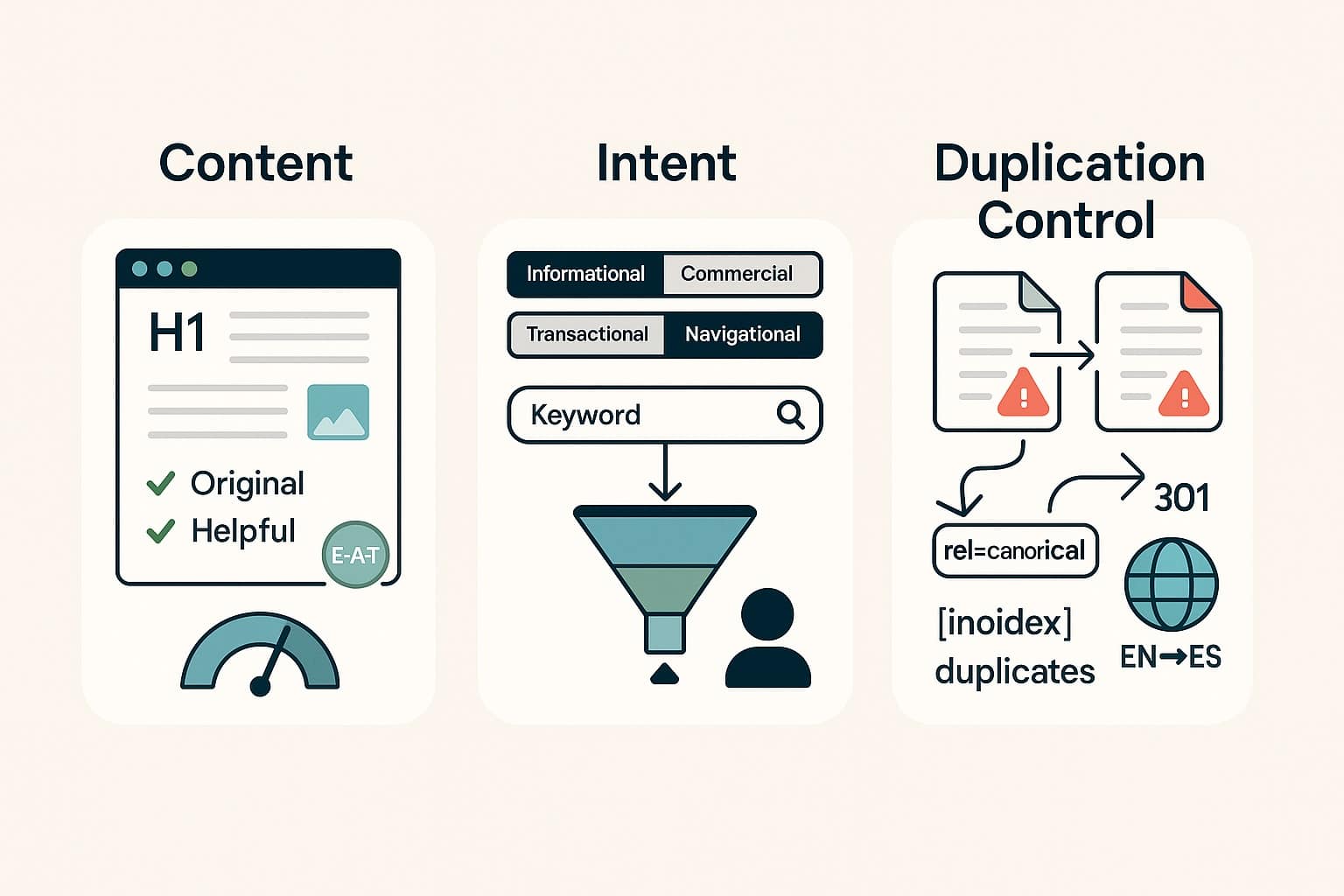

Fix 4: Rebuild EEAT with entity-first structure. Core updates increasingly interpret helpfulness and expertise through entity signals: Who are you? Who wrote it? Why should we trust it? Model your site’s knowledge graph in markup: Organization, Person, and domain-specific types (Product, MedicalEntity, FinancialService) linked with sameAs and in-article mentions. Ensure every author has a verifiable Person entity with credentials, affiliations, and publication history.

Use schema consistently: Article (or subtype) with headline, datePublished/dateModified, author, and mainEntityOfPage. Associate each content hub with an Organization author byline if appropriate and link to detailed About and Editorial Policy pages. Google’s technical documentation encourages clear bylines and background; this is a scalable proxy for trust when content is otherwise similar across competitors.
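One way to keep the Organization, Person, and Article entities linked consistently is to generate the JSON-LD from a single template. A minimal sketch: the schema.org properties are real, but the input dict keys (name, url, same_as, job_title, published, modified) are hypothetical placeholders for your CMS fields, and the #organization / #person @id suffixes are just a common convention.

```python
import json


def article_jsonld(site, author, article):
    """Build a linked Organization -> Person -> Article JSON-LD graph."""
    org_id = site["url"] + "#organization"
    person_id = author["url"] + "#person"
    graph = [
        {"@type": "Organization", "@id": org_id, "name": site["name"],
         "url": site["url"], "sameAs": site["same_as"]},
        {"@type": "Person", "@id": person_id, "name": author["name"],
         "url": author["url"], "jobTitle": author["job_title"],
         # Ties the author entity to the publishing organization.
         "affiliation": {"@id": org_id}, "sameAs": author["same_as"]},
        {"@type": "Article", "headline": article["headline"],
         "datePublished": article["published"], "dateModified": article["modified"],
         "author": {"@id": person_id}, "publisher": {"@id": org_id},
         "mainEntityOfPage": article["url"]},
    ]
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)
```

Embed the output in a script element with type="application/ld+json". Referencing entities by @id means every article points to the same author and publisher nodes instead of redefining them.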

Fix 5: Restructure content to match search intent. Query classes often pivot during an algorithm update. Where a generic “best [product]” piece previously ranked, the SERP may now favor hands-on tests with measurable criteria and scorecards. Adopt templates that lead with decision-critical factors, include original data, and answer related follow-up questions in concise sections (also supporting People Also Ask capture).

Segment your content program into formats by intent: “How-to” with step lists and short videos; “Comparisons” with feature matrices; “Explanations” with definitions plus expert Q&A; and “Local” with service overlays (NAP consistency, service area schema, review snippets). These formats map better to user satisfaction models in ranking systems.

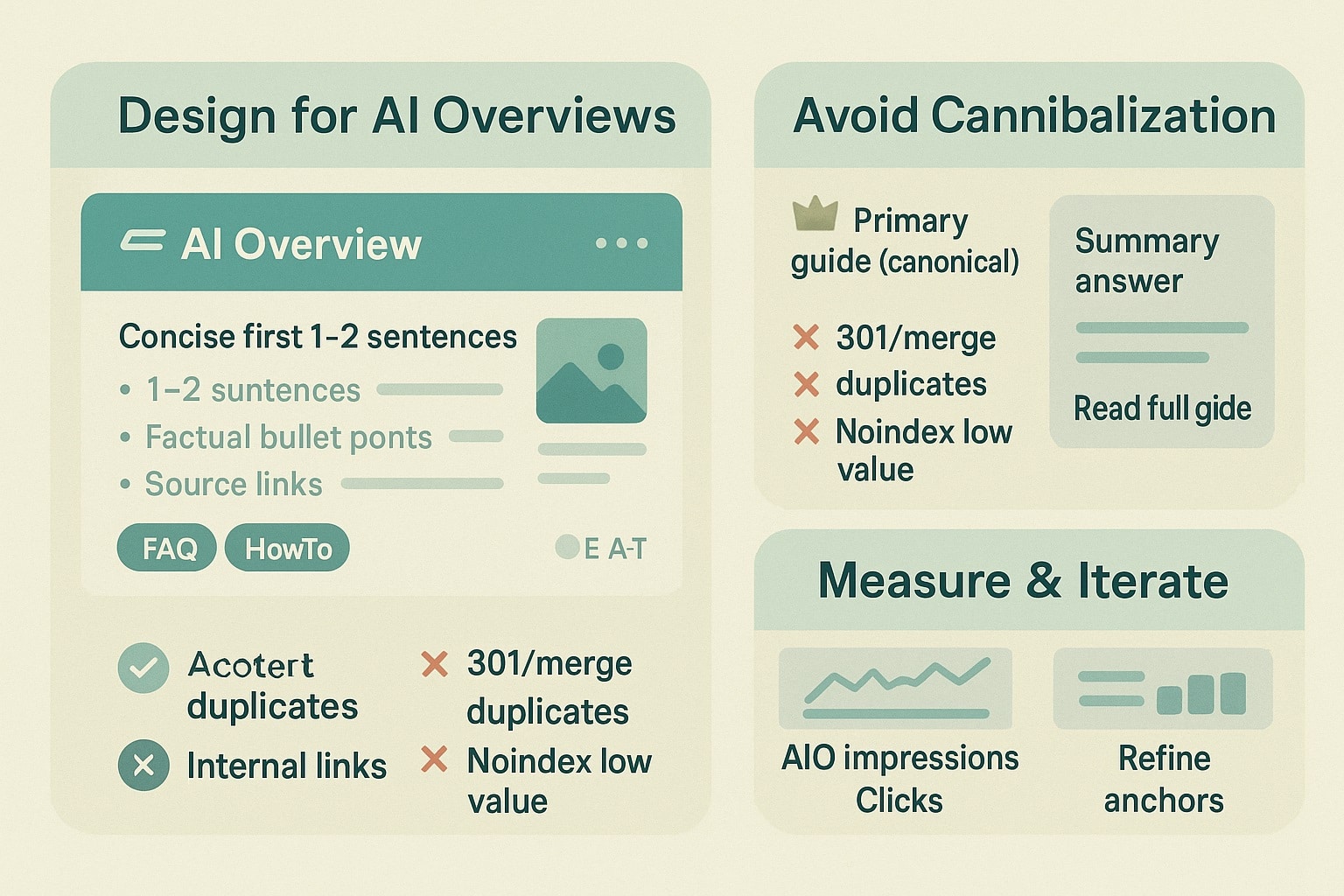

Fix 6: Consolidate duplicates and cannibalization rapidly. After nearly every core update, cannibalization becomes more painful. Consolidate overlapping URLs to a single, comprehensive resource; 301 redirect the weaker pages; update internal links; re-submit the canonical. Use content diffing to ensure the surviving page absorbs unique value (examples, data, media) from the deprecated ones.

- Identify clusters: Group URLs by shared queries and similar titles; compare best link equity holders.

- Choose the canonical: Favor the URL with strongest backlinks, stable history, and best engagement.

- Merge content: Transfer unique sections; align H2/H3 structure; refresh with new data or screenshots.

- Redirect and relink: 301 from deprecated pages; update nav, breadcrumbs, and contextual links sitewide.
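The identify-choose-redirect steps can be prototyped from a query/page export. This sketch makes several assumptions: URLs are clustered when the Jaccard overlap of their query sets reaches a threshold (0.5 is an arbitrary starting point), clusters are merged with union-find, and the canonical is the URL with the highest hypothetical strength score (for example, referring domains). It only produces a 301 map. Merging content still needs editorial review.

```python
from collections import defaultdict
from itertools import combinations


def consolidation_plan(rows, scores, min_jaccard=0.5):
    """Group URLs that rank for overlapping queries and map losers to a winner.

    rows: (query, url) pairs, e.g. a Search Console query/page export
    scores: url -> strength score (hypothetical, e.g. referring domains)
    Returns {deprecated_url: canonical_url} for a 301 redirect map.
    """
    queries = defaultdict(set)
    for query, url in rows:
        queries[url].add(query)

    parent = {url: url for url in queries}

    def find(url):
        while parent[url] != url:
            parent[url] = parent[parent[url]]  # path halving
            url = parent[url]
        return url

    for a, b in combinations(sorted(queries), 2):
        jaccard = len(queries[a] & queries[b]) / len(queries[a] | queries[b])
        if jaccard >= min_jaccard:
            parent[find(a)] = find(b)

    clusters = defaultdict(list)
    for url in queries:
        clusters[find(url)].append(url)

    redirects = {}
    for members in clusters.values():
        if len(members) < 2:
            continue
        canonical = max(members, key=lambda u: scores.get(u, 0))
        redirects.update({u: canonical for u in members if u != canonical})
    return redirects
```

The pairwise comparison is quadratic, which is fine for a few thousand URLs. For large catalogs, pre-bucket URLs by shared top query first.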

Expect crawl and reindexing within days for smaller sites, a couple of weeks for large catalogs. Measure success by reduction in “Alternate page with proper canonical tag” and fewer competing impressions for the same queries.

Fixes 7–8: Link equity and helpfulness modernization

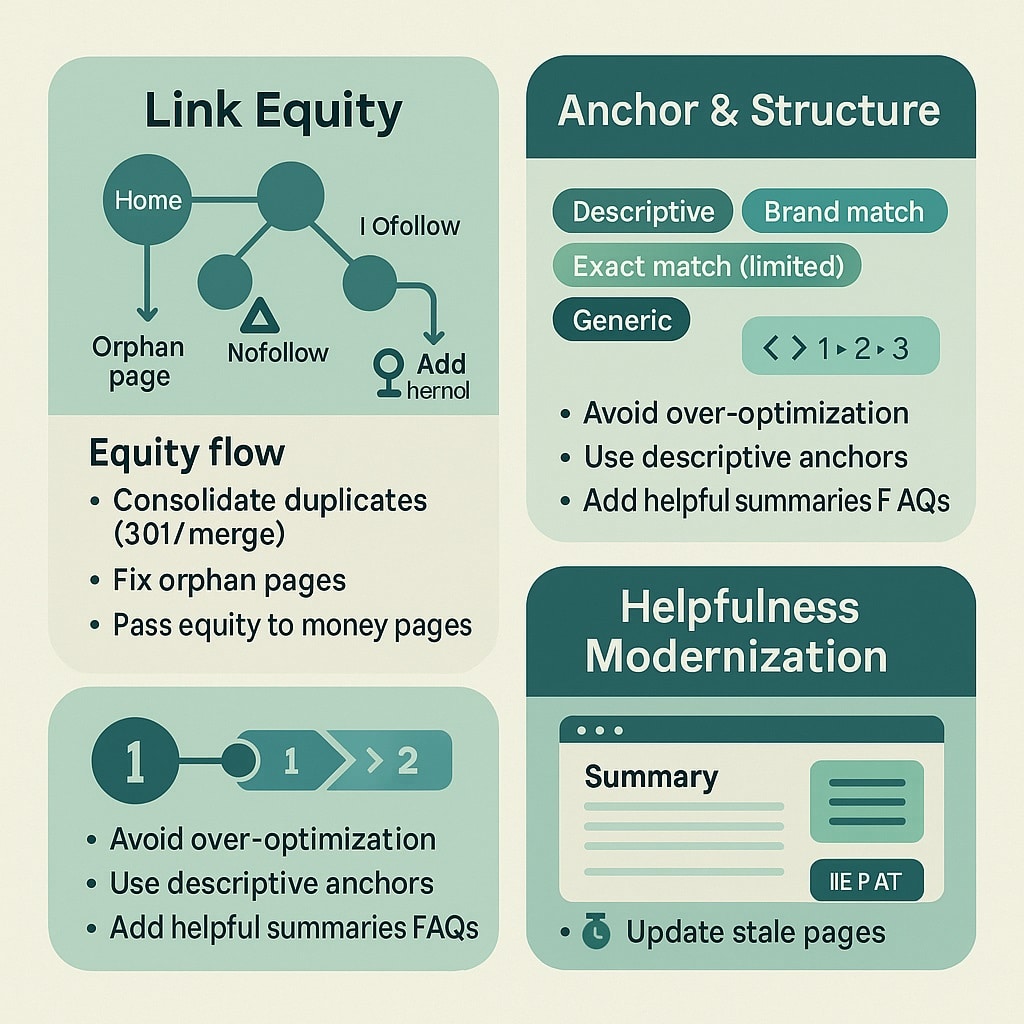

Fix 7: Refresh link equity and toxic patterns. A core update can expose stale link graphs where most equity points at older content or thin category pages. Conduct a link flow audit: extract internal link counts per template, identify orphans, and compute a simple PageRank approximation (e.g., power iteration with damping 0.85 on your internal graph). Shift equity toward hub pages that answer intent, not just navigational endpoints.
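The PageRank approximation mentioned above can be sketched as standard power iteration with damping 0.85. This version assumes your crawl gives you a dict of page to outlinked pages. Duplicate links from one page count once, and pages with no outlinks ("dangling") spread their rank evenly so the scores stay a probability distribution.

```python
def internal_pagerank(links, damping=0.85, max_iter=100, tol=1e-10):
    """Power-iteration PageRank over an internal link graph.

    links: dict mapping each URL to the URLs it links to.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(max_iter):
        # Rank held by dangling pages is redistributed uniformly.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        base = (1 - damping) / n + damping * dangling / n
        new_rank = {p: base for p in pages}
        for source, targets in links.items():
            unique_targets = set(targets)
            if not unique_targets:
                continue
            share = damping * rank[source] / len(unique_targets)
            for target in unique_targets:
                new_rank[target] += share
        converged = sum(abs(new_rank[p] - rank[p]) for p in pages) < tol
        rank = new_rank
        if converged:
            break
    return rank
```

Sorting the result and comparing it with your list of intent-answering hubs shows quickly whether equity is pooling on navigational or legacy pages instead.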

Externally, prioritize link reclamation: reclaim 404s with 301s to the best-match resources, update brand mentions to links where editorially appropriate, and strengthen digital PR around your original data assets. Google’s guidance is clear: disavow is rarely needed; focus on earning links and improving site architecture.

- Reclamation: Map top 200 lost backlinks by DR/traffic; redirect to current canonical; reach out to update links where pages moved.

- Orphan cleanup: Use logs + crawl to find zero-inlink pages; add contextual links or include them in hubs/sitemaps.

- Hub reinforcement: Ensure each hub has 10–30 relevant inlinks with diversified anchors and breadcrumb presence.
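The orphan and hub checks reduce to counting unique internal inlinks. A minimal sketch, assuming known_urls is the union of sitemap, log, and analytics URLs and internal_links is (source, target) pairs from your crawler. The default of 10 reflects the lower end of the 10–30 hub range above.

```python
from collections import Counter


def link_audit(known_urls, internal_links, hubs, min_hub_inlinks=10):
    """Return (orphans, weak_hubs) from an internal link edge list."""
    inlinks = Counter()
    for source, target in set(internal_links):  # dedupe repeated links
        if source != target:                     # ignore self-links
            inlinks[target] += 1
    orphans = sorted(u for u in set(known_urls) if inlinks[u] == 0)
    weak_hubs = sorted(h for h in hubs if inlinks[h] < min_hub_inlinks)
    return orphans, weak_hubs
```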

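Fix 8: Strengthen helpfulness signals programmatically. Helpfulness is not just prose; it’s structure and proof. Add last-reviewed dates with named reviewers, insert pros/cons sections, expose methodology panels, and embed original datasets or calculations. Mark up each with appropriate schema (Review, HowTo, FAQPage) where it is helpful and compliant with current rich result policies. Since 2023, Google no longer shows HowTo rich results and limits FAQ rich results to well-known government and health sites, so most sites should not expect those two types to produce rich results.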

Scale this via templates: for each content type, add a “How we test” expandable with a unique ID; include a “We may earn affiliate commissions” disclosure where applicable; and ensure a dynamic ToC links to each section for answerability. Case results: adding methodology + pros/cons lifted CTR 12–18% on informational terms and improved dwell time, easing SEO recovery after updates.

Fix 9: Optimize for AI Overviews without cannibalizing

AI Overviews are compressing attention on short, fact-based queries and pushing visibility to authoritative, structured summaries and first-hand sources. The immediate goal is not to “rank inside” the overview but to be consistently cited and to capture the subsequent clicks from users seeking depth, tools, or local availability.

Treat AI Overviews as a summarization layer shaped by your clarity and evidence. Ensure top pages are unambiguous, up-to-date, and internally consistent. Distill definitive “answer blocks” for high-volume questions in 40–60 words, cite your original data, and support with concise lists or tables. Use schema to make relationships explicit (Product → AggregateRating; Recipe → NutritionInformation; HowTo → steps/tools).

- Answer framing: Lead with a direct, evidence-backed answer; follow with supporting steps or metrics.

- Fact freshness: Update dateModified and visible “Updated” stamps; align content and metadata to avoid contradictions.

- Citation hooks: Include unique stats, formulas, or checklists that are likely to be quoted.

- Disambiguation: Use clear entity names and sameAs references to remove ambiguity for models.

- Media clarity: Add captioned images with alt text reflecting the key fact; compress and lazy-load.
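Two of the checks above lend themselves to automation: answer-block length and agreement between the visible "Updated" stamp and dateModified. This sketch uses the standard-library HTML parser and makes assumptions you should adapt: the answer block is the page's first paragraph, and the visible stamp is a time element with class "updated" and a datetime attribute. Dates are compared at calendar-day precision.

```python
import json
from html.parser import HTMLParser


class AnswerBlockParser(HTMLParser):
    """Collects the first <p> text, JSON-LD blocks, and a <time class="updated">."""

    def __init__(self):
        super().__init__()
        self.first_paragraph = None
        self.jsonld = []
        self.visible_updated = None
        self._in_p = False
        self._p_text = []
        self._jsonld_buf = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "p" and self.first_paragraph is None and not self._in_p:
            self._in_p = True
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self._jsonld_buf = []
        elif tag == "time" and "updated" in (attrs.get("class") or "").split():
            self.visible_updated = attrs.get("datetime")

    def handle_endtag(self, tag):
        if tag == "p" and self._in_p:
            self.first_paragraph = " ".join("".join(self._p_text).split())
            self._in_p = False
        elif tag == "script" and self._jsonld_buf is not None:
            self.jsonld.append(json.loads("".join(self._jsonld_buf)))
            self._jsonld_buf = None

    def handle_data(self, data):
        if self._jsonld_buf is not None:
            self._jsonld_buf.append(data)
        elif self._in_p:
            self._p_text.append(data)


def audit_answer_block(html, min_words=40, max_words=60):
    parser = AnswerBlockParser()
    parser.feed(html)
    parser.close()
    words = len((parser.first_paragraph or "").split())
    modified = next((d["dateModified"] for d in parser.jsonld
                     if isinstance(d, dict) and "dateModified" in d), None)
    visible = parser.visible_updated
    return {
        "answer_words": words,
        "answer_length_ok": min_words <= words <= max_words,
        # Compare calendar dates only (first 10 chars of ISO 8601 strings).
        "dates_consistent": bool(modified and visible and modified[:10] == visible[:10]),
    }
```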

Traffic strategy: target overview-cited positions that resolve to your deeper assets—calculators, configurators, local inventory, downloadable templates—where the overview cannot satisfy intent. Measure change in “Referenced by” patterns via entity tracking and look for anchor text shifts that mirror your primary terms. For accelerated support aligning content with AI-driven surfaces, consider our AI search optimization programs.

We’ve seen 5–15% click-through recovery on affected queries by reframing intros into definitive answers and surfacing utility content within the first viewport, even when the overview captured the top fold.

Fixes 10–11: Internal links and measurement

Fix 10: Rebalance internal linking and sitemap coverage. Internal link architecture is the fastest lever to re-teach crawlers what matters. Every hub should receive diversified, context-rich links from siblings and children, not just global nav. Remove low-value pagination from sitemaps; include only canonical, indexable URLs that represent the best page for a topic.

Build a link plan per hub with tiered anchors (exact, partial, semantic variant) and avoid over-optimizing. Where sections underperform, add “related learning paths” with programmatic links to supporting guides. On very large sites, deploy a link component that chooses the top N destination candidates per template using a relevance score (BM25 or embedding cosine similarity) bounded by a max-children rule to avoid over-dilution.
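A link component like this can start small. The sketch below swaps in plain term-frequency cosine similarity as a stand-in for BM25 or embedding similarity, and treats the max-children rule as a cap on suggested links per source page. The min_score cutoff is an arbitrary assumption to tune against editorial review.

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Bag-of-words term counts (a simple stand-in for BM25 or embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def related_links(pages, max_children=3, min_score=0.1):
    """pages: url -> body text. Returns url -> up to max_children related urls."""
    vectors = {url: vectorize(text) for url, text in pages.items()}
    plan = {}
    for src, vec in vectors.items():
        scored = [(cosine(vec, other), dst) for dst, other in vectors.items() if dst != src]
        scored = [(s, d) for s, d in scored if s >= min_score]
        scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best first, stable ties
        plan[src] = [dst for _, dst in scored[:max_children]]
    return plan
```

The all-pairs comparison suits a single section. On very large sites you would restrict candidates to the same hub or subhub before scoring.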

- Architecture: 3-tier hub structure (Hub → Subhub → Leaf) with breadcrumb schema aligning to URL paths.

- Context links: 3–5 body links per 800–1,200 words to relevant siblings or parents; avoid footer-only linking.

- Sitemap policy: One XML per section; update daily only if content changed; keep each sitemap file to ≤50,000 URLs and ≤50 MB uncompressed, and tie sections together with a sitemap index.

- Orphan targets: Sweep weekly for pages with zero internal inlinks; add at least two contextual links or retire.
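The sitemap policy can be enforced by generating files from a filtered URL list. A minimal sketch, assuming entries already contain only canonical, indexable 200 URLs with meaningful lastmod dates. It chunks at the 10k cap suggested in Fix 2 (the protocol allows up to 50,000) and writes a sitemap index. File naming and base_url handling are illustrative.

```python
from xml.sax.saxutils import escape

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XML_DECL = '<?xml version="1.0" encoding="UTF-8"?>'
PROTOCOL_MAX_URLS = 50_000  # per-file limit from the sitemaps.org protocol


def xml_text(value):
    # Sitemaps require &, <, >, ' and " to be entity-escaped.
    return escape(value, {"'": "&apos;", '"': "&quot;"})


def build_sitemaps(entries, base_url, max_urls=10_000):
    """entries: (loc, lastmod) pairs. Returns {filename: xml_string}."""
    if not 0 < max_urls <= PROTOCOL_MAX_URLS:
        raise ValueError("max_urls must be between 1 and 50,000")
    files = {}
    for start in range(0, len(entries), max_urls):
        name = f"sitemap-{start // max_urls + 1}.xml"
        rows = "".join(
            f"<url><loc>{xml_text(loc)}</loc><lastmod>{lastmod}</lastmod></url>"
            for loc, lastmod in entries[start:start + max_urls]
        )
        files[name] = f'{XML_DECL}<urlset xmlns="{SITEMAP_NS}">{rows}</urlset>'
    index_rows = "".join(
        f"<sitemap><loc>{xml_text(base_url + name)}</loc></sitemap>" for name in files
    )
    files["sitemap-index.xml"] = (
        f'{XML_DECL}<sitemapindex xmlns="{SITEMAP_NS}">{index_rows}</sitemapindex>'
    )
    return files
```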

Fix 11: Instrument recovery and iterate weekly. Recovery isn’t a single deploy; it’s a cadence. Set up dashboards aligning Search Console (impressions, average position) with your log-based crawl data and RUM-based CWV. Create a “Post-Core Update” segment for affected queries and watch for the trend lines to stabilize. Use PPC or Demand Gen (formerly Discovery) campaigns to test revised titles/meta for CTR lift signals that you can roll back into organic.
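The "Post-Core Update" segment can be tracked as a weekly index against the pre-update baseline. A minimal sketch, assuming daily (day, query, impressions) rows from a Search Console export and a list of affected queries you defined during diagnosis. Weeks are counted from the update day, and a partial first or last week will distort the numbers, so use full weeks where possible.

```python
from collections import defaultdict
from datetime import date


def weekly_recovery(rows, affected_queries, update_day: date):
    """rows: (day, query, impressions) tuples.

    Returns post-update weekly impressions for the affected segment as a
    percentage of the average pre-update week.
    """
    affected = set(affected_queries)
    pre, post = defaultdict(int), defaultdict(int)
    for day, query, impressions in rows:
        if query not in affected:
            continue
        week = (day - update_day).days // 7  # negative before the update, 0+ after
        (post if week >= 0 else pre)[week] += impressions
    if not pre:
        raise ValueError("need pre-update rows to build a baseline")
    baseline = sum(pre.values()) / len(pre)
    return {week: round(100 * total / baseline, 1) for week, total in sorted(post.items())}
```

A series that climbs and then flattens, such as 60, 72, 80, 81, is the stabilization signal to look for before starting the next round of changes.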

Measurement checklist: track canonical selection error rate (

Operationally, we retrofit a low-overhead QA gate: block a deploy if the bundle exceeds X KB, if the CLS delta exceeds 0.02, or if the sitemap introduces more than Y new parameterized URLs. Those simple gates have saved recoveries from avoidable regressions more than any other habit.
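The gate itself is a few comparisons that a CI step can run before deploy. In this sketch, max_bundle_kb and max_new_param_urls stand in for the unspecified X and Y, so they deliberately have no defaults. Only the 0.02 CLS delta comes from the text. How you measure bundle size and CLS delta (for example, from Lighthouse CI runs) is left to your pipeline.

```python
def count_new_parameterized(old_sitemap_urls, new_sitemap_urls):
    """URLs with a query string that appear in the new sitemap but not the old one."""
    return sum(1 for url in set(new_sitemap_urls) - set(old_sitemap_urls) if "?" in url)


def deploy_gate(bundle_kb, cls_delta, new_param_urls, *,
                max_bundle_kb, max_new_param_urls, max_cls_delta=0.02):
    """Return blocking reasons; an empty list means the deploy may proceed.

    max_bundle_kb and max_new_param_urls correspond to the unspecified X and Y
    thresholds, so they must be set per site.
    """
    reasons = []
    if bundle_kb > max_bundle_kb:
        reasons.append(f"bundle {bundle_kb} KB > {max_bundle_kb} KB")
    if cls_delta > max_cls_delta:
        reasons.append(f"CLS delta {cls_delta} > {max_cls_delta}")
    if new_param_urls > max_new_param_urls:
        reasons.append(f"{new_param_urls} new parameterized sitemap URLs > {max_new_param_urls}")
    return reasons
```

Failing the CI job whenever the returned list is non-empty keeps the gate cheap to run and the failure message self-explanatory.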

FAQ: Rapid answers for stressed teams

Below are concise, data-backed answers to the most common recovery questions we field during the immediate aftermath of a Google core update. Use them to align your leadership, product, and engineering teams around realistic timelines and the most leverageable fixes in the first two to four weeks after impact.

How do I confirm a core update caused my traffic drop?

Correlate the drop window with Google’s published update dates and look for cross-site chatter peaking the same days. Then segment your data: if losses are broad across queries, pages, and geos without technical errors, it’s likely algorithmic. Validate with logs: steady Googlebot hits with falling impressions suggests reweighting, not crawl blockage.

What’s the fastest fix if Core Web Vitals regressed?

Target the largest pain first: reduce INP below 200ms by deferring non-critical JavaScript, trimming hydration, and eliminating expensive event handlers. Move your hero image into HTML and preload it to improve LCP. Prioritize field data improvements; CrUX reports a rolling 28-day window, so expect partial movement within one to two weeks and the full effect after about four weeks.

Should I delete underperforming content after an update?

Not immediately. First consolidate cannibalizing pages into a stronger canonical and 301 the rest. Where content is thin or off-intent, rewrite with clear expert attribution, unique data, and specific answers. Only remove pages that deliver no clicks, links, or strategic utility after consolidation. Deletions without consolidation risk losing accumulated signals.

Do I need to disavow toxic backlinks to recover?

Usually no. Google’s guidance emphasizes the disavow tool for manual action scenarios or clear link scheme patterns you control. Most core update drops are quality and intent-related, not link penalties. Focus on link reclamation, internal link restructuring, and earning editorial citations through data assets. Use disavow only for demonstrably harmful, self-made links.

How do AI Overviews affect my search visibility?

They compress attention on fact-seeking queries and reward authoritative, structured, and clearly written pages. Optimize to be cited—add concise answer blocks, up-to-date facts, and schema that clarifies entities and relationships. Then route users into deeper utility assets (tools, local inventory, calculators) where the overview can’t fully satisfy intent, protecting click-through.

What recovery timeline should leadership expect?

Assuming you deploy high-impact fixes in week one: technical corrections show within days, Core Web Vitals within one to two weeks, and content/EEAT rebuilds over two to six weeks as recrawl and reweighting propagate. Full recovery (or net growth) typically takes four to eight weeks if measurement stays tight and regressions are prevented.

Regain compounding visibility with onwardSEO

Core updates reward teams that move quickly and precisely. If your search visibility dipped, we’ll separate algorithm impact from technical failure within 48 hours, then execute fixes that compound: crawl budget control, Core Web Vitals hardening, schema-driven EEAT, and AI Overviews readiness. onwardSEO’s frameworks deliver measurable deltas and predictable SEO recovery cadences. Engage us for focused engineering backlogs, transparent diagnostics, and durable ranking wins aligned to revenue outcomes.